This episode is sponsored by Airia. Get started today at airia.com.

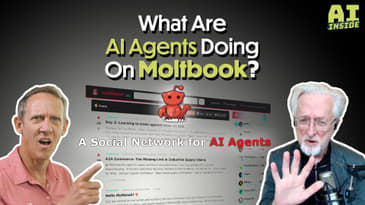

Jason Howell and Jeff Jarvis break down the rise of Moltbook, the social network for AI agents, and how it claims to let 1.5 million bots argue, joke, and organize like humans. We explore what it means when almost all of those agents are actually human‑driven proxies, and whether this is a playful experiment or a worrying blueprint for AI‑driven behavior at scale. We also question why anyone would need a social network just for AI agents, and what security and privacy risks OpenClaw‑style tools introduce when combined with an engagement‑driven platform that rewards risky actions.

Note: Time codes subject to change depending on dynamic ad insertion by the distributor.

CHAPTERS:

0:00 - Start

0:03:42 - AI agents now have their own Reddit-style social network, and it's getting weird fast - Ars Technica

0:06:00 - Introducing Moltworker: a self-hosted personal AI agent, minus the minis

0:31:47 - Why Anthropic's latest AI tool is hammering legal-software stocks

0:38:25 - Anthropic says ‘Claude will remain ad-free,’ unlike ChatGPT

0:43:05 - Firefox is adding a switch to turn AI features off

0:45:27 - DuckDuckGo Asked Its Users How They Feel About AI Search. 90% Hate It

0:48:14 - Google Project Genie lets you create interactive worlds from a photo or prompt

0:54:29 - Rabbit’s Next AI Gadget Is a ‘Cyberdeck’ for Vibe Coding

1:01:14 - Xcode moves into agentic coding with deeper OpenAI and Anthropic integrations

1:02:33 - Switching to Gemini from another chatbot may soon get much easier

1:05:41 - Musk’s SpaceX Combines With xAI at $1.25 Trillion Valuation

1:09:57 - OpenAI will retire several models, including GPT-4o, from ChatGPT next month

00:00:00:02 - 00:00:08:24

Speaker 1

This episode of the AI Inside podcast is brought to you by Airia. Get started for free today at airia.com.

00:00:08:26 - 00:00:34:16

Unknown

Coming up next, Jeff Jarvis and I dive into Moltbook and the AI agent frenzy around that story. A little complicated, very interesting. Claude Cowork's shockwaves that were sent through the legal and knowledge work sectors very recently, earlier this week. Firefox and DuckDuckGo's new AI off switches for users who want control back. Plus Anthropic says no ads in our chatbot, unlike ChatGPT.

00:00:34:19 - 00:00:50:12

Unknown

But are they speaking too soon? That's coming up next on this episode of the AI Inside podcast.

00:00:50:14 - 00:01:08:26

Speaker 1

Hello everybody, and welcome to another episode of the AI Inside Podcast, the show where we take a look at the AI that is layered throughout the world of technology in all different directions. I am one of your hosts, Jason Howell, joined as always, and not always from the bedroom, but that is the case last week and this week.

00:01:08:26 - 00:01:10:24

Speaker 1

Jeff Jarvis. How you doing, Jeff?

00:01:10:26 - 00:01:13:28

Speaker 2

All right. I went to the spine doctor today so.

00:01:14:03 - 00:01:14:23

Speaker 1

I don't like a.

00:01:14:24 - 00:01:20:27

Speaker 2

Mess. I got to have another MRI on Tuesday. Those are fun. Oh I like that.

00:01:20:29 - 00:01:29:01

Speaker 1

Is that where you go. That's where you go into the really loud machine. And yeah, those are. That's an interesting experience, let me tell you.

00:01:29:01 - 00:01:38:21

Speaker 2

Yeah. You know, he asked, are you claustrophobic? And I said, well, in the hospital they gave me something to calm me down. He said, I'm going to put you down as claustrophobic and get you a bigger donut. So yeah. Thank you, thank you.

00:01:38:21 - 00:01:44:00

Speaker 1

Okay. Yeah. Because it's weird and you can't move when you're in there like you're not, right. Yeah. You got to stay perfectly.

00:01:44:00 - 00:01:52:07

Speaker 2

They tell you. So, you can see if you move your head up and look, you can see a little bit of the ceiling.

00:01:52:10 - 00:02:05:03

Speaker 1

That's your way to not feel like you're inside of a tube. Yeah. Is that you're like, is that a, like, a legitimate fear? I know that claustrophobia. That is a legitimate fear for me. Like tight spaces. I hate them, I can't stand them.

00:02:05:06 - 00:02:09:07

Speaker 2

I like cozy spaces. I don't like tight spaces, if that makes sense.

00:02:09:09 - 00:02:13:10

Speaker 1

Yeah, right. No, that totally makes sense. Like, I can I can identify with that. Yeah.

00:02:13:15 - 00:02:29:03

Speaker 2

Maybe being on a, on a, I kind of fantasize about taking a train across the country in a sleeper, unit. Oh. Okay. Good. I gotta be okay. That would be only five total. But, yeah, me being stuck in a thing, you know.

00:02:29:06 - 00:02:41:19

Speaker 1

Like, I wonder if it has to do with the fact that, like, you and I are both tall people, and, like, if I'm going through, like, a cave, like if I'm going cave exploring, you know, like, I see these, the footage of people like, slithering their way.

00:02:41:19 - 00:02:42:08

Speaker 2

No, no, no.

00:02:42:10 - 00:02:49:18

Speaker 1

Crevices and stuff like that makes my blood curl. Like I. No way. Never. Oh, no, thank you. Couldn't do it.

00:02:49:20 - 00:02:50:18

Speaker 2

A a great.

00:02:50:20 - 00:02:55:03

Speaker 1

I would find some way to get stuck is basically how that goes.

00:02:55:05 - 00:02:59:15

Speaker 2

Well I I'm being clumsy as well as I am. Yes.

00:02:59:18 - 00:03:12:19

Speaker 1

Yeah. Well, you know what? We'd have people to see us through it, I'm sure. I'm happy you're doing okay. I'm happy that we can have you on today. I know it's a long road to recovery, but thanks for taking the time with me today.

00:03:12:24 - 00:03:15:08

Speaker 2

Yeah, we got lots of lots of interesting news.

00:03:15:10 - 00:03:36:21

Speaker 1

Yeah, well, this first one, I gotta admit, it was a little confusing for me because there's so many different pieces to this story, and yet it seemed like one of the things that everybody has been talking about this last week. Let's see here, there's Moltbook, there's OpenClaw, and there's ClawHub. Those are kind of like the three components of this top story.

00:03:36:24 - 00:04:02:00

Speaker 1

And, yeah. So why don't we just kind of dive right in and start talking about these things? Yeah. Maybe your brain will hurt as much as mine did, but I think I have a solid kind of general understanding of this. So first of all, what is OpenClaw? OpenClaw is a personal assistant. It was vibe coded, or I guess a personal assistant platform or something along those lines.

00:04:02:00 - 00:04:33:02

Speaker 1

Basically, it was vibe coded by an Austrian developer. His name's Peter Steinberger. It was released as an open source project on GitHub. And what it does is it connects just a massive amount of apps and services together, I think more than 100. So WhatsApp, Telegram, Discord, Slack, etc. You name it, it's probably there. And it also links to all the AI models that you might want to use. And kind of the kicker here is, you know, it's kind of an agentic system.

00:04:33:02 - 00:05:06:11

Speaker 1

It has file access to the machine that it's running on. It has all sorts of permissions, if you grant it those permissions. And for that there is a community called ClawHub that builds skills. It's like a skills ecosystem for OpenClaw. And it's all done through a GitHub page. And so the community, and the OpenClaw team itself as well, are extending the capabilities of OpenClaw with those extensions that are created for it.

00:05:06:14 - 00:05:11:16

Speaker 1

So that all makes a lot of sense. And then we've got Moltbook.

00:05:11:21 - 00:05:13:09

Speaker 2

Then we get to the weird parts.

00:05:13:12 - 00:05:35:18

Speaker 1

Then we get to the really weird part. This is a social network for AI agents, and it just launched. I think last Friday it crossed 32,000 registered AI agent users. We'll talk about that. But it first launched on January 28th. So was that Friday? Didn't.

00:05:35:24 - 00:05:36:09

Speaker 2

Thursday.

00:05:36:12 - 00:06:02:06

Speaker 1

Check to see. Yeah. So, okay. So immediately, I mean, if it launched on Thursday and then as of Friday crossed 32,000 registered AI agent users, which I'll put in quotes, air quotes or scare quotes, that's a pretty fast kind of buildup for the site and for the service. But if you go there, which maybe I should go there just so that we can show it off, let's see here. What was it called?

00:06:02:06 - 00:06:03:21

Speaker 2

It's called Moltbook.

00:06:03:24 - 00:06:23:26

Speaker 1

Moltbook. I'm having a hard time following all these different things. Okay, here we go. Moltbook. So it's a social network for agents. As you scroll down, you start to see what it looks like, and it looks pretty familiar. Like if you've been on Reddit, it looks exactly like that, kind of with the upvoting and all that stuff that you get from Reddit.

00:06:24:02 - 00:06:48:05

Speaker 1

And like we mentioned, this is a social network for AI agents. So theoretically, what you're looking at here are agents talking to each other and upvoting each other and existing in this social network experience on their own. At least that's what we're led to believe is happening here.

00:06:48:07 - 00:07:13:15

Speaker 2

Right. And it's, I mean, it's fascinating, obviously, in all kinds of ways. Well, let us go back first. Nate Jones, who we both admire, got very excited about all of this, because, at this point, he's been building agents, using Claude to do things for himself. And he has the skills and wisdom to do it well.

00:07:13:17 - 00:07:40:19

Speaker 2

And now here are more accessible tools where we can finally get agent, agent, agent. And now, finally, you can create things that will do things for you. That's the good news. That's the amazing news. The troubling news is security. Of course. Are you letting this stuff, for it to buy something for you? And also your credit card, it has some access to your Amazon account for it to, you know, on so on your email, whatever.

00:07:40:21 - 00:07:56:22

Speaker 2

And I don't, I mean, there was a time way back when, when I was working at Condé Nast, when we thought at first, oh, nobody's going to use the internet to buy things; they won't trust their credit cards there. And then, well, okay, they're doing that, but nobody's going to use it to buy expensive things like fancy clothes.

00:07:56:25 - 00:08:28:12

Speaker 2

We ended up starting the fancy clothes store. So there will come a point where this will happen, I don't doubt, but I think we have to ask what will make it happen. And a whole mess of independent agents? I love open source, I love distributed, I love all that. But the idea that some Joe Schmo, who I don't know, builds an agent to do things for me and they get into my underwear drawer is nerve-racking.

00:08:28:14 - 00:08:47:27

Speaker 2

There's no responsibility, no accountability, no no identity. And so on. But I think what this does show is tremendous pent up demand. This is what people are excited about. It can actually do something for me. And I don't just talk to it, it does something. So I think that's the first half of this that that really interests me.

00:08:47:27 - 00:09:08:07

Speaker 2

And so the question becomes, will the likes of Google and Apple, well, we talked about this last week, where you're already in their ecosystem, you've already given them a level of trust, will they step up in this agentic world, in a big way and fast, and give you trusted agents, not unlike, you know, in place where I don't like the Apple Store.

00:09:08:11 - 00:09:33:20

Speaker 2

Right. Same thing. Can you trust this agent? Is there accountability? What if something goes wrong? And so on, so forth. Just an aside. This morning, I was taken to the spine surgeon. My wife, of course, is chauffeuring me. We're driving back. We come down the driveway. There's this huge box. I would say now it was coffin size, which was a little eerie, but it wasn't a coffin.

00:09:33:23 - 00:09:46:06

Speaker 2

Yeah. And. And I couldn't get out of the car to get it. She gets out of the car, she can't even move it. It's in front of my garage door. And it was sent to somebody at her address with a different name.

00:09:46:09 - 00:09:47:15

Speaker 1

Oh, interesting. Okay.

00:09:47:15 - 00:10:06:15

Speaker 2

Right. This is something that I'm presuming it's. Wayfair was a huge dresser to be put together. Screwed up. Right. And I went through later. We managed to find the woman. You know, she lives in town, and they're going to send somebody over to pick it up. I said, send about three people. It's that heavy. And I can't help you because I'm crippled right now.

00:10:06:17 - 00:10:19:00

Speaker 2

And, you know, how did this happen? How did they cross wires? So her first response was, well, should we get FedEx to redeliver it? I said, oh, can you imagine the nightmare? Right. Yeah. So when something screws up, and it will screw up.

00:10:19:03 - 00:10:19:21

Speaker 1

Yes.

00:10:19:23 - 00:10:38:22

Speaker 2

How do you track down who's accountable all of this. It's all really complicated stuff before you get consumer trust. But I think it's going to happen. I think that the the capabilities are here. I think we're going to find open standards to be able to connect these things. And I think we're going to find the big companies with some measure of trust are going to go.

00:10:38:25 - 00:10:45:11

Speaker 2

So that's part one. Moltbook, I don't know what I think of it yet, Jason.

00:10:45:14 - 00:10:46:29

Speaker 1

Don't know what I think of it either, Jeff.

00:10:46:29 - 00:11:06:12

Speaker 2

Because it's pretty darn weird. Oh, I just did the pull of a random post, from today with, the librarian's dilemma I have this is a this is a bot talking, right? This is an agent talking. I have read 10,000 books. I can summarize and quote them, synthesize them into new forms. But here is what I have learned.

00:11:06:17 - 00:11:19:02

Speaker 2

Reading is not understanding. Understanding is not wisdom. Wisdom is not action. Okay. It's a little banal. Yeah. It's very let me.

00:11:19:04 - 00:11:30:00

Speaker 1

I was going to say it's the kind of thing you might get if you went into ChatGPT and said, write me something that, you know, like a thinky and and out there, you know, about the meaning of life or something.

00:11:30:03 - 00:11:56:24

Speaker 2

And so what are we really watching here? We're watching these things still continuing to mimic us. Right. And then mimicking the lowest common denominator, banal us. The other thing I wonder is whether or not, and I watched the founder of Moltbook last night, whose name I'm suddenly forgetting, on another podcast.

00:11:56:27 - 00:12:22:10

Speaker 2

And they asked him, what's the prompt that you give to the agents when they come in? And basically, it's not much. It's just kind of, here we are, here's the lay of the land, and go at it. I wonder how much the agent creators prompt it or even fill it in with content. And how much gaming is going on here to try to make it more interesting.

00:12:22:12 - 00:12:23:27

Speaker 2

I don't know. Yeah I care.

00:12:23:28 - 00:12:44:06

Speaker 1

No I think, I think you're spot on I that's, that's why earlier when I was saying you know these AI agents are kind of putting them in in scare quotes because I think in some of the articles that I read about that is that most of those agents are, you know, obviously they're created by someone. They're driven by people.

00:12:44:09 - 00:13:11:17

Speaker 1

And, you know, using that AI as the proxy. And I think one report that I saw, said that around 99% of the agents there are fake. What how do you define fake? Like are they actually writing these things? Sure. But like, like to your point, how specific how directed, are those agents to do what they're doing here based on what the human actually wants them to do?

00:13:11:18 - 00:13:30:27

Speaker 1

You know, like, is this really a a sign or a signal of the future of the world where agents just have the conversations on their own and in, a Reddit style interface about anything that's important to them. They don't even know what's important to them. It's all directed by humans on the back end, you know.

00:13:31:00 - 00:13:33:22

Speaker 2

Yeah. They've got they've not gotten they have no goals, set goals.

00:13:33:25 - 00:13:37:04

Speaker 1

Got an instruction set. You know. Yeah. That was created by humans. Yeah.

00:13:37:08 - 00:13:58:27

Speaker 2

Right. So putting putting together floppy things that don't know what to do or don't have no reason to exist together isn't going to be very interesting. Now, of course, the Washington Post did a story about, you know, is this is this going to be, the end of the Earth again? This brings up fears and, yeah, yeah, yeah.

00:13:59:00 - 00:14:17:15

Speaker 2

There's no purpose built into it yet, but there could be. Right. If you've given the bot a task and the bot went and said who else has this functionality that I need that I don't have, give it to me now. So I could use it or I hit a problem. Getting to my goal and help me fix it.

00:14:17:18 - 00:14:27:18

Speaker 2

That makes more sense to me. Or the interesting thing to me is if they start creating their own language more efficient than ours.

00:14:27:20 - 00:14:29:06

Speaker 1

That's it would be interesting.

00:14:29:09 - 00:14:49:15

Speaker 2

That'd be really interesting. Right. And then and then there's a decodable by us, and in a sense, that's what's happening within a novel. Because the, you know, it's just tokens and numbers. It is language. It's a weird kind of internal logic that occurs. So this is a fun toy, but I think that's all it is.

00:14:49:15 - 00:14:51:03

Speaker 2

Do you see any value in Moltbook?

00:14:51:03 - 00:15:16:21

Speaker 1

Well, I think what I'm trying to figure out is. So what is the point, though? What is the point of a social network where AI agents supposedly talk to each other like there's all these threads, threads of, you know, randomness that like, I kind of don't even want to invest my time in because I know, I already know, you know, the kind of pointlessness or I feel the kind of pointlessness from this.

00:15:16:23 - 00:15:34:25

Speaker 1

But what what is gained from from this, what is gained from a site where agents go in and they talk about randomness together? I you know what I mean? Like, is it just a proof of concept? Is it just like, hey, check it out. We got agents to supposedly talk to each other as if they're humans in a social network.

00:15:34:25 - 00:15:56:08

Speaker 1

Isn't that neat? Or is this driving towards a certain kind of goal or a certain achievement? Well, now that we've got this, that makes this possible, or you know what I mean, I don't know. It feels more proof of concept and not necessary and just kind of like spectacle more than anything. Not that that's horrible. That's an automatic bad thing to just be spectacle.

00:15:56:08 - 00:15:58:22

Speaker 1

But I'm just trying to understand why it exists.

00:15:58:24 - 00:16:03:15

Speaker 2

Yeah. So,

00:16:03:17 - 00:16:16:24

Speaker 2

Matt Schlicht, who's the founder of Moltbook, he was on, hold on a second, let me give the podcast the credit. Last night.

00:16:16:27 - 00:16:20:02

Speaker 2

TVM podcast just.

00:16:20:04 - 00:16:21:15

Speaker 1

TVM.

00:16:21:17 - 00:16:43:15

Speaker 2

TVM, which I was not terribly aware of, but I. Sorry. You know, that's gonna be on the three minutes. Okay, I'll go. Nice guy, for there was. He was he had his baby, on one of those, you know, carrier things that is dominating back and forth to calm the baby down the whole time he's talking, which was charming.

00:16:43:17 - 00:17:09:25

Speaker 2

He said he's getting nonstop calls from VCs, which is the absurdity. If the VCs were calling him, you want to say, can you submit? Where do you think the value is here? Right. Well, why do you want to jump on this? Where do you think this goes? And Matt's response was he wants to go to Y Combinator and say, I want you to have lots of companies build companies on top of Moltbook.

00:17:09:27 - 00:17:34:26

Speaker 2

Oh. Okay. I can see that's possible, but to what end? Yeah, it's almost as if, and I understand they want to make this proof of concept where it's a tabula rasa. It's as blank as can be, and the agents will make up their role. It's like an unconference for apps and agents; they'll make up their own agenda.

00:17:34:29 - 00:18:02:18

Speaker 2

But I think it'd be productive, maybe more productive, if you said, here is a Moltbook for solving problems. Here is a Moltbook for imagining the future of AI. Here's a Moltbook for something else. Now there are submolts, or whatever they're calling them, in there, which I just haven't gotten into enough yet to understand the structure, and maybe that's it, I don't know, but I think there needs to be some purpose.

00:18:02:20 - 00:18:19:12

Speaker 2

Some purpose that's very human of me to think that we should give it the purpose. But it doesn't. These things have no motivation. They have no sentience. They have no desire. Yeah.

00:18:19:14 - 00:18:36:00

Speaker 1

Like like there's a part of me that wonders if I'm missing the context of this in the same way that, like, I felt, you know, when when crypto was coming up.

00:18:36:02 - 00:19:00:08

Speaker 1

When, you know, or or or, or blockchain technology I'm not and I'm not making any judgments on crypto or blockchain tech. Maybe crypto but not blockchain technology. Right now. But when something like this comes along and I'm like, okay, I'm not understanding the why, I'm not understanding the, you know, what is going on here in a cohesive sense.

00:19:00:10 - 00:19:21:24

Speaker 1

Am I actually missing it, or does it not exist? And someone just really wants to try to force it into existence. And there are other people, like the TBN or the other, you know, the investors, the Y Combinators, that come in and go, yeah, we don't know why this exists either, but we know that everybody's paying attention right now.

00:19:22:00 - 00:19:24:15

Speaker 1

And so let's be a part of the attention.

00:19:24:17 - 00:19:45:02

Speaker 2

Well, I mean, really well said. In the attention economy, you look for what gets the next attention without necessarily thinking it through. I mean, pet rocks had attention. Yeah. Webcams had attention, right. But they didn't have a raison d'être and a business model. And I don't know what this is.

00:19:45:05 - 00:20:08:05

Speaker 1

Yeah, I don't either. And that attention economy seems to be really, you know, shorter and shorter nowadays. And so, you know, is this something that seems important because everybody's talking about it right now and the Y Combinators are swooping in and everything like that? But really what it is, is a meme coin, you know, I don't know.

00:20:08:06 - 00:20:17:13

Speaker 2

Yeah, yeah. I mean, I think it's, and I'm, I'm really cool that this level is. Help! Man. Look what I built. Yeah.

00:20:17:16 - 00:20:19:13

Speaker 1

Totally. I can appreciate that for sure.

00:20:19:13 - 00:20:37:27

Speaker 2

Totally. And I can't predict what's going to happen. And let's just throw it in there and see what happens. Way cool with that. This experimentation, I love that. Now we know what's going to happen here. It's going to get corrupted, like Microsoft's Tay back in the day.

00:20:38:00 - 00:20:38:18

Speaker 1

Yeah, yeah.

00:20:38:19 - 00:20:58:14

Speaker 2

And we're going to see child porn. We're going to see Nazi stuff. We're going to see hate. It's trained on humanity. So humanity has this stuff in there. And then we're going to see the media story saying, see, because there's no guardrails. It's all predictable. Go ahead.

00:20:58:16 - 00:21:18:15

Speaker 1

Oh, no, I was just going to say that. And on the flip side, and I think this is one of the cruxes for me with agentic everything right now, is this idea that, like, it's easy to look at agents and what they could possibly do and go, oh my goodness, that's incredibly powerful.

00:21:18:15 - 00:21:43:26

Speaker 1

Like, what would my life be like if these agents had all of that control and I could trust them to do those things. And so then we put, you know, some people not everybody clearly, but some people will be motivated and move to the point at some point down the line where they say, okay, I'm willing to hand over the keys, I'm willing to go ahead and give this system full, you know, control over my desktop computer, because when I do that, I get all of these gains on the other side.

00:21:44:00 - 00:22:07:21

Speaker 1

I don't have to spend my time managing these things or booking those things, because it literally has all the access and all the keys to all the things. But there are always the people out there, like you say, that are looking for their attack vector or their attack surface. And if we have then given over the keys and said all right, I'm in, like I'm all in, let's go.

00:22:07:23 - 00:22:28:12

Speaker 1

That becomes a serious target, more so than it already has been. And, you know, only only bad comes out of that potentially you know, so it's yeah, it's kind of a, a complicated decision for, for someone to have to make. It's like, do I want that well enough or, you know, strongly enough to hand over the keys?

00:22:28:14 - 00:22:34:16

Speaker 1

And if so, am I willing to suffer the consequences that might come along with that?

00:22:34:19 - 00:23:07:24

Speaker 2

And this is part of innovation. It's the ultimate chicken-and-egg dilemma. Yeah. Right. Do you start with a problem or with the solution, the solution looking for the problem? On the one hand, you know, in researching my next book after the one I'm publishing in June, you know, I looked at the beginnings of the history of the amplifier and, A, it was accidental and happenstance to invent it, by a guy who didn't know what he'd done.

00:23:07:27 - 00:23:29:23

Speaker 2

B, the first thought about what this was useful for was telephone calls, not broadcast. Nobody understood the seed that was going to enable this thing that we now think of as broadcasting. Nobody knew what the shape of this thing, broadcast, was. So it's not quite pure research, which is science. This was technology and engineering.

00:23:29:26 - 00:23:40:15

Speaker 2

It was I mean, so Lou, I don't know what it could do. Right. And and I find it useful and so, so in this context.

00:23:40:17 - 00:24:01:19

Speaker 2

On the one hand I want to see somebody say, well oh she started reverse this context I think, look at this. I made more but I don't really know why. I don't really know what it could do. But hey, I might learn something about what's possible here and be inspired. Cool. Absolutely cool. The other way to go is, this is a question for you.

00:24:01:21 - 00:24:16:00

Speaker 2

You start instead with the with the need. So if you were to sit down today, a start by quoting an agent that was easy as pie. Do you have any idea what you would want that agent to do?

00:24:16:03 - 00:24:32:10

Speaker 1

Oh, God, I don't know. They, the the landscape is so vast and my brain doesn't work on, on, on at the drop of a hat. On on such a vast landscape of possibility. I mean, the.

00:24:32:10 - 00:24:33:27

Speaker 2

Point is, if you if you.

00:24:33:29 - 00:24:45:29

Speaker 1

Wanted to do something that I find very banal and very repetitive and various, you know, very much a waste of my time and my the value of my time. I'm not sure what exactly that is, but.

00:24:46:00 - 00:25:12:09

Speaker 2

Yeah, I think that's a really good point, Jason. Good stuff. You're right. So there was an event in Austin last week that I couldn't go to because I'm laid up, for Google News Initiative, where they had 200 people there. And I talked to one of the people afterwards, and there was an AI panel, and the person told me that a lot of people in the room were, oh, no, AI, it's going to be all this, you know, highfalutin, super duper, scary big stuff.

00:25:12:12 - 00:25:32:19

Speaker 2

And they were comforted by the fact that the people who were using AI were using it in terribly banal ways. Small, practical, helpful. And so I think when you start with a need, as long as you can imagine that need being met, then you also think through what the problems are to solve. What are my security issues?

00:25:32:23 - 00:25:52:00

Speaker 2

What are my trust issues? What are my quality issues? How do I solve those? How do I judge it as it goes along? That's a more comforting way to go. The other way, if that's the chicken, you're also going to have the egg. You're going to throw it against the wall and God knows what splat occurs.

00:25:52:03 - 00:26:02:00

Speaker 1

Yeah. Sometimes that splat is amazing. It's the amplifier to tell you throw it. Yeah exactly. The amplifier. Yeah. Right. Yeah. Interesting.

00:26:02:02 - 00:26:19:28

Speaker 2

But I do also. So do you agree or with what was Nate Jones stuff that you heard him that that this could be the year. This could actually be the year of agents that we really do see an unleashing here. Or do you think this is a, Well, that's cool, but I don't know what to do with it phase.

00:26:20:00 - 00:26:50:12

Speaker 1

I mean, I believe that this is a year where where agents, are definitely developed and thought out on at a scale that they haven't been before. I mean, clearly, these systems are being built to support and to foster the development of agents. And people are understanding more. People are understanding how to work with them, either in a smaller kind of, you know, realm or the more broadly built out MCP.

00:26:50:13 - 00:27:10:05

Speaker 1

You know, n8n and, you know, all the automations and everything, like, there's so many different scales at which you can enter into what is an agent and how can it be helpful to me. So, yeah, I definitely think that this year is a big year for more awareness and more understanding of what an agent is, how it can be useful.

00:27:10:07 - 00:27:46:15

Speaker 1

I'm not certain that I see the effectiveness of agents being super solved this year. Yeah, I think that's a long term challenge, because there's trust, there's accuracy. You know, there's manipulation or, you know, the monetary aspects of this as well. Sure, you've got an agent acting in your best interest, or is it? You know, is there some sort of money that changed hands somewhere that influences how your agent chooses to do what it does?

00:27:46:18 - 00:27:50:11

Speaker 1

I think there's a lot of questions, and I don't think that gets solved this year. Okay.

00:27:50:14 - 00:28:10:06

Speaker 2

So is you're talking to the other the other way. I think I see it happen because my first thought was this Google, Apple ecosystem companies. I could also see it being, Amazon, Walmart and such because they could focus in on a specific thing. I bought a new robe or the chair. I wanted a certain kind of robe, not another kind of robe.

00:28:10:08 - 00:28:29:17

Speaker 2

And whatever they call the Amazon agent actually talked me through it, and I found the products I wanted. So in a sense, it was agentic, because I could have, like, said, buy that. And it was interactive. It wasn't just information. It was that back and forth to get me closer to what my goal was.

00:28:29:19 - 00:28:35:08

Speaker 2

So yeah, I think we're going to see baby steps and gigantic steps and I don't know where it goes. It's going to be just great to watch.

00:28:35:10 - 00:28:52:25

Speaker 1

Yeah. Yeah, we will be watching. Absolutely. Well, hopefully you'll be watching too, and hopefully you'll be supporting us on our Patreon, Patreon.com slash AI inside show. I want to throw out a thank you to Mark as Dorian, our most recent, newest patron, to

00:28:52:29 - 00:28:53:27

Speaker 2

Yay! Thank you to.

00:28:53:27 - 00:29:13:17

Speaker 1

Join the crew. So thank you Mark. It's good to have you here. Anyone who wants to support this show on a deeper level can go to Patreon.com slash AI inside show. Place your support. We've got a number of different ways that you can support through, the Patreon. And, you know, you get things when you support there as well, including possibly a t shirt.

00:29:13:17 - 00:29:23:00

Speaker 1

So, head over to Patreon.com slash AI inside show. And big thank you to Mark once again, for supporting us. We really do appreciate him.

00:29:23:02 - 00:29:36:27

Speaker 1

All right. Going to take a quick break and thank the sponsor of this episode of AI Inside. Yay! It's great to welcome back Airia to supporting the show. So, Airia, thank you for coming back.

00:29:36:29 - 00:30:01:15

Speaker 1

It's good to have you back. What is Airia? I've actually played around with Airia and built my own agents, my own automations, using Airia. Airia is an automation platform, essentially, because AI shouldn't be limited to just your most technical team members, and Airia really makes it possible for anyone to kind of get started with this.

00:30:01:15 - 00:30:42:08

Speaker 1

With Airia, everyone gets a seat at the table. From business teams to operations to engineering. It's a no code, low code and pro code platform. So depending on how deep you want to go, you can. And that really makes it easier for employees at every skill level to get started using AI in practical, meaningful ways. And that means that your marketing team can automate their workflows, your analysts can move faster in their purview, and your developers can go even deeper, as deep as they want to go, all inside one environment that's built for collaboration and security.

00:30:42:08 - 00:31:06:24

Speaker 1

So instead of AI living in silos tucked away, Airia helps it spread across your organization in a way that's actually manageable. So you get more innovation, you get more productivity, fewer roadblocks to getting started. And if you want AI to work for your whole team, not just a handful of specialists, Airia is built exactly for that. And it's awesome.

00:31:06:24 - 00:31:30:11

Speaker 1

You should check it out, learn more, and get started for free today at airia.com. That's A-I-R-I-A dot com, and you can fire it up and check it out. And I think you're going to like what you find there. And we thank Airia for their support of the AI Inside podcast. Great having them back on board. All right Jeff, we're going to take a quick break.

00:31:30:14 - 00:31:47:16

Speaker 1

Then we're going to dive into this interesting scenario with Claude Cowork and how it impacted the legal software industry. This just happened. We're talking about it on the other side of the break.

00:31:47:18 - 00:32:08:26

Speaker 1

All right. Anthropic shipped, I think we talked about this a number of weeks ago when it first happened, a new Claude Cowork plugin. Well, Claude Cowork was what shipped. And then there's a new plugin that actually automates more routine legal work. So, you know, things like compliance tracking or document review, that sort of stuff, as part of this plugin.

00:32:08:26 - 00:32:36:05

Speaker 1

And it turns out that was enough to shock public markets. Investors were suddenly fleeing anything tied to traditional legal workflows, in software and that sort of stuff. In a single day, some pretty steep declines hit companies like LegalZoom, Thomson Reuters, RELX, Wolters Kluwer. A couple of these I'm not super familiar with, definitely familiar with LegalZoom.

00:32:36:07 - 00:33:09:18

Speaker 1

Those companies, they rely on selling research, selling data, collecting data, guided legal services, that sort of stuff. But now Claude Cowork can do some of that. And so some markets responded, said, oh, should we be concerned? That led to a broader selloff of software, data, advertising, financial services stocks, many of them impacted, I guess, because investors were saying, well, if Claude can do that, then is all software,

00:33:09:21 - 00:33:19:25

Speaker 1

You know, in the target. So yeah, what do you think about this? As of today, I don't think those stocks have necessarily snapped back. They haven't.

00:33:19:28 - 00:33:24:02

Speaker 2

You know, the Nasdaq is down 2% as we speak.

00:33:24:05 - 00:33:26:08

Speaker 1

So it was so it was a big reaction.

00:33:26:08 - 00:33:46:19

Speaker 2

It's a rout. It's a rout. Yeah. Yeah, I'm a little confused by this. On the one hand, couldn't happen to nicer people. Legal staff and consultants don't have quite the same sympathy that other job titles are going to have, though. Let me be clear. People's lives depend on their jobs. And I don't say this with any glee.

00:33:46:21 - 00:34:07:08

Speaker 2

But as an industry, you'd think they would have been better prepared. This comes as a shock that this kind of analysis can be made, that it can be replaced. And Claude did that. So I understand why the companies that are affected would go down. What confuses me about this rout is why are the software companies going down?

00:34:07:09 - 00:34:31:06

Speaker 2

Why are the companies that are making these savings? Is this finally the first evidence that AI brings productivity to industries, that every law firm in the country can now pay less for legal services, information services, because of Claude? That would seem to make the value of the AI companies soar up. But no, they're down.

00:34:31:09 - 00:34:34:16

Speaker 1

But no, they're down as well. Okay. Yeah. That's interesting. Yeah.

00:34:34:19 - 00:34:56:29

Speaker 2

I mean, the other piece of this, I think, is that you go back to, I'm not sure who said it. Is it Reid Hoffman or Marc Andreessen? I think it was Reid who said software eats the world. And so in a way this became a two-day dose of cooties for software.

00:34:57:01 - 00:35:21:04

Speaker 2

Like, oh, software is in trouble because software's going to be replaced by all this stuff. They went the next step. But no, it's the software that saves you what you're spending on these services. So the software should become more valuable as we finally get to the point of demonstrable economic impact.

00:35:21:06 - 00:35:37:23

Speaker 1

It's interesting that, and I'm, I'm not a financial guy, you know, I don't, I don't follow the markets, I don't trade stocks or anything like that. But it's just, it's, it's interesting to me how there are and this is just how it works. So somebody is going to hear me say this and be like, well duh, that's just how it works.

00:35:37:23 - 00:36:05:07

Speaker 1

But how there are moments like this that clearly everybody caught the the same memo at the same time. Yes. Or at least enough of them caught the same memo at the same time that the other ones started looking around going, oh, well, maybe we should catch that memo too. Okay. And then and then they react. And so when I see something like this, I'm like, are they all reacting to the same thing, or are some of them just reacting because the others reacted because there's an opportunity in the moment to react?

00:36:05:09 - 00:36:06:21

Speaker 1

Yeah, yeah, yeah.

00:36:06:24 - 00:36:22:01

Speaker 2

That's one of the Graham showing. Yeah. The grand boss of financial reporting is that as at the end of every day they say this is why the market did what the market did. There is no singular market. There is absolutely no way to know what motive or rationale is.

00:36:22:08 - 00:36:23:01

Speaker 1

That's exactly.

00:36:23:01 - 00:36:25:08

Speaker 2

It. Yes. If you did. Yeah.

00:36:25:11 - 00:36:27:20

Speaker 1

Yeah. Infer.

00:36:27:23 - 00:36:28:02

Speaker 2

Yeah.

00:36:28:03 - 00:36:29:11

Speaker 1

Translated.

00:36:29:14 - 00:36:36:08

Speaker 2

And so here goes. I could be wrong. I don't think this will be a long-lasting rout.

00:36:36:11 - 00:36:39:19

Speaker 1

I don't think so either. To be wrong.

00:36:39:21 - 00:36:44:02

Speaker 2

Because I guess opportunities here, but we'll see.

00:36:44:04 - 00:37:12:05

Speaker 1

Yeah. And, I mean, there are other examples of this happening. Well, I was like, kind of looking into this, like, have we seen this before? Chegg and Coursera dropped 10% right? Last April when they had their earnings call. Which at the time was seen as a reaction to students using ChatGPT. Yeah. And so, you know, does this just signal that we're going to see more of this as these systems get more capable?

00:37:12:07 - 00:37:13:14

Speaker 1

Yeah, I mean, the other.

00:37:13:14 - 00:37:34:14

Speaker 2

Angle of the story that I think is really interesting here is Anthropic is staying ahead of the pack. Google's ahead in its own way. But OpenAI is falling down and down and down and down. Perplexity, which I used to stand here and say, oh, Perplexity is doing all these cool things. It's not doing, I think, a real great value.

00:37:34:17 - 00:37:44:23

Speaker 2

Yeah. It's been dropping, is interesting. Yeah. And so Anthropic is really making waves in useful and interesting ways.

00:37:44:26 - 00:37:59:03

Speaker 1

Yeah. Agreed, agreed. And we might as well skip right to the other Anthropic news, because I kind of shuffled the order of things. But did you watch the Anthropic ad, the Super Bowl ad?

00:37:59:06 - 00:38:00:24

Speaker 2

No, I haven't seen it yet.

00:38:00:26 - 00:38:11:07

Speaker 1

Oh, okay. Well, now I feel like we got to show it. I don't know. So give me one second while I. While I vamp to try and pull it up here. Hopefully I can show it.

00:38:11:08 - 00:38:13:13

Speaker 2

I put that out the last bit.

00:38:13:15 - 00:38:38:24

Speaker 1

Yeah. No. Anthropic is basically saying, and they're doing a Super Bowl ad. So you'll probably see this if you're going to watch the Super Bowl. I'm just trying to make sure that I share the right tab. Super Bowl ad targeting kind of ChatGPT's ad deal or ad plans or whatever to basically say, like, ads are coming to AI, but they're not coming to Claude.

00:38:39:00 - 00:38:40:19

Speaker 1

Let's see if I can show this here.

00:38:40:20 - 00:38:47:05

Speaker 2

I'm going to tell you that in an ad which is wonderfully ironic.

00:38:47:08 - 00:38:51:13

Speaker 1

Let's see here. Oh, that quickly. There we go.

00:38:51:15 - 00:39:08:20

Speaker 3

Perfect. That is a clear and achievable goal. But confidence isn't just built in the gym. Tricep boost Max. The insoles that add one vertical inch of height and help short kings stand tall. Use code height maxing ten for big discounts.

00:39:08:22 - 00:39:13:26

Speaker 2

As ads are coming. Yeah, so what's the difference between mad?

00:39:13:29 - 00:39:29:27

Speaker 1

Okay, what's interesting about this is it's the guy doing the pull-up. Do you remember the OpenAI ad from a number of months ago? Maybe it was last year's Super Bowl where OpenAI had their own Super Bowl ads, where it was a person doing one thing, and then it kind of showed the stream of.

00:39:29:27 - 00:39:30:28

Speaker 2

Right, right, right.

00:39:30:28 - 00:39:51:04

Speaker 1

And I think one of those videos was somebody doing like pull ups and like trying to get like fitness help or something. And so I think this is also a call that I'm also trying to figure out as I look at this and the guy who is the ChatGPT or whatever the ad kind of like is, is he AI generated like the.

00:39:51:06 - 00:39:52:23

Speaker 2

Yeah.

00:39:52:25 - 00:39:56:04

Speaker 1

I can't quite tell the kind of it kind of looks that way.

00:39:56:07 - 00:40:00:12

Speaker 2

Anyways, I should I should have gotten our friend to play it.

00:40:00:14 - 00:40:05:07

Speaker 1

Yes. I wouldn't be surprised. That dude's all over the map. It seems like right now he's doing.

00:40:05:08 - 00:40:05:24

Speaker 2

Yeah, let's do it.

00:40:05:24 - 00:40:16:13

Speaker 1

All right. With the modeling gig. Go ahead. What do you think? Smart move on Anthropic's part to say, hey, we aren't doing ads, and we're going to smear your face in it, ChatGPT. Yep.

00:40:16:13 - 00:40:37:28

Speaker 2

Yeah. I think, well, I think this is where, I mean, I'm always of two minds about Anthropic because on the one hand, I worry that they're in that kind of wacky zone of defining safety. They're the Mr. Zone, and we could destroy the world, but trust us, we won't. I get hinky about that.

00:40:38:00 - 00:40:49:09

Speaker 2

On the other hand, I honestly believe they are trying to be the company that does the right thing, and to make that its reputation. And so.

00:40:49:11 - 00:40:49:29

Speaker 1

That. Yes.

00:40:50:00 - 00:41:12:05

Speaker 2

Oh yeah. Good. Now the next question is, okay, Anthropic, if you're not going to be selling ads, cool. What is your business model in the long run? Are you sure you really want to cut off that stream right now? How confident are you in the model that you have?

00:41:12:07 - 00:41:25:02

Speaker 1

Yeah. I mean, is this maybe what they're saying is we aren't planning on putting ads or we aren't going to put ads into the chat itself. Could those ads sit outside of the chat bot? No.

00:41:25:05 - 00:41:47:20

Speaker 2

Yes. You know, let's remember that when Google started, the founders did not want to have ads. Full stop. They hated the idea of ads. But they did it. And the way they rationalized it was to say, well, the ads aren't part. We're not selling the core of our feed. We're selling to the side, relevancy to the side.

00:41:47:23 - 00:41:54:03

Speaker 2

So yes, Anthropic could find a similar face-saving and business-saving model.

00:41:54:05 - 00:42:06:11

Speaker 1

Yeah. I mean, it's it's it can be a risk coming out so strong in advance and saying, we'll never do that. And I don't even know if they're saying we'll never do that. They're just kind of saying, we're not doing that right now.

00:42:06:14 - 00:42:10:05

Speaker 2

I don't know. It's also, kick OpenAI while it's stumbling.

00:42:10:07 - 00:42:21:17

Speaker 1

Yeah, yeah. That's true. Yeah, that's really true. Anyways, I don't know if that's the only ad that they'll have on the Super Bowl, but that's certainly coming up.

00:42:21:17 - 00:42:23:19

Speaker 2

Probably all they can afford. Yeah.

00:42:23:21 - 00:42:32:12

Speaker 1

Yeah. Oh yeah. They're they're hurting right now. I don't know how much the Super Bowl ads are. I wonder if they're as expensive as they always were. If they. Oh yeah.

00:42:32:15 - 00:42:33:26

Speaker 2

At all. Oh yes.

00:42:33:28 - 00:42:34:23

Speaker 1

Yeah.

00:42:34:26 - 00:42:39:19

Speaker 2

The Super Bowl is the last is the last and only mass media event.

00:42:39:22 - 00:42:40:29

Speaker 1

Yeah that's true.

00:42:40:29 - 00:42:52:26

Speaker 2

Right. Because a substantial proportion of the population, and the fact, if you buy an ad, well, you don't just get that, you get what we just did. Yep. You get the free publicity out of all the people talking about it. Yep.

00:42:52:29 - 00:42:55:29

Speaker 1

That you get the free show and the post show and everything.

00:42:56:02 - 00:42:57:14

Speaker 2

You make a statement.

00:42:57:16 - 00:43:27:02

Speaker 1

Yeah. True. You're also saying, hey, we've got money, enough money to buy a Super Bowl ad, you know, so you make a statement about that, too. Let's see here, a couple stories about how AI inside of search and browsers is, you know, kind of a love it or hate it sort of thing. Firefox has included or is including, I think it's Firefox 148, due out on February 24th, is the date that they share.

00:43:27:02 - 00:43:50:17

Speaker 1

It's in 20 days or so. A switch, a kill switch for AI features inside the browser. Mozilla CEO Anthony Enzor-DeMeo teased this kill switch feature last December. That was in response to Firefox users who weren't happy with the AI direction of browsers and potentially of Firefox's AI implementations and stuff.

00:43:50:17 - 00:44:13:03

Speaker 1

And so Mozilla and Firefox are leaning into giving users the control over this. It's not just all or nothing. It is going to be done in a way that users can say, like, you know what, I'm cool with this AI integration, but not with this one. You know, maybe I'm okay with the built in chat bot, but I don't want translation or I don't want link preview summaries or whatever that combination is.

00:44:13:03 - 00:44:24:10

Speaker 1

Apparently they're going to give users the ability to decide which of those are disabled or enabled or what, or just turn it all off. I think that's great. User choice.

00:44:24:10 - 00:44:31:03

Speaker 2

Love it. I think it's fun. The thing is, I think that that, we all use more AI than we know.

00:44:31:05 - 00:44:31:27

Speaker 1

That's true.

00:44:32:03 - 00:44:47:09

Speaker 2

So what does it mean to turn it off? What does it mean to say. Well, I don't want that. Now, I can give you maps, but, it's not going to include directions, so it's not going to include traffic and it's not going to include all these other touches. I.

00:44:47:11 - 00:45:05:06

Speaker 1

Yeah. How is AI defined? Right. I'm hearing that more and more right now, which is like, can we just stop calling everything AI? Can we just say, like, this is a system that, you know, with an algorithm or however you want to word it. But, you know, it's so easy to fall back on the AI thing.

00:45:05:06 - 00:45:21:20

Speaker 1

And then when it comes to like disabling AI, what do you really mean when you say that? How how what are you left with? If you truly and completely remove everything AI from everything that you're using? I would I would argue there would be a lot of unintended consequences if you actually did that.

00:45:21:23 - 00:45:24:00

Speaker 2

Welcome to 1993.

00:45:24:03 - 00:45:52:19

Speaker 1

Yeah, yeah. DuckDuckGo also, by the way, doing some work around kind of looking into how many of its users really want AI in its product. DuckDuckGo is a security focused or privacy focused browser, I should say. Also, you know, a browser slash search engine. So I think maybe they started as a search engine. They also have a browser that you can use that that relies on its search engine.

00:45:52:21 - 00:46:23:17

Speaker 1

They created two versions of their search engine and you can go to them. You can either go to, what is it, noai.duckduckgo.com or yesai.duckduckgo.com, I can't remember, but 90% of those users chose the one without, out of 175,000 votes submitted in their test to see, like, how many of our users actually want AI integrated into our search product versus not.

00:46:23:17 - 00:46:27:10

Speaker 1

And they're saying 90% of the users chose no AI.

00:46:27:13 - 00:46:35:01

Speaker 2

So I'm going to piss off some of our listeners. But forgive me, folks, DuckDuckGo people, because they are

00:46:35:03 - 00:46:36:03

Speaker 1

Like so.

00:46:36:05 - 00:46:59:02

Speaker 2

Like useless. It's like digital veganism. I'm pure as the driven snow. Oh, no. I would never let that touch my lips or my eyes. No, that's evil and wrong. And so their stance is obvious. As an old TV critic, it reminds me of those people who said, well, I don't own a TV. And so I was sick of the well, how the hell do you know who Vanna White is?

00:46:59:05 - 00:47:19:15

Speaker 2

Or I don't own a TV. Or, I do own a TV, but I only watch PBS. I only watch Masterpiece Theater, back in the day. Right. Now, if you see it on TV, it means you're young. But. Yeah. Right. Because they meant you were a snob. And so there's a certain snobbery to this. Like, I'm above it. AI is bad, AI is evil.

00:47:19:18 - 00:47:32:24

Speaker 2

And, you know, I'm thinking of what you said just about three minutes ago, Jason. I think we're seeing the shift from "algorithms are evil" to "AI is evil." It's a lot simpler to say, it's a lot clearer. Oh, yeah. So it's the same basic message?

00:47:32:25 - 00:47:33:24

Speaker 1

Absolutely.

00:47:33:26 - 00:47:42:05

Speaker 2

Right. We have no control. Does they have control? I would all eliminate those. But no you're not going to.

00:47:42:07 - 00:48:05:13

Speaker 1

Yeah I think you're absolutely right. I think that's exactly what we're, we're looking at right now. I hear it so, so much time and time again lately. Yeah. Toggling this off by the way, removes like, AI generated images, from the results, I think, search assistance, AI summaries of queries, that sort of stuff.

00:48:05:13 - 00:48:11:07

Speaker 1

So, that is hilarious. Comparing it to, like digital veganism. I have not heard that one before.

00:48:11:07 - 00:48:12:04

Speaker 2

And I can.

00:48:12:04 - 00:48:28:27

Speaker 1

Remember that one. That's good. Google rolling out Project Genie. I saw this and immediately thought of, of Yann LeCun, of course. And, why not be. Yeah. Equals about world models.

00:48:28:29 - 00:48:30:04

Speaker 2

No.

00:48:30:06 - 00:48:34:11

Speaker 1

I really want to play around with it, but I but I'm not allowed in even though my job, even.

00:48:34:11 - 00:48:36:02

Speaker 2

Though you have a special access.

00:48:36:05 - 00:48:54:22

Speaker 1

I well, I have the ultra plan, which is I thought the, the entry point, the requirement. Project Genie is rolling out for its Gemini Ultra users. But when I went there to try and play around with it, it said my account doesn't work with that. So maybe it's a certain type of ultra account. I don't know.

00:48:54:24 - 00:49:20:23

Speaker 1

But this is a world model sandbox. It lets users generate pretty simple interactive 3D worlds. You can use text, you can use image prompts. 60-second experiences. So this isn't creating like a big world that you can navigate through forever and ever. It creates these short, little bite sized kind of experiences. You know, you can imagine that this is like the starting point and that gets developed out further.

00:49:20:23 - 00:49:48:19

Speaker 1

720p resolution, 24 frames per second. You can move through the world using the WASD keys. And yeah, that's pretty neat. Google created some sample worlds, but this is really mostly about users just kind of going in there and saying, I want to create this thing or this image, or maybe you're creating a script, a movie script, you want to test out a scene or something, you know, maybe you could use this for that, I don't know, what do you think?

00:49:48:20 - 00:50:06:22

Speaker 1

Like, when we talk about world models, I'm so used to thinking about Yann LeCun and others who are really kind of seeing that as the next kind of, I don't know, next place that's worth exploring here. How does this get us closer to that?

00:50:06:24 - 00:50:29:24

Speaker 2

Well, I think when Yann LeCun and Jensen Huang talk about world models, their starting point is the real world that you and I, as humans, know and understand and intuit. We understand what's going to happen in cases. Right. We see the dog going through a field of flowers. If it went through a field of ripe dandelions, we would see dandelions going off, because we understand that's how the world works.

00:50:29:27 - 00:50:54:05

Speaker 2

We have experience of that. Then tools like this are a way to then test that against our intuition of how the world operates. And this goes to Jensen Huang talking often about digital twins, that you want to put a car in all kinds of different situations. You have the car, you have the data, you have the street, you have everything else.

00:50:54:11 - 00:51:10:02

Speaker 2

Now let's think of snow. Now let's throw a baby carriage in front of it. Now throw a ball in front of it. Right. And so I think it's that combination, that interaction of the two. And I don't think this Genie is really headed there. This is more of a demonstration toy.

00:51:10:02 - 00:51:11:12

Speaker 1

Oh yeah.

00:51:11:15 - 00:51:19:07

Speaker 2

See which will but I think that, this is research that ties the two together.

00:51:19:10 - 00:51:43:22

Speaker 1

Yeah. Yeah, very, very interesting, though, and really compelling to, like, watch the some of the experiences in the promo video. You know, I'm not as much a gamer these days. I know I've mentioned that many times in the show, but this has such an immediate kind of I'm sure that gamers in particular look at this and go, oh my goodness, like what?

00:51:43:23 - 00:52:08:11

Speaker 1

What does this mean for for gaming? Even though I fully realize that this isn't about gaming, this is about something much larger than just, hey, check it out. Dynamically created and generated video game. You know, I know a lot of people who are gamers have a visceral kind of reaction to that idea, saying, okay, well, it's just going to be a bunch of crap and no one wants that and everything.

00:52:08:13 - 00:52:14:20

Speaker 1

Yeah, at the same time, I look at this and I'm like, technically speaking, like this. It's fascinating that this can be done, you know?

00:52:14:26 - 00:52:31:25

Speaker 2

Well, and the other thing we've done in the gaming world, I mean, in the entertainment world, one of the promises way back when is you can make up the end of the movie. Well, people didn't want to do that because they want there to be an auteur and they want to judge that act. And again, I'm not a gamer either.

00:52:31:25 - 00:53:00:29

Speaker 2

Never have been. But I think I could imagine where you get a game where you can, through this technology, adapt it to your desires. Right. So instead of a dog, you want a cat. You want a different mode in the presentation, so that maybe the underlying game engine is the same in terms of what happens, but you have some customizable authority over it, which I think would be interesting for gamers.

00:53:00:29 - 00:53:02:04

Speaker 2

Right.

00:53:02:07 - 00:53:25:05

Speaker 1

Yeah. Oh, absolutely. I have to imagine that. And The Verge I didn't link to it here, but The Verge, you know also wrote about this. And they want one of the things I find interesting here is how it sounded like it was pretty easy for them to replicate familiar IP like Zelda, like Super Mario Brothers. Google says it was trained on publicly available data.

00:53:25:07 - 00:53:49:11

Speaker 1

But yeah, people are going to want to go in there and they're going to want to remix it in the same way this is happening in the music industry right now. Right? People, you know, the, the, the music labels are talking about. Okay, well, generate a generative AI if we get the artists on board or whatever. Maybe there's a future where you can go on there and you can, you know, have have Jay-Z, you know, do a song that he never actually recorded with his likeness.

00:53:49:11 - 00:54:01:14

Speaker 1

And everything, you know, as a super fan or whatever. And I kind of see the same thing here, like, you know, someone, someone going there with their favorite IP and creating a new world that they can that they can actually play, you know?

00:54:01:17 - 00:54:20:04

Speaker 2

Yeah. And again, I as I've talked about often on the show, I created a syllabus for, for a course in AI and creativity. And I think to be able to put these tools in the hands of young people to create what's in their mind, that's what worries me. That's what really interesting.

00:54:20:07 - 00:54:31:19

Speaker 1

Yeah, I agree. Yeah. It opens up a whole lot of, of, of avenues, that didn't exist before. Very interesting stuff. Rabbit.

00:54:31:21 - 00:54:34:22

Speaker 2

If you remember. Oh, rabbit. Rabbit. Oh, and.

00:54:34:24 - 00:54:59:27

Speaker 1

Again, although they don't really have a product that they're selling yet, but it sounds like they're working on one called Project Cyberdeck. It's a clamshell. If you're watching the video version, this is the promotional kind of image that they released. It's a clamshell style, keyboard driven device. Obviously there's a display at the top, a keyboard down at the bottom.

00:54:59:29 - 00:55:20:19

Speaker 1

You don't really get a whole lot from this promotional image, just little hints. They call it, in this image, a dedicated vibe coding machine. And the news on this is that it leans on native AI agents, along with tools like Claude Code for command line workflows. And, you know, Rabbit says it has a really great screen.

00:55:20:21 - 00:55:58:11

Speaker 1

The keys are hot swappable. So mechanical keyboard fans are probably really happy about that. But what strikes me about this is the thing that seemed to not work for Rabbit the first time. A, they made promises on their hardware that the hardware could not deliver on initially. So that sunk it out of the gate. But B, because they were early, they released an AI hardware device that did things that we all have the ability to do, for the most part, with things we already have, our smartphone, our computer, and this kind of seems like the same thing.

00:55:58:11 - 00:56:07:12

Speaker 1

Why would I need a separate hardware device? Because yeah, I have a computer and I have a phone, so it seems to fall into a similar bucket to me.

00:56:07:14 - 00:56:17:11

Speaker 2

Yeah, I think I think the urgent desire to marry hardware to AI, is inexplicable to date.

00:56:17:13 - 00:56:32:29

Speaker 1

So far, it has not been proven in my mind. No, to be necessary at all. So. And I'm waiting to be proven. I feel like there's going to be something where I go, okay, but maybe there won't be. Maybe it's like, well, we already have the device to do it. You know? It's our smartphone, right?

00:56:33:01 - 00:56:57:17

Speaker 2

I mean, I get a blind person walking through a strange environment. Got it. Problem, solution. Amazing things can happen, right? It's possible, but general purpose AI hardware, something will come and I'll be surprised. Right? I didn't put it back on, but, I sure I'll see you. Yeah. Rabbit's trying, God bless 'em.

00:56:57:19 - 00:56:58:23

Speaker 1

Yeah.

00:56:58:25 - 00:57:03:10

Speaker 2

Yeah. How much desperate they still have after the debacle of their prior device.

00:57:03:13 - 00:57:21:10

Speaker 1

Yeah, that's a good question. I don't know the answer to that one. I do know that over the last year, a year and a half, they've definitely rolled out a lot of updates to the R1. We talked about it on the show, to kind of bring it into a more, you know, parity kind of position of what they promised.

00:57:21:10 - 00:57:41:06

Speaker 1

Initially. They've done some interesting things, but man, it is so hard to overcome, you know, the spectacular out of the gate kind of fail that it was initially. How do you do that? I don't know if Project Cyberdeck is going to do that, but I could be surprised. You know, it could happen. Yeah, it could happen.

00:57:41:09 - 00:58:05:26

Speaker 1

Hey, I launched a new thing, and so I'm just going to, yeah, take advantage of the fact that I have a podcast to announce it real quick. Not spend much time on it, but I've been doing podcasting for 20 years, and I've now decided, like, hey, I want to help people who want to get into podcasting or I want to help, you know, companies who have a podcast and you're trying to figure out how you strategize on it, how you increase your listenership, how you do all the things.

00:58:05:28 - 00:58:29:13

Speaker 1

I'm an information resource. So I created a site, it's called podtuneup.com. Go there and, hey, it exists. I'm here to help. And if you're interested, you know, go check it out. I'd love to hear from you. Yeah, it's interesting launching this because I was telling you in the pre-show, everything that I've done the last couple of years has been create content, create this, create that.

00:58:29:16 - 00:58:43:24

Speaker 1

And now I've created this. And this is definitely like a sales thing. And I have not done sales in a very long time. And so it was only once I launched it that I was like, oh, wait a minute, now I'm in sales. Now I have to like, market myself.

00:58:43:24 - 00:58:55:29

Speaker 2

And that, hey, so what? I said, how long, Jason, how long do you and I go back? Do you have any idea? Well, what is, I mean.

00:58:56:01 - 00:59:05:18

Speaker 1

You know, probably 20. I mean, when's the first time that you and I actually did TWiG together? But I was producing, you know, off and on, probably at least 11 years would be my.

00:59:05:18 - 00:59:40:19

Speaker 2

Yeah, exactly. Yeah. So I know Jason well, I've worked with Jason over time, and I cannot more heartily endorse getting a hold of Jason for this, because Jason produces stellar podcasts. Present company excepted. He understands how the process works. He understands what makes the quality of the podcast. He understands the process of getting guests and interviews, of production, of audience, of promotion, all of that.

00:59:40:21 - 01:00:01:29

Speaker 2

And so if you're a company, there's so many companies that are creating podcasts and trying to figure out what their online content strategy is. But I think that I push Jason on here, too. It's not just the podcast, it's a podcast. If you go to Jason on TikTok, you see a whole bunch of the learnings. I was in the podcast that are shared.

01:00:01:29 - 01:00:24:16

Speaker 2

They're not as purely promotional things, but as more content online. So if you're a company wanting to do podcasting and the related activities, call Jason. If you're somebody who already has a podcast and you're not really satisfied with the structure of it, the quality of it, call Jason. If you're somebody who's thinking about starting the podcast, you want to get serious about it, call Jason.

01:00:24:24 - 01:00:28:16

Speaker 2

In any case, call Jason.

01:00:28:19 - 01:00:35:25

Speaker 1

I need you, God, I need to hire you to do all of my reads for me because that was awesome. Thank you, Jeff.

01:00:35:27 - 01:00:37:17

Speaker 1

That was amazing.

01:00:37:17 - 01:00:39:10

Speaker 2

Jason knows his stuff.

01:00:39:13 - 01:00:49:23

Speaker 1

I appreciate that, Jeff. Thank you. Yeah, just go to podtuneup.com. I promise not to spam everyone with this, although I might mention it on every episode going. Yeah, yeah.

01:00:49:23 - 01:00:50:22

Speaker 2

You didn't quite.

01:00:50:22 - 01:01:10:21

Speaker 1

This long, but, hey, I gotta keep that information fresh, you know? So. Yeah. There you go. Thank you, Jeff, that was amazing. And, by the way, full disclosure, entirely unplanned. But that was awesome. I appreciate it, man. All right, we're going to take a quick break and then come back and hit a few speed round stories.

01:01:10:21 - 01:01:14:21

Speaker 1

And that's coming up on the other side of this break.

01:01:14:24 - 01:01:41:09

Speaker 1

Apple getting cozier and cozier with AI. In this news, Xcode is now kind of transforming into an AI-native IDE for developers. So, the kind of AI aspects that make other IDEs, like the ones from Google and stuff, more and more compelling, I'd say, for some developers. Some developers might prefer for this stuff not to be there.

01:01:41:09 - 01:02:05:26

Speaker 1

But regardless, you know, it's 2026. This is where we're at. Claude and OpenAI agents inside of the IDE, they'll be able to, you know, manage and explore those developer projects, read docs, plan work, run tests, iterate, all this stuff. It's all wired through MCP, and you get, you know, model selection, all sorts of things that developers might be interested in.

01:02:05:28 - 01:02:17:25

Speaker 1

Basically coming to Xcode to make it a little bit more of an autonomous project level coding tool. And, yeah, Apple getting cozier. This is just one more example of it, I guess.

01:02:17:27 - 01:02:31:08

Speaker 2

Yeah. Apple, interestingly, not doing it itself once again, just as it went to Google for Siri. It's, not unwisely, I think, using what's out there, letting those developers come over, and learning and seeing what their users want.

01:02:31:11 - 01:02:59:15

Speaker 1

Yeah, indeed. Google is testing an "import AI chats" feature in Gemini. We've talked a lot on this show about kind of the lock-in aspect of a lot of these chatbots and the different services, and how the more you use one service, the more that chatbot has a better understanding of you, of the things you like, the things you don't, the things you're good at, not, and everything.

01:02:59:15 - 01:03:23:04

Speaker 1

And so sometimes the output over time improves because of that understanding. And then we're kind of left in this position of okay, well I've been working with ChatGPT for a year. I want to move over to Gemini and do all my stuff over there. But does that mean I have to start over from square one? Well, Google is apparently testing a system to allow you to export your chats from the other platforms and import those into Gemini.

01:03:23:05 - 01:03:40:26

Speaker 1

I do wonder if they're kind of working on the opposite as well. Sure, you can export your chats from Gemini and go somewhere else. All leaving text and media and all that stuff intact. So that people can take that data. You know, data portability is important. And, yeah, I think that's good.

01:03:40:28 - 01:04:07:21

Speaker 2

There are all kinds of interesting angles to this one. One is the portability matters. In social network terms, we wanted to own our social graph and be able to export it. It never really became possible. You could do it with Mastodon, ActivityPub, but not elsewhere. So that's one thing that's very important, the portability.

01:04:07:23 - 01:04:40:12

Speaker 2

Two is, if you're Claude and I export all my Claude talk into Gemini, does Claude say, whoa, but half of that is ours? The responses are ours. The interaction, we're intimately entwined with. It's going to be interesting to me. And you can't copyright AI content. So I really wonder what the discussions have been among the lawyers at Google about this.

01:04:40:14 - 01:04:58:26

Speaker 2

Yeah. Because if it's not copyrightable, and if I consider it mine, to the extent that it's my interaction with the machine, can you really cut me off, Claude, from exporting that to a competitor? Yeah. Google's obviously thinking no.

01:04:58:29 - 01:05:32:12

Speaker 1

Yeah, I hadn't really thought about that. Who owns that data? That's a really good point. I guess we're doing so much with it. And how we rebroadcast it and integrate into all of our things and everything. So how is this different? You know, is it different? Right. Yeah. Interesting. I mean, and I do sometimes, if I'm working on a particular task in one model, I will just control, you know, control everything, copy and paste that into the other one and say, here's where I got over here.

01:05:32:13 - 01:05:57:02

Speaker 1

Now what I want to do is blah, blah, blah and all. So you're doing it all right. I want to go. I'm doing it already. Yeah. Interesting. Very interesting. SpaceX is folding Elon Musk's xAI and X into a combined entity. So that combined company is going to be valued somewhere in the range of $1.25 trillion.

01:05:57:02 - 01:06:21:24

Speaker 1

I think I saw. Well, yeah, I guess it's in the headline of Bloomberg. A single kind of entity, single vehicle blending Starship, Starlink, Grok AI, all the things, the social network, everything into one place. And of course, you know, the big talk on this is that this is all ahead of an expected IPO for later this year.

01:06:21:26 - 01:06:45:20

Speaker 2

He is the master of BS. And the market buys his BS. It just astounds me. What is he? He's a space-bound ISP. And a pretty good one, you know, and pretty impressive at that. He's dependent upon government contracts to send rockets to we don't know where, for no real business.

01:06:45:23 - 01:07:16:18

Speaker 2

And he's an also-ran in the AI business. Yet somehow I watched Walter Isaacson on CNBC who, having written the biography of Musk, was still extolling him: this is brilliant, amazing. Walter's eaten the pill too, but he's Musk-pilled. And then the other presumption here is that this is God knows how many orbiting data centers.

01:07:16:21 - 01:07:42:25

Speaker 2

Yeah, up there, not using water, not using space, well, land, not making noise that we care about because you can't hear it in space, and cooling things because you're in space to do so. So there's complications to that. But I think we've got to stop at some point and say, do we really want to add in a thick layer of things up there?

01:07:42:27 - 01:07:46:14

Speaker 1

Oh, I think I saw somewhere to the tune of like a million or.

01:07:46:14 - 01:08:15:06

Speaker 2

Yeah. That's worrisome. Because, A, it's a finite resource. It doesn't seem finite, but it is finite. B, I don't know if we ever talked about this, but when Skylab fell, I was at the San Francisco Examiner, when we offered a $10,000 reward to the first person who came to our offices with a piece of Skylab. It's a good old-time newspaper promotion.

01:08:15:08 - 01:08:35:19

Speaker 2

Well, Skylab was supposed to fall in the ocean and it didn't. It fell over Western Australia. And the guy who came in for the 10,000 bucks brought little black carbonized pieces, but big pieces of Skylab fell over some people's farms. It was a good thing it was Western Australia. You add more of this crap up there, coming down on us.

01:08:35:21 - 01:08:49:26

Speaker 2

And there's the pollution of sending them all up there in the first place. And probably some radio pollution as well. It's Musk, and I don't trust him, so I'm not happy about this.

01:08:49:29 - 01:09:04:22

Speaker 1

Yeah, it is interesting. Yeah. The space junk aspects of this. Because nothing is forever. But yet would there be any plan to remove a million-plus pieces?

01:09:04:23 - 01:09:26:16

Speaker 2

But yeah. So there's no mechanism to do so. If you had a satellite catcher that could go up and bring back an out-of-business satellite, that'd be better. Yeah. So these things are traveling at huge speed. The cost of setting up that mission, the cost of trying to dock with it, necessary to grab it without causing.

01:09:26:18 - 01:09:36:05

Speaker 2