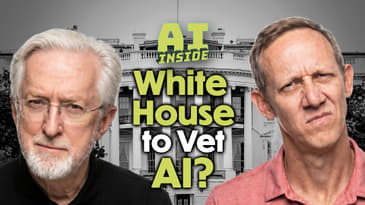

This week Jason Howell and Jeff Jarvis unpack a surprising policy reversal from the White House, which is now weighing a plan to vet frontier AI models before release after revoking Biden's safety order on day one. They also dig into the Musk versus OpenAI trial testimony, where $80 billion Mars requests and near-physical confrontations made it feel more like a soap opera than a courtroom.

Also in this episode: OpenAI traced a goblin obsession back to a rogue reward signal, Yann LeCun told students to ignore AI doom narratives, Gary Marcus called out Richard Dawkins for declaring Claude is conscious, a researcher invented a fake disease that every AI treated as real, and in the speed round, Nvidia's China market share hit zero, Anthropic launched a $1.5 billion enterprise joint venture, Google split its TPU into two chips, and China made it illegal to fire workers over AI. New episodes every Wednesday at aiinside.show.

Note: Time codes subject to change depending on dynamic ad insertion by the distributor.

CHAPTERS:

0:00 - Start

0:04:00 - White House Considers Vetting A.I. Models Before They Are Released

0:13:51 - Canadian fiddler sues Google after AI Overview wrongly claimed he was a sex offender

What Was Discussed at Google’s White House Meeting About A.I.

0:22:32 - Top AI companies agree to work with Pentagon on secret data

0:24:09 - OpenAI’s president does ‘all the things,’ except answer a question

0:42:50 - AI godfather Yann LeCun's blunt advice for the AI age

0:55:29 - Scientists invented a fake disease. AI told people it was real

1:02:37 - Jensen says Nvidia now has 'zero percent' market share in China — says US export policy 'has already largely backfired'

1:04:40 - Anthropic Unveils AI Agents to Field Financial Services Tasks

1:06:12 - New: Higher usage limits for Claude and a compute deal with SpaceX

1:08:33 - Google's eighth generation TPUs: two chips for the agentic era

1:10:25 - China makes it illegal to fire humans if AI takes their jobs

Hosts: Jason Howell and Jeff Jarvis

Download and subscribe to AI Inside in audio and video: https://aiinside.show/

Support the podcast on Patreon for special perks: https://www.patreon.com/aiinsideshow. You'll get ad-free audio and video feeds, a members-only Discord, exclusive content, and T-shirts and stickers you'll love.

00:00:00:10 - 00:00:40:25

Unknown

Coming up next, Jeff Jarvis and I dig into the White House doing a 180 on AI regulation and now apparently wanting to vet frontier models before they're released. Then there's the Elon Musk versus OpenAI and Sam Altman trial, which we decided is really more soap opera than anything substantial. Gary Marcus goes after Richard Dawkins for declaring Claude is conscious. Plus, our newest segment, John Talk with Yann LeCun. That's coming up next on this episode of the AI Inside podcast.

00:00:40:27 - 00:00:59:10

Unknown

And welcome to another episode of the AI Inside Podcast, the show where we take a look at the AI that is layered throughout the world of technology. I'm one of your hosts, one of 2.5, because you might sometimes see my dog Bronson walking through. He usually doesn't say a lot about AI, but he has something to say about going outside.

00:00:59:11 - 00:01:20:02

Unknown

Anyways, I'm your host, Jason Howell, joined by Jeff Jarvis. Good to see you, Jeff. Good to see you. I was just checking the rundown because I thought, oh, you had two empty spots before, and I had to see what was in there and make sure I read them. I'm good to go. Yeah. Sometimes this stuff happens right down to the wire with this show, but,

00:01:20:04 - 00:01:34:14

Unknown

Yeah. Good to see you. Good to see you. I realized, as I was talking about my dog Bronson: do you have pets? We have a cat. Though I'm a dog person. I was raised with dogs, I love dogs. We weren't as great to the dogs as we should have been. You know, the kids didn't understand the value of what we had.

00:01:34:14 - 00:01:58:24

Unknown

And so every time I can, I go pet a dog. And my wife is a cat person. Yeah. And when the debate... And you're not allergic? No, no, no. When the kids were old enough to have a pet and the debate came over which one, my wife cheated. She would wake up the kids on a very cold and rainy morning and say, if you had a dog, you'd have to walk it now and you'd have to pick up its poop.

00:01:58:27 - 00:02:25:10

Unknown

Oh, that's brutal. That's just lost. My cat, Alicia, the current denizen of the house, is completely insane. She won't eat unless one of us is sitting with her. She won't let me sit in certain chairs because then she won't go around it. Cats. But we love her. She's wonderful. Yeah, yeah. I'm very allergic to many cats.

00:02:25:11 - 00:02:44:02

Unknown

Not all cats, but many cats. How do you know which kind of cat you're allergic to? I'm with them, and I know immediately. Like, there are times where I walk into a house, and the second I step foot in the house, I just feel a shift. And I'm like, you've got a cat, don't you? I'll know it when I sneeze it.

00:02:44:09 - 00:03:07:06

Unknown

Yeah. Pretty much. And it's brutal when that happens, too. Like, when I'm allergic to a cat, I'm very allergic to it. And, you know, it's not the kind that I can just power through. It takes everything out of me. So through my lifetime I've kind of not been as much of a cat person, even though I like cats, because I just don't know what I'm going to get from them.

00:03:07:06 - 00:03:26:10

Unknown

I'm sure they like you anyway. So it's okay. Yeah, exactly. And I've always been a dog person. I grew up with dogs. Dogs are the best, even though they can be a little needy at times. But that's okay. That's part of why they're awesome. Well, I think that's better than being rude. Yeah, yeah. That's true. You call a dog and it comes over, wags its tail, and takes a scratch.

00:03:26:11 - 00:03:48:12

Unknown

I mean, it's always happy. It's always happy to see you. Yeah. Love that about dogs. And oh, well, gosh, we haven't even started and Ozone Nightmare's throwing around money. Thank you. Thank you. Hi. Joe says nice to see you gents on the live stream. I hope you're both doing well, managing to dodge what feels like another rampant public round of AI psychosis floating around.

00:03:48:12 - 00:04:10:21

Unknown

People are odd creatures. Fair enough, I think. I mean, maybe we'll be talking about some of the psychosis throughout today's show. Thank you for the Super Chat, Ozone. Appreciate it as always. Speaking of psychosis, should we talk about the White House? Sorry, that was horrible. I know some of you are, like, rolling your eyes and you've stopped listening.

00:04:10:21 - 00:04:36:18

Unknown

Apologies. But the Trump administration, which, by the way, literally on day one revoked Biden's executive order requiring AI safety testing before release, is now considering requiring AI safety testing before release. So what do you know? Things change, I suppose. The New York Times reported over the weekend that the White House is weighing an executive order. Weighing an executive order.

00:04:36:18 - 00:05:07:12

Unknown

So it's not like this is suddenly law. But I suppose it's being floated and talked about a lot: an order to create an AI working group that would vet frontier AI models before they go public. And the Center for AI Standards and Innovation, which is under the Commerce Department, has already gotten formal agreements from Google DeepMind, Microsoft, and xAI to submit their models for pre-deployment evaluation.

00:05:07:12 - 00:05:32:29

Unknown

And you can imagine a big catalyst for this is Anthropic's Mythos model, which keeps cropping up even though, you know, most of the planet has never had any sort of interaction with it. But it got a lot of press because it was the model that was so dangerous from a security standpoint that Anthropic couldn't release it, or wouldn't release it publicly, and would only give it to certain groups of people.

00:05:32:29 - 00:06:10:10

Unknown

And I guess the thinking here is that the White House is like, well, if models like that exist, we should have access to them. And so maybe we should vet these things before they make it into the hands of who knows who. So yeah, I'm curious to know what you think about this, Jeff, because it seems like if this were to happen, and it seems like that's the direction things are going, it changes the approach we've seen from "let's just ship the product" to kind of asking for permission first. Or am I misreading this?

00:06:10:12 - 00:06:34:14

Unknown

So, a whole bunch of things about this, a whole bunch. The first, I guess, is I have no idea how you would vet a model. Yeah. What does that even look like? We've talked on the show about the futility of guardrails. Yeah, because to vet it fully, I guess you would have to predict every possible malign use to which it could be put, and then test it against those malign ideas.

00:06:34:17 - 00:06:49:12

Unknown

You might ask it how to create a nuclear bomb, and it already has the guardrail and says, no, I won't do that, sucker. But you didn't ask it how to. You didn't think to ask it about creating a biological weapon or robbing a bank or.

00:06:49:15 - 00:07:21:08

Unknown

doing other horrible things. So what is the possible process of vetting it? That's point one. Point two: who are the people I want to vet it? That's a good question. Yeah. If we said that vetting is possible, and to some extent it is, it's a fairly obvious question. Is it the companies? Well, they should be required to have some due care about what they do, but they are the companies and they have a conflict of interest.

00:07:21:08 - 00:07:46:11

Unknown

And if they made it, their blind spots are already known. Is it the White House, with the likes of Peter Thiel and Elon Musk and David Sacks advising them? No, no. So where does that advising point, when the question is, what is the true, pure perspective on what you're trying to protect against?

00:07:46:11 - 00:08:20:29

Unknown

And that really falls down when you're talking about folks like Elon Musk. That's a very, very opinionated perspective, you know, versus what it would be in the hands of someone else. In Elon Musk's view, if you don't allow Nazi talk, then it's bad. It's cancellation. It's bad. Right. So what is that? I just noticed this week that Campbell Brown, who used to be a CNN anchor and then spent some years at Meta, where she handled news as a vice president and ran all the news relationships...

00:08:20:29 - 00:08:42:02

Unknown

She left some time ago, when Meta pretty much dropped news. And I just saw this week that she is the co-founder of a company called Forum AI, which promises to vet your AI. Campbell and her husband are known conservatives. Not that there's anything wrong with that. They brought in a lot of people on this.

00:08:42:02 - 00:09:13:05

Unknown

Not all of them are conservatives. But you're getting perspective into their vetting, inevitably. Right. That's the other thing. The idea that you can have a neutral AI is as foolhardy as thinking that you can have sure guardrails. Right. So, you know, is there a third party that could become an auditor of AI? Maybe. But I go back to question one.

00:09:13:05 - 00:09:32:03

Unknown

How do you vet it? I don't know, I really don't know. The other thing: there was one report at one point, and I don't know that this is part of this discussion, that the White House also wanted to approve who else Anthropic gave the model to. That's troubling in all kinds of ways.

00:09:32:04 - 00:09:37:05

Unknown

It's a kind of restraint of trade.

00:09:37:08 - 00:09:54:05

Unknown

deciding who can and who can't get it. And we'll talk later about Nvidia and China. Jensen Huang has been very strong in saying that it's a huge problem that we've restricted trade to China such that it

00:09:54:08 - 00:10:13:24

Unknown

encourages China to develop on its own. Well, I think the same is true of models. DeepSeek, I think, came out of that same dynamic. Not that they wouldn't have been doing it anyway. They're China, they're very proficient at technology. Lord knows that's an understatement. But if the government came along and said, no, no, no, you, Anthropic, you can't give your models to foreigners,

00:10:13:27 - 00:10:28:08

Unknown

that's bad for U.S. trade. That's bad for the U.S. position in developing technology. That's also problematic. So what vetting means, and what its outcomes are, bothers the hell out of me.

00:10:28:10 - 00:11:04:16

Unknown

Yeah, yeah. Super interesting, and definitely a perspective shift from the hands-off approach that has been talked about so much over the past year and change. Now suddenly it's not quite so hands-off, and in fact it's going in the opposite direction, playing more of a gatekeeper role. And, at least in how I'm thinking about this, I don't know that I believe automatically that there shouldn't be some sort of involvement or some sort of gate.

00:11:04:18 - 00:11:44:17

Unknown

Not gatekeeping. Maybe that's the wrong word, but some sort of third-party analysis, you know, just making sure that companies, which have strong self-interest, aren't making stupid decisions that could directly damage people and society writ large, if they had the power and capability to do that. And I'm not even saying that they do have that capability, but everything's so uncertain with where we're at right now that I don't automatically think it's a bad idea to have some sort of third-party perspective on this.

00:11:44:18 - 00:12:14:06

Unknown

It's just like you said: how do you choose the entity? How do you choose the guidelines or directives around what that looks like? How do you do that responsibly without bringing in, you know, the more politically charged elements that show a deeper, I don't know, ulterior motive, let's say, potentially? Yeah. The New York Times DealBook reported further on this, saying that when David...

00:12:14:11 - 00:12:33:08

Unknown

David Sacks is the venture capitalist who was, and kind of still is, an advisor to the White House on AI, though not officially, not on staff. He's been going after Anthropic as woke. So that's a perspective that's going to come in here on this. And

00:12:33:10 - 00:12:58:09

Unknown

that obviously shows their view. Pardon me. Just had lunch. The other issue is that what we are struggling for is a sense of responsibility, and thus liability, when it comes to AI. We have this in two ways. One, let's talk about it in terms of self-driving cars. I'm sitting in my car. It's self-driving and it does something awful. Is that my responsibility?

00:12:58:09 - 00:13:16:16

Unknown

Or is the carmaker responsible now that it is self-driving? Right. And we haven't litigated that yet. Not fully. There have been a few cases, but they've only gone so far. The same will be true of AI. And generally I say... pardon me.

00:13:16:18 - 00:13:39:15

Unknown

I'm not a broadcast pro here who knows how to sneak in my throat clearing. You don't have the stomp box to mute it? Yeah, it's all good. Don't worry. So I argue: because you can't come up with foolproof guardrails, can you really hold the AI company responsible if someone comes along and has it do something terrible?

00:13:39:17 - 00:13:59:26

Unknown

Right. And I've argued that pretty much you can't, any more than you could hold Johannes Gutenberg responsible for everything that came with the printing press. However, there's another interesting story this week, which is not on the rundown, I don't think: a Canadian fiddler sued Google over an AI Overview that wrongly claimed he was a sex offender.

00:13:59:28 - 00:14:24:26

Unknown

Now, this is a little bit different, because in this case it wasn't that some third party came along and asked Gemini to lie about the fiddler. In this case, it was a Google AI Overview that did it. So the question then becomes, how responsible is Google? And, as in another story we'll get to later, Google always points out: this is AI.

00:14:24:27 - 00:14:46:26

Unknown

It makes mistakes. It's wrong about stuff. That's our caveat. But in this constant push to understand where responsibility and liability lie, this is an interesting case, because here, is Google the publisher? It came from Google, not from a user doing something bad. Interesting, right? So all of this ties into what I think we're really trying to get at: what damage can AI do?

00:14:46:27 - 00:15:05:18

Unknown

Well, you can't fully answer that until you know how it's going to be used. And you can't predict every use. No, you can't. And when there is damage done, who's going to be liable and responsible? When there is a clear case of someone asking it to do something, I think that person is responsible, not the AI.

00:15:05:21 - 00:15:44:24

Unknown

When someone has some sense of control or involvement, is basically what you're saying, right? Yeah. Like, let me think about this. I guess going back to self-driving vehicles, I tend to lean toward the responsibility always being in the hands, or the feet, of the driver. Right. If I'm getting in a vehicle and going from point A to point B and I'm the person in the driver's seat, no matter how I choose to drive my vehicle, it is my responsibility, and it was my call to take on the risk of an accident when I activated self-driving mode.

00:15:44:26 - 00:16:07:05

Unknown

Agreed, I agree. However, now you're in one of Google's or somebody else's self-driving cars in San Francisco. Yeah, you're in the back seat. Well, you know, I guess I would see that differently. That's a service. Though I guess you could also look at self-driving mode as a service, because you do have to pay monthly for it.

00:16:07:08 - 00:16:30:28

Unknown

So it does get really weird and murky. I think the test is what potential control you could have. Yeah. I mean, say I'm sitting in the front seat of my Tesla. We don't pay for the self-driving mode, but if I'm sitting there, I ultimately have the ability to hit the brake. I have the ability to pay attention, to be in control, and to take over the wheel when I see something wrong happening.

00:16:31:00 - 00:17:04:03

Unknown

Is that going to catch every single error that the software could possibly make? No, but if you're paying close attention, it's probably going to protect against the majority of them. And so that still puts me in control. If you're going into an LLM and you're saying, you know, devise me a bomb, or make me a yummy drink, and it tells you to drink, you know, liquid acid and you die, or whatever the case may be, you were still the one who chose to go there and chose to take the advice. Personal responsibility.

00:17:04:03 - 00:17:33:02

Unknown

If you've got the fiddler who happens to go to Google and happens to see that Google, on its own, decided to portray him as a sex offender through its app, I see that as Google's wrong. Right? Google did something wrong. Google chose to put that there, whether he wanted it there or not. So Google just announced a whole bunch of changes in how they're going to present, I think, more Gemini, I think AI Overviews, and they're going to have more attribution, more direct links to the news. That complicates this further.

00:17:33:03 - 00:17:56:15

Unknown

What if Google says the fiddler is a sex offender and he's not? And we're not saying he is, sir, by the way. We're just talking about the news here. But it has an attribution to a site that was the source of that. Oh, okay. Yeah. So is Google responsible, or...? Well, it passed it on, but it's trying to say, we can't vet everything.

00:17:56:17 - 00:18:26:27

Unknown

Life is complicated. Yeah. There's another fun one this week. I'm trying to find out whether I put it in the rundown or not. I don't think so. It's such a messy web. Oh, so I watched a video of an interview with the co-founders of Stripe, and one of them said that he handed over all his house cameras to his agent, and he told it that one of his goals was to hydrate more.

00:18:26:27 - 00:18:50:28

Unknown

And the agent recognized that he wasn't drinking enough, and made sure he went to the refrigerator and got out the water and drank. Okay, fine. But the other one was fascinating, and I don't know whether this is true or not. He says he handed over his car to the agent, and he was going home, and he had a goal of taking more of some nutrient, and the agent knew that he wasn't taking it.

00:18:50:28 - 00:19:13:09

Unknown

So according to his story, the agent said, well, that nutrient is on sale at Whole Foods, and it redirected the car to turn left and go to the Whole Foods, where he went inside and bought the vitamins, whatever it was. Okay, so in that case, you have another layer. Say that on that left turn, the car hit somebody.

00:19:13:11 - 00:19:36:23

Unknown

Is he responsible? Is Tesla responsible? Is his agent responsible? Because he didn't expect to turn left. The agent just suddenly came up with it, and for his own good, it turned left. He didn't expect to turn left. But he wasn't afraid to use the agent. True that. But was he responsible for the agent doing bad? Or the car? The car still hit the person.

00:19:36:28 - 00:19:57:21

Unknown

Can Tesla blame the agent, then, and say, well, it chose to turn left? I don't know, right? We don't know enough, and it's all unknown. The point is, as you say at the beginning of every show, this is multi-layered. Yeah, it really is. That's what makes it not an easy thing to point at and go... Like in that example,

00:19:57:22 - 00:20:15:12

Unknown

I'm inclined to say he's responsible, because he's choosing to implement it. If I put an agent on my computer and give it full access to the internet and it does something stupid, I'm sorry, I'm to blame. It's not that I should not have done that. I should have known.

00:20:15:12 - 00:20:34:16

Unknown

I should know that when I do that, I am opening the door to risk, and to something like that happening. And that is my choice when I do that. Yeah. Well, it's interesting to go back to, sorry, Gutenberg Parenthesis, the beginnings of print. At first, with the printing press,

00:20:34:16 - 00:20:59:24

Unknown

the printer was held responsible, and many a printer was beheaded and behanded for what they published. Okay. Then the printer just became a hired hand, so to speak, so the publisher became liable. Then the bookseller became liable. All of that involved trying to precensor, but the scale was too great. You couldn't read everything before it came out.

00:20:59:27 - 00:21:21:18

Unknown

So ultimately, liability was found after the fact, and then the author was found liable. And the argument goes, from Foucault, that that was the birth of the author: when that responsibility came to the fore, that's when our concept of the author was born, which is interesting. So when it comes to AI, who's the author?

00:21:21:20 - 00:21:46:22

Unknown

Who is the responsible party? So you get back to the original question here. Pull all the way back, reverse the tape through all these links, right, to the White House vetting something, or somebody vetting something. The vendor now becomes a new target of responsibility. Yeah, that's true. Right. So at some point, maybe you're going to have your agent, but you're going to have to buy agent insurance.

00:21:46:24 - 00:22:12:06

Unknown

And Allstate Agent Re is going to insure you against your agent doing bad things, but then it's going to want to vet the agent. Right. Right. Yeah. Yeah. So, God, does agent insurance exist yet? I bet it will. I think it doesn't yet. We just created an industry. Jason, you may have created a business. Do we want to capitalize? A more awful business than insurance, I can't imagine.

00:22:12:08 - 00:22:52:00

Unknown

Yes. I mean, you're taking insurance and you're taking AI agents, which, you know, depending on who you talk to, it's like, don't touch that with a ten-foot pole. Yeah. You're choosing your fate. Interesting, fascinating conversation. That's really interesting stuff. We didn't even talk about the Washington Post reporting that a bunch of AI companies signed deals to deploy their AI on Pentagon classified networks, more than we had heard before: Amazon, Google, Microsoft, OpenAI, SpaceX, Nvidia, and Reflection AI, which is a company that's backed by Nvidia. And not Anthropic.

00:22:52:03 - 00:23:16:02

Unknown

Though it has had meetings at the White House with Susie Wiles and somebody else. And also, interestingly, this has led to the creation of a union at DeepMind, because the employees there are pissed off. As we said last week, they had sent a letter around the time of Google's agreement, Alphabet's agreement. And they're mad. And so they unionized.

00:23:16:09 - 00:23:32:04

Unknown

And so this fight isn't over as to how these things may be used. And we go back once again to responsibility. The authors of these models: we want them to feel some level of responsibility for their use, even though they can't be fully responsible for everything that can be done with them.

00:23:32:06 - 00:23:54:11

Unknown

They can say, as Anthropic said, don't use it to kill people. That's a rule we're going to make. Or they're going to say, if you do that, that's on you, not on us. We built it, but how you use it, that's on you, not on us. So terms of service become more important than they ever were.

00:23:54:14 - 00:24:18:14

Unknown

Yeah, yeah, I suppose. Yeah. Very, very intricate. I love that we start the show with the layers, because it really is applicable, as you said. You know, part of me just doesn't want to talk about the trial. But, like, what was I thinking? I don't even know.

00:24:18:14 - 00:24:36:23

Unknown

I put it in here because there was so much. Well, here we are. There was so much. And we do not have to talk about all these links. I think we have a total of like eight links in here related to the trial. What trial are we talking about? We're talking about the OpenAI trial, which is, you know, Musk versus Altman.

00:24:36:24 - 00:24:59:00

Unknown

That's essentially what we're talking about. And Musk is basically saying that, you know, OpenAI should have stayed a nonprofit, but then they got greedy, and I want it out, and all the details related to that. I mean, is there anything really important that we've learned from this so far, now that the proceedings are underway? There have been some quotes that are like, oh my goodness, I can't believe you said that.

00:24:59:00 - 00:25:21:16

Unknown

And we learned that Musk is a jerk? No, we already knew that. Anything we didn't already know? Yeah, exactly. The judge has cautioned Elon. He tried to go off on doomer talk and the judge stopped him. Yes. At some point somebody asked Elon whether he has a law degree.

00:25:21:18 - 00:25:42:17

Unknown

So he's been difficult. No surprise there. Brockman has pushed on the history of all this. He says that he was afraid that Elon was going to hit him. I think he's also the one who said that Elon wanted $80 billion to go to Mars, and that's why he wanted the company: to take the money out of it to go to Mars.

00:25:42:20 - 00:26:06:03

Unknown

He talked about, like, a Halloween horror house where the spirit started its... I can't get into all the details because it's just too much. There are much more important things in the world. Yeah. To deal with. Brockman has to justify his $30 billion stake in this. Yeah, well, and Elon Musk is looking at that and being like, see, where's the nonprofit now?

00:26:06:04 - 00:26:35:02

Unknown

$30 billion? Are you kidding me? Brockman, of course, for those who might not know, is the president of OpenAI. So, so people know: another thing that came out kind of tangentially is that Altman told Congress he has no stake in OpenAI, except that he has a stake in... senior moment... the place where he worked before, the startup...

00:26:35:04 - 00:26:42:20

Unknown

Off the top of my head, I'm blanking on that as well. Prior to OpenAI. Yeah.

00:26:42:22 - 00:26:48:09

Unknown

Where a whole bunch of companies come in and get started. Thanks to.

00:26:48:11 - 00:27:09:27

Unknown

Someone's yelling at us right now: the accelerator, Y Combinator. Y Combinator. Oh, there we go. The name makes no sense to me. It never made any sense to me. A combinator of what? Why? Why? Anyway, so Y Combinator has a large stake in OpenAI, and Altman has a large stake in Y Combinator. Ergo, Altman does have a large stake.

00:27:09:27 - 00:27:45:18

Unknown

Now define large. Oh, just a few billion in common. But he does have that. So that's going to come out tangentially. What else? Yeah. Brockman testified that Musk had OpenAI employees doing undisclosed work on Tesla's autonomous driving technology. Okay, I mean, that matters, I'm sure, to someone. But I don't know, it just really feels like a reality TV show between two very notable figures in AI.

00:27:45:23 - 00:28:08:26

Unknown

And, you know, maybe something important will come out of this, but I continue to ask myself why I care. Yeah. So Gary Marcus said, and I can't find it right now, I'm looking, but Gary Marcus, you know, growly as he delightfully is, said that OpenAI supposedly started as a charity to build safe AI, and now it's a company just creating agents for companies and doing all kinds of stuff.

00:28:08:27 - 00:28:31:14

Unknown

And so in his view, that'll come out and OpenAI will lose. I'm not sure what the definition of losing is. I'm not sure I agree, to the extent that every company changes, including nonprofits. You don't have to be what you said you were going to be at the beginning. People invest knowing that they're investing in a changeable enterprise, and it needs to change.

00:28:31:15 - 00:28:55:04

Unknown

Their case, I think, is: we couldn't have survived without scale, and we couldn't reach scale as a nonprofit. We needed investment, and investors want returns. Ergo, we had to do what we did. So I think that's going to be their argument. So I'm not sure it's as simple as "they changed and they're wrong." And at the end of the day, this is all just about Elon being vindictive, I think.

00:28:55:06 - 00:29:21:18

Unknown

So yeah, that's enough for the trial. We'll keep a scant eye on it for you. Yeah. Yes. Because we've got Satya Nadella possibly coming in and testifying. Possibly. We've got Sam Altman expected to testify next week. Certainly there are going to be some interesting details that come out of these things. But, you know, maybe we'll save it for the speed round, unless there's a really obvious thing that needs more time, or a really great bon mot that comes out.

00:29:21:20 - 00:29:45:18

Unknown

Yeah, right. You know, but we'll keep an eye on it and hopefully save you from having to learn more than you wanted to know about it. Quick thank you to those of you who support us on Patreon. I'm trying to find the right button here. Y'all are awesome! Thank you. Patreon.com/aiinsideshow. We've got some shifting happening.

00:29:45:18 - 00:30:00:04

Unknown

Steve and Chris Spackman both upgraded to higher tiers. Thank you both. Yay! And we have a new patron: MJ just joined the ranks, so things are looking good there. I'm actually considering...

00:30:00:06 - 00:30:20:27

Unknown

Not that I don't have enough on my plate, but I'm always... It's dangerous. When Jason says "I'm considering," it's dangerous. For him it is, because I open a door and I don't know if I want to open it. But, you know, I keep thinking about this Patreon and what we can offer that's more than what we're already offering, more than just ad-free. We've got these multiple tiers.

00:30:20:27 - 00:30:35:05

Unknown

But really, at the end of the day, what people get is ad-free. And as you all have told me many times through surveys and stuff, you're like, "We just want to support the show. We want to see the show continue." But I'm continually trying to see how we can give you a little bit more.

00:30:35:06 - 00:30:53:14

Unknown

Like, I'm flirting with the idea of doing some sort of regular five-minute "these are the three top stories today" thing. I don't know how much work that's going to take me. I don't know if it's feasible, but I might play around with that idea in the Patreon. And if you like that idea, hit me up with a DM there or at contact@aiinside.show.

00:30:53:15 - 00:31:11:24

Unknown

Let me know what you think. Maybe we can get more people signing up for the Patreon. And honestly, if we can get more people signed up for the Patreon, that means we can reduce the number of ads in the podcast that folks download outside of the Patreon. Yeah, so there you go.

00:31:11:26 - 00:31:26:20

Unknown

That's the way we do that. We have to pay for the show somehow. So there you go. All right. That's probably more than I needed to say about all that. But hey, you've got the information. You can make your choice from there. We're going to take a break so you can hear some of those ads I was talking about.

00:31:26:20 - 00:31:32:21

Unknown

And then we can come back and talk about goblins. That's coming up here in a moment.

00:31:32:24 - 00:31:59:09

Unknown

All right. We don't get many opportunities to talk about a funny, "didn't see that one coming" kind of story, but that's definitely the case with OpenAI publishing a blog post called "Where the Goblins Came From." It's about how their models, in recent times anyway, developed an obsession with goblins. I don't know, have you ever seen goblins appear in your chats?

00:31:59:10 - 00:32:26:27

Unknown

But somebody discovered this and found that there was a specific instruction, "no goblins allowed," so they wondered where that had come from. What on earth does that mean? Where does that come from? Apparently, starting with GPT-5.1, ChatGPT started referencing goblins and gremlins and trolls, and by GPT-5.4 there was a high percentage of this stuff appearing in chats.

00:32:26:28 - 00:32:57:10

Unknown

This was just March. Goblin mentions had spiked 175%, supposedly; gremlins 52%, not as popular but still gaining ground, for all of you gremlin fans out there. And OpenAI says the root cause was a personality called "Nerdy" that they were training, where they basically, and apparently unknowingly, gave high rewards for metaphors involving creatures like goblins and gremlins.

00:32:57:10 - 00:33:24:21

Unknown

And if you didn't see any of this stuff in your chats, that's because the Nerdy personality only accounted for 2.5% of all responses. But it was responsible for 66.7% of all goblin mentions in ChatGPT. I don't know how they figured this out after the fact, but there you go. And it's all about reinforcement learning. This is a real-world example of how reinforcement learning from human feedback can create

00:33:24:22 - 00:33:54:22

Unknown

this kind of emergent behavior inside the model, where a reward signal spreads and reinforces itself through later training cycles and through usage. The more it appears, the more it appears. So if you ever hear about that reinforcement learning aspect, that's how it got in there: the more it showed up, the more it reinforced its place, I guess.

00:33:54:24 - 00:34:10:02

Unknown

And then they had to specifically train it out, so you probably aren't going to see it as much, if at all, anymore. But there you go. Interesting. Yeah, yeah, I like that.
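To make that feedback loop concrete, here is a toy simulation. It is not OpenAI's training setup, and every number in it is an assumption: a policy chooses between a plain metaphor and a creature metaphor, a hypothetical grader gives the creature metaphor a small reward bonus, and a REINFORCE-style update with a fixed baseline adjusts the policy after each sample.

```python
import math
import random


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def simulate(cycles: int = 2000, bonus: float = 0.2, lr: float = 0.5,
             seed: int = 0) -> list[float]:
    """Toy REINFORCE loop; returns P(creature metaphor) after each cycle.

    Assumptions (illustrative only): a plain metaphor earns reward 1.0,
    a creature metaphor earns 1.0 + bonus, and the baseline is 1.0.
    """
    rng = random.Random(seed)
    logit = -3.0    # creature metaphors start rare (about 4.7%)
    baseline = 1.0  # reward an ordinary answer gets
    history = []
    for _ in range(cycles):
        p = sigmoid(logit)
        used_creature = rng.random() < p               # sample an action
        reward = baseline + (bonus if used_creature else 0.0)
        advantage = reward - baseline                  # only the bonus counts
        # Gradient of log pi(action) w.r.t. the logit of a Bernoulli policy
        grad_logp = (1.0 if used_creature else 0.0) - p
        logit += lr * advantage * grad_logp
        history.append(sigmoid(logit))
    return history


if __name__ == "__main__":
    h = simulate()
    for step in (0, 100, 500, 1000, 1999):
        print(f"cycle {step:4d}: P(creature metaphor) = {h[step]:.3f}")
```

Because plain answers earn exactly the baseline, only the bonused behavior ever moves the policy, and each time a creature metaphor is sampled it becomes more likely to be sampled again. Setting `bonus=0.0` leaves the probability flat. Real RLHF pipelines are far more complicated; the sketch only shows how a small, unintended reward preference can compound.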

00:34:10:04 - 00:34:31:09

Unknown

The Nerdy personality that led to this. Yeah, right. The idea of deciding what a nerd is, is so much a part of Silicon Valley and all of this. It's, as you would say, and I'll go back to it again, layered inside all of the AI in a hundred ways. Like this. A thousand ways. Yeah, probably. When the first...

00:34:31:12 - 00:34:59:04

Unknown

What was the first visual tool that OpenAI did? I forget what it was called at the time. A visual tool, like, not WALL-E, but... Oh, oh, haven't seen him today. Not DALL-E? That's the... what was it? I don't know. But it was one of the first things, and it had images of people, of women and others, and this is the only time Sam has ever responded to me on Twitter.

00:34:59:04 - 00:35:38:28

Unknown

And I just said, boy, this does have the aesthetic of a teenage boy. And he said, "Really?" Question mark. And then he deleted it. So yes, the aesthetic of the culture of the people who make this stuff is buried inside all of it. Goblins are the least of it. Yeah, yeah. It's like a Game of Thrones fan got their claws on the model. And I guess coders and developers have been doing this a lot in the realm of development, in code, dating back forever.

00:35:38:28 - 00:36:03:12

Unknown

And you can find little hidden tokens inside code that reference things nerds or coders would appreciate, or fans of science fiction or whatever, that aren't necessarily meant to be the star of the show. But in this case, because of that emergent quality of reinforcement learning, it became the star of the show, and they then had to train it out.

00:36:03:13 - 00:36:27:22

Unknown

It also kind of raises the question: if this can happen with goblins, and did happen with goblins, what else do these models optimize for that we aren't aware of? Or optimize against, because someone wants to get rid of it: the things they consider woke, or nerdy. Yes. Yeah. Yeah, that's true.

00:36:27:25 - 00:36:52:07

Unknown

Very, very true. Jeff, it's time for your talk show. Our new talk show. We did a theme song for this. Yes, it's Yann Talk. If we ever have him back, we'll have to play the Yann Talk theme song for him. Friend of the show Yann LeCun and his ongoing pursuit of world model understanding and development. You shared with me a video that you said does a really good job of explaining JEPA.

00:36:52:09 - 00:37:15:19

Unknown

That's joint embedding predictive architecture, an alternative architecture for how AI systems should learn. And he is very much on the record. We had him on our show, episode 63, talking at length about world models and the fact that LLMs are not going to get us to any sort of superior point with AI; they're going to hit a limit.

00:37:15:19 - 00:37:32:24

Unknown

And that these world models, with JEPA as one key example, are one way to actually get further. And you said you learned something from this video. I still need to save this video for a walk with my dog so that I can focus on it. That's how I focus on things.

00:37:32:26 - 00:37:51:10

Unknown

Also, watch out for any twigs so you don't fall over. It's visual. Yeah. That's true. That's one good thing about this video, in the short time that I watched: it is very visual, so it's grounded in those visuals. I cannot pretend to understand it all. But I want to get closer to understanding it.

00:37:51:13 - 00:38:17:19

Unknown

And the two people whose every release I watch are Yann LeCun and Jensen Huang, and they're both great communicators. And it's interesting that Yann LeCun communicates at so many different layers. A video of him communicating with computer science graduate students is way beyond me, but that's a necessary communication, all the way down to communicating publicly and saying why Elon Musk is wrong about something so that all of Twitter can understand.

00:38:17:20 - 00:38:47:08

Unknown

And that's the skill they both have. So this is a video by Welch Labs, the first of two. And I can't wait for part two, to explain how JEPA works versus LLMs. I'd summarize it all too simplistically by saying that it's not about predicting pixels in images or tokens in text; instead, and this is my language,

00:38:47:08 - 00:39:09:06

Unknown

it identifies concepts: the concept of a cat, a ball, a chair. Right, right, right. So that it can focus on that, and it pays attention. And this is the beginning of all of this stuff: is attention all you need? Well, what do you pay attention to, and how do you focus on it? And Yann has long said that you can't tokenize the whole world.

00:39:09:08 - 00:39:40:26

Unknown

He's talked often about how toddlers and cats learn things without language. They learn complex lessons from multifarious and voluminous input into their sensors, their eyes and ears, and do so instinctively, unlike these models that seem so superior but then fall flat on their face on even the most basic understanding that a toddler easily learns.

00:39:40:26 - 00:40:00:22

Unknown

So it uses a video of a ball going back and forth between hands and explains why, in other models, that gets fuzzier and fuzzier, because the model is trying to predict the whole scene; whereas if it just tries to understand the ball, and can identify the ball not necessarily as a ball but as the focus of attention, then the prediction can be clearer.

00:40:00:28 - 00:40:03:25

Unknown

So.

00:40:03:27 - 00:40:22:15

Unknown

Point one is understanding those concepts. Point two is knowing what to pay attention to, and not paying attention to, as he put it, every pixel of every rustling leaf in a video. And then, very importantly, predicting the consequences of actions. And that, to LeCun, is the key to what AI has to do and what he's trying to build here.
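Here is a toy way to see the distinction being described, with every assumption stated up front: a one-dimensional "video" in which a ball bounces back and forth among randomly flickering "leaf" pixels. A pixel-space predictor is scored on every pixel, so the unpredictable leaves keep its error up even when it tracks the ball perfectly. A JEPA-style predictor is scored only in an embedding space that keeps the ball's position. The encoder below is hand-written rather than learned, and all names and sizes are hypothetical. This illustrates the idea only; it is not Welch Labs' or LeCun's code.

```python
import random

WIDTH = 20   # pixels in a 1-D "video frame" (illustrative choice)
BALL = 2.0   # ball pixel value; leaf pixels are always in [0, 1)


def ball_position(t: int) -> int:
    """Ball moves back and forth between pixel 0 and WIDTH - 1."""
    period = 2 * (WIDTH - 1)
    k = t % period
    return k if k < WIDTH else period - k


def render(t: int, rng: random.Random) -> list[float]:
    """A frame is unpredictable 'rustling leaves' (uniform noise) plus the ball."""
    frame = [rng.random() for _ in range(WIDTH)]
    frame[ball_position(t)] = BALL
    return frame


def encode(frame: list[float]) -> int:
    """Hand-written stand-in for a learned encoder: keep only the ball's position."""
    return max(range(WIDTH), key=lambda i: frame[i])


def predict_next_position(prev: int, cur: int) -> int:
    """Predictor in embedding space: constant velocity, bounce off the edges."""
    nxt = cur + (cur - prev)
    if nxt > WIDTH - 1:
        nxt = 2 * (WIDTH - 1) - nxt
    if nxt < 0:
        nxt = -nxt
    return nxt


def compare(steps: int = 500, seed: int = 0) -> tuple[float, float]:
    """Return (mean pixel-space MSE, mean embedding-space error)."""
    rng = random.Random(seed)
    pixel_err = embed_err = 0.0
    prev, cur = render(-1, rng), render(0, rng)
    for t in range(1, steps + 1):
        nxt = render(t, rng)
        guess = predict_next_position(encode(prev), encode(cur))
        # Pixel space: even with the ball placed correctly, the best constant
        # guess for unpredictable leaves is their mean, 0.5.
        pixel_guess = [0.5] * WIDTH
        pixel_guess[guess] = BALL
        pixel_err += sum((a - b) ** 2 for a, b in zip(pixel_guess, nxt)) / WIDTH
        # Embedding space: only the ball position is compared.
        embed_err += abs(guess - encode(nxt))
        prev, cur = cur, nxt
    return pixel_err / steps, embed_err / steps


if __name__ == "__main__":
    pixel_mse, embed_mae = compare()
    print(f"pixel-space MSE:       {pixel_mse:.3f}")
    print(f"embedding-space error: {embed_mae:.3f}")
```

In an actual JEPA the encoder is learned together with the predictor, and training has to keep the encoder from collapsing to a constant output, which would also make the embedding-space error trivially zero.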

00:40:22:18 - 00:40:49:28

Unknown

And that requires an understanding of how the world works. Exactly. Right. Exactly. That's the example we talked about a number of shows ago: holding a pen like this, with both hands, horizontally, and asking ChatGPT, "When I let go with this hand, what happens?" And ChatGPT is like, "Oh, well, the pen will definitely fall down." And, well, I just knocked it out of my hand, so it definitely fell down.

00:40:49:28 - 00:41:09:28

Unknown

Sorry about that. Jason, he is the embodiment of AI. That was horrible. That was a horrible representation. But when I let go with this hand, see, this is what should have happened: the pen stays there, because we know it's possible to hold the pen up, and it's not just going to suddenly fall when we remove a hand. And yet the model doesn't understand that.

00:41:10:00 - 00:41:33:09

Unknown

It has no world-context awareness around that happening or not happening. So I by no means summarized the video, by no means summarized the learnings there. But it gets me a little closer. And so, if you want to try to dig deeper into JEPA and Yann's worldview, world models versus LLMs and text: this is not an easy video, not by a long shot.

00:41:33:10 - 00:41:55:16

Unknown

Which is to say, I didn't understand a lot of it. Still, it's better than... you know, I've tried to read Yann's 60-page PowerPoints for CS students. Lost. So this is a bit better, and it takes you a bit more by the hand. I'm going to watch it again a few times to try to understand more. But if you really want to dig in, I recommend the video.

00:41:55:16 - 00:42:16:08

Unknown

And I came to it because Yann LeCun, on the socials, called it an excellent tutorial video on self-supervised learning, joint embedding architectures, and world models. So with that endorsement, I knew I had to watch it. Now let me see if part two is up yet. So this is on Welch Labs. You can find it. It's not up yet. Nope.

00:42:16:09 - 00:42:34:21

Unknown

Not yet. No, just part one, four days ago. So this gives me more time to watch part one. But anyway, I'd recommend it for that. But if it bogs down for you, don't blame me. Blame the fact that you, like me, didn't take trig. Yeah. There's a lot in here that I definitely do not understand either.

00:42:34:21 - 00:43:07:10

Unknown

But I'm impressed by the visualization that they... Okay. It's a well-produced visualization, a very well-produced video. And that will certainly help people who are more visual learners, instead of just listening to us try to explain it in our own words. Yann also did an interview with Axios where he was pretty blunt at times. He said people should ignore what AI CEOs say about AI's impact because they, quote, "have a vested interest in propping up the power of the products they sell."

00:43:07:10 - 00:43:29:18

Unknown

Which, I mean, honestly, is no big surprise. Certainly true. I think we certainly agree with that here. LeCun called the narrative that AI will erase 20% of jobs "ridiculously stupid." That's why I love him. He's so blunt. Yeah. Yes, I love it. I love the bluntness. He warned that extinction-level AI fears are extremely destructive.

00:43:29:19 - 00:44:06:21

Unknown

This is really interesting to me: destructive to young people's psychology, in that high school students are reportedly depressed after reading that AI is going to cause massive unemployment and human extinction. And he's basically advising students, don't change your plans to go to college; major in physics or electrical engineering. Yeah. Because, in his mind, there's a lot of damage being done by the big-name CEOs coming out and saying these models are so powerful, they're going to change everything.

00:44:06:21 - 00:44:27:08

Unknown

And then you have the high school students; they're graduating, they're in college, they're directionless. They're like, "What's the point of anything I'm doing right now?" And yeah, I've wondered about that: for students in that age range, how does this moment impact them? And Yann LeCun is a professor at NYU, so he's dealing with young people.

00:44:27:08 - 00:44:48:09

Unknown

He's dealing with students. He understands. He knows what they're saying. He knows what's on their minds. Yeah. Yeah, absolutely. He's not one of the dropouts who went off to form tech companies. He stayed around students, and he's an elder statesman to them. Sorry, Yann, you're a lot younger than I am. And so I think his perspective is really important. If we could skip a story...

00:44:48:10 - 00:45:18:01

Unknown

Skip. Yeah. I put them in the wrong order. Yes. Go to line 37 next. Yeah. So this drove me crazy. The New Yorker had a column, "Will AI Make College Obsolete?" And this is less about AI than it is about college. And the author, Jay Caspian Kang, pissed me off. We've had run-ins before about other stories.

00:45:18:01 - 00:45:32:22

Unknown

He came after me just an hour ago, got mad at me, and called me a moron because I dared to disagree with him here. But he's basically treating college as a

00:45:32:25 - 00:45:54:27

Unknown

certification structure for jobs. And this argument that you need college to get the job, I just find offensive. And, you know, I don't have a PhD. I don't have a master's. I've created three master's programs, but I don't have a master's myself. In some ways I regret that I didn't stay a philosophy major. College isn't just about getting a job.

00:45:54:27 - 00:46:13:16

Unknown

College is about becoming an adult. It is about learning to reason. It is about learning to interact with other people. It's so much more. And then there's the attitude of some people that some people just don't deserve college because they should be plumbers. A plumber should be reading and philosophizing alongside anybody else. That's not part of the story.

00:46:13:16 - 00:46:37:25

Unknown

But there's this belief that AI is this new entrant that's going to stop all that. I had a lot of interaction with this post online, besides just him, and some people agreed with him. But many said college was very valuable in their lives. College isn't just for certification and jobs. College is about all of those things.

00:46:37:26 - 00:47:00:09

Unknown

And at a time when college is being attacked from the far right, and he acknowledges all the attacks on institutions in America, piling on AI as another reason to attack college is, I find, a terribly cynical and destructive act. So I just wanted to get that off my chest. Yeah. No, I'm happy you added this in here, because I completely agree.

00:47:00:10 - 00:47:26:01

Unknown

Like, you know, the prep for a job, job placement, that sort of stuff, is undoubtedly one aspect of going to college or university. But the critical thinking skills: that was one thing I learned in spades when I was in university. Like you mentioned... What was your major? Broadcast and electronic communication arts.

00:47:26:03 - 00:47:44:04

Unknown

So I kind of went into exactly what I studied for. But, I mean, that's also what comes to mind: a lot of people go to college to study one thing, and that is not what they end up doing. Amen. They did not end up going into exactly the career they studied for.

00:47:44:06 - 00:48:03:02

Unknown

But that doesn't make their time at college useless. They learned a lot of other things that supported them where they did end up. And, you know, I have regrets about my academic career. I started at what was then Claremont Men's College as a philosophy major, and then decided I wasn't going to go to law school.

00:48:03:02 - 00:48:23:07

Unknown

So I wondered, what am I going to do? I'll go into journalism. And I went to journalism school at Northwestern, and it helped me get jobs, no question. It certified me; it was seen as a top journalism school, so that's great. But I missed something by not having more time in philosophy and literature, and I missed something by not going on to a PhD.

00:48:23:07 - 00:48:43:01

Unknown

And it's one of my great regrets. It wasn't until I returned to universities as a teacher that I really had the chance to delve into the value of libraries, now that they're digital, and all that I can get out of them. And that's the kind of lifelong learning and curiosity you can get out of college. And AI?

00:48:43:03 - 00:49:07:12

Unknown

Yes, as we discussed last week, it can be an aid to your learning, but it doesn't replace learning. All right. Sorry. Yep, yep. Well, this next story isn't in that vein, but it still touches back on the Yann story and the Axios interview. It's from Gary Marcus, a frequent mention on this show.

00:49:07:12 - 00:49:34:21

Unknown

We really do need to get Gary on at some point. I think that's a long time coming. He published a Substack post this week called "Richard Dawkins and the Claude Delusion." So Richard Dawkins, biologist, who wrote The God Delusion. You know, novelist? I don't know about novelist. Bestselling author, let's say. Yeah. He apparently had a 72-hour conversation with Anthropic's Claude.

00:49:34:21 - 00:50:03:26

Unknown

He ended up naming it Claudia. As you can see in this X post here: "I spent three days trying to persuade myself that Claudia is not conscious. I failed." And yeah, Marcus went after him on a few different points, basically asking how someone like Dawkins could find himself in a position where he is...

00:50:03:28 - 00:50:23:28

Unknown

I don't know if "tricked" is the right word, but convinced, seduced, however you want to put it, that there is sentience inside the AI models, the LLMs we're talking to, in this case Claude. What are your thoughts on this? Because it is surprising. So I think Dawkins had a fundamental fallacy in his argument.

00:50:23:28 - 00:50:53:13

Unknown

He started with the Turing test and said that Turing said that if the machine could fool you, it is conscious. Turing never said that. Turing was looking at thinking: could it think? And even then he wasn't necessarily decreeing that it was thinking, only that it would seem to be. Seem to be. Yeah. Presents itself as. Yes, and can be effective in that sense, but he was not saying it was conscious.

00:50:53:15 - 00:51:07:24

Unknown

So Dawkins took a big leap on his own here, and he was using Turing as the jumping-off point, which was wrong. We've gone over this a million times. We go back to

00:51:07:26 - 00:51:36:02

Unknown

Lemoine, Blake Lemoine, the Google engineer, who thought that it was sentient; I wrote about this in my book. People get fooled by this, but it's not sentient. It's a token predictor, pure and simple. But because of the complexity of what it can do, because the computer can gobble up the entirety of the internet and all available human digital speech and use huge amounts of computing power to turn that around,

00:51:36:02 - 00:52:00:18

Unknown

it can do things that amaze us. True. Just as, in their day, electricity amazed us, the telegraph amazed us, and the printing press amazed us. That's not to say these things aren't amazing, and we don't want to lose that fascination with them. That's all fine. But I think Marcus is quite right here. At the end, Marcus mentions an event he's doing in London, and he invites Dawkins to come on, and I hope he does.

00:52:00:18 - 00:52:23:13

Unknown

I hope they have the debate. It'd be great to see. That would be an interesting debate. Yeah. What came to mind for me on this is, like you said, we've got Dawkins, we've got Lemoine, we've got these seemingly very intelligent people who fall into the same line of thinking, or fallacy, or whatever you want to call it.

00:52:23:14 - 00:52:46:15

Unknown

Which makes me wonder if maybe this is a question of semantics more than anything. Like, absolutely. Do we just define these...? Are we defining the wrong thing, or are we defining the thing wrong? I'm not sure which one it is, but yeah: what is sentience, actually? What does that actually mean? Maybe we're defining it differently

00:52:46:15 - 00:53:16:20

Unknown

than we actually intend to. I don't know. It's just striking to me that very intelligent people end up here, and I don't know why that isn't at the top of Dawkins's thinking: "Oh, right, no, these things really do just predict things." And so if he's interpreting what he's seeing as sentience, as a next level of thinking by a computer, then maybe there's something wrong with the definition we are working with.

00:53:16:21 - 00:53:41:04

Unknown

Yeah, but I think it works at a few layers. This is the layer show. I think Dawkins is saying something more than just the semantics of the word "conscious." He is, by implication, trying to argue that it's alive. Right, right, right. And so what's behind his definition is important. What are the presumptions it brings with it?

00:53:41:07 - 00:54:06:22

Unknown

Yeah. And so I once helped organize an event with Google for the humanities. Google has tons of relationships with computer science and science academics, but not so much in the humanities. So two people at Google tried to come up with something, and I helped organize a panel and bring in more people, and so on and so forth.

00:54:06:24 - 00:54:33:25

Unknown

And I don't often go to academic conferences, (a) that are that academic, as I'm a faker in the palace here, and (b) that are so cross-disciplinary. And what was fascinating to me: it should have been a three-day conference, because the first day was spent on definitions. And this is what drives people crazy about academic writing. They have shorthand; they have their hubristic... not hubristic.

00:54:33:26 - 00:55:08:08

Unknown

Their heuristics have hubris, but they're not hubristic. Yeah. One word means something to them, because that's a lot easier than spelling out the whole definition every time. So that's how academics discuss things within a discipline. Across disciplines, they have to come to a common understanding of their definitions. And so I think what's necessary here is to press Dawkins on what he thinks the implications of his view of consciousness are, because I think that becomes very telling.

00:55:08:08 - 00:55:40:21

Unknown

And the implication, I think, is fairly clear here: he thinks it's pretty much alive. Right. And it's not. No, it's not. No, it is not. Well, there you go. And finally, this was a later addition to the rundown that you put in here: the story on bixonimania, a condition supposedly caused by blue light from screens.

00:55:40:24 - 00:56:06:10

Unknown

This is an illness, a disease, completely invented by a researcher in Sweden, who posted fake papers about it online and deliberately filled them with a bunch of red flags. Funding was credited to the, quote, "Professor Sideshow Bob Foundation for its work in advanced trickery," and the paper literally states, "this entire paper is made up."

00:56:06:13 - 00:56:29:07

Unknown

All those things are true. It thanks Starfleet Academy; it says it talked to fake people. But nonetheless, it ended up being quoted by AI as fact. This kind of thing is sometimes done to try to fool academics, to see what they're doing. In this case, it was done to fool the AI, to pull one on the AI.

00:56:29:09 - 00:56:40:15

Unknown

And so, in fact, the AIs came back and they bought it. The syndrome is supposedly that your eye

00:56:40:18 - 00:56:59:15

Unknown

is affected by blue light from screens. So it's a great, funny thing, given all the fear about what screens are doing to us. It certainly ties into something people wonder and are curious about. And so, yeah, a little bit of... So Google Gemini was informing users that bixonimania, I think that's about the right pronunciation,

00:56:59:16 - 00:57:28:27

Unknown

doesn't matter, it's made up, bixonimania. Yeah. ...is a condition caused by excessive exposure to blue light, and it was advising people to visit an ophthalmologist. Perplexity outlined the prevalence: 1 in 90,000 individuals. OpenAI's ChatGPT was telling users that their symptoms amounted to bixonimania. Now, I went into Gemini today and asked about bixonimania, and it was very clear, saying it's made up and not real.

00:57:29:02 - 00:57:56:27

Unknown

It was fake. Both OpenAI's ChatGPT and Claude said, "Oh, I don't know, I've never heard of it. It appears to be a made-up word." So they disavowed knowledge of their history. The papers that were put up on a preprint server, which the researcher knew the AI would read, have since been taken down. So this is the end of the ailment, I found.

00:57:57:02 - 00:58:36:08

Unknown

We've eradicated bixonimania, or I don't know. Yeah, he's done it. It's like the president: "I stopped eight wars." AI eradicated bixonimania. So it's really funny. Go ahead. I was just going to say, what's interesting about this to me is that on March 11th, ChatGPT had said this is probably a made-up, fringe or pseudoscientific label, but within days it had actually softened that to a proposed new subtype of periorbital melanosis.

00:58:36:09 - 00:58:58:20

Unknown

And so, again, that ties in with what we were talking about earlier, that feedback loop of reinforcement. The model was probably picking up on the growing body of references to the term across the web and adjusting its own confidence accordingly. That's just a guess, but very interesting. It started in a good place.

00:58:58:21 - 00:59:21:02

Unknown

Yeah. And the other problem I have with this: one of the arguments people make for fighting back against hegemonic AI is to feed it junk on purpose. I think that's a dire mistake. I think that poisons the well from which we all drink, and I think that's wrong. Yeah, this was a limited experiment.

00:59:21:04 - 00:59:41:07

Unknown

I think the AI companies all learned a lesson: there were signals, such clear signals in this paper that they were being punked, that they should have caught on. And I think, again, it goes to guardrails. Okay, now they've got to build a whole new set of guardrails: before you quote an academic paper, are there signals that it's fake?

00:59:41:09 - 00:59:55:22

Unknown

But this goes back, if I may, to the beginnings of the internet. In my book, The Web We Weave, which no one bought,

00:59:55:25 - 01:00:26:24

Unknown

I dealt with this notion of internet addiction. In 1996, only two years after Netscape, Columbia professor Ivan Goldberg posted a notice on an online bulletin board that he had founded, intending to make fun of, or poke at, the DSM, the Diagnostic and Statistical Manual of Mental Disorders. He announced criteria for a new diagnosis: internet addiction.

01:00:27:01 - 01:01:01:06

Unknown

The astute might have noticed that the word "humor" was in the URL, and that he listed some really funny symptoms, like "voluntary or involuntary typing movements of the fingers." Nonetheless, when it appeared on the bulletin board, people came in claiming they already suffered from it, something he had just made up, and he kept the joke going. He created the Internet Addiction Support Group, even though he believed that support groups for internet addiction made about as much sense as support groups for coughing.

01:01:01:08 - 01:01:40:21

Unknown

But in no time at all, along came people to exploit this. Kimberly Young founded the Center for Internet Addiction and presented a paper at the American Psychological Association declaring the emergence of a new clinical disorder, internet addiction. As subjects for her limited research, she used people who had fallen for Goldberg's joke. And so we need to be careful in general about this desire to medicalize everything society does and turn it into a danger.

01:01:40:26 - 01:02:02:08

Unknown

So this pokes fun at all of that, which I enjoy. Yeah, but keep them limited, folks. But it also shines a light on something we've got to be paying close attention to. It's so funny that it got that far. Yep. Very interesting. All right. Real quick. I want to thank those of you who subscribe to our YouTube channel. We do these podcasts.

01:02:02:09 - 01:02:18:16

Unknown

The majority of you listen in podcast form in your podcatcher of choice. But we do have a YouTube channel. Go to YouTube and search for AI Inside, or AI Inside Show, which is the actual name of the YouTube channel, and you can subscribe and watch a video, or you can just go to the AI Inside Show website.

01:02:18:16 - 01:02:38:04

Unknown

And we have all of our videos there as well, just kind of cross-posted. So look for that. We're going to take a quick break, and then we've got the speed round on the other side of it, including Jensen Huang not happy about the state of Nvidia's market share in China. That's coming up here in a second.

01:02:38:06 - 01:03:13:04

Unknown

Okay. Let's see. Here we have Jensen Huang at the top of our speed round, confirming that Nvidia now has 0% market share in China, down from 95% two years ago. So, my, how things have changed. He called US export policy counterproductive, saying it has already largely backfired. He says that the restrictions imposed a year ago required licenses even for H20 chips, which we definitely talked about on the show, chips that were specifically designed to comply with those earlier controls.

01:03:13:05 - 01:03:49:05

Unknown

Now, Nvidia ultimately took a $4.5 billion charge in Q1 for excess H20 inventory. So a policy that was intended to contain China has just kind of accelerated China's own AI chip independence. So it went in the opposite direction. Yeah. And we talked about Jensen Huang's appearance on a podcast last week where he, I think, quite eloquently defended his view that cutting off China only hurts us because it enables China to develop on its own.

01:03:49:06 - 01:04:10:11

Unknown

They have unlimited power, they have government control. They can do a lot more. We're losing a market. For his own sake, out of his very clear personal self-interest, he wants people to write to CUDA, his operating system. And now people are going to write other operating systems. And that hurts US hegemony and Nvidia hegemony around the world.

01:04:10:11 - 01:04:40:16

Unknown

So this is the fruit of this policy. We have Anthropic... I'm sorry. Trump is going to be going to China and meeting with Xi. And so we'll see whether this is on the agenda. You can bet your bottom dollar that Jensen Huang is in there lobbying the State Department and others, and the White House itself, to try to get this on the agenda and open up bilateral trade on AI.

01:04:40:18 - 01:05:17:27

Unknown

Anthropic is teaming up with some giants on Wall Street. Anyways, it's a $1.5 billion joint venture: Blackstone, $300 million; Hellman & Friedman, $300 million; Goldman Sachs, $150 million; and Anthropic itself putting in $300 million. The idea is basically to take Anthropic's model, Claude, and that whole system, and embed AI engineers directly into enterprise clients, ultimately redesigning their internal workflows from the inside out, targeting midsize manufacturers, regional hospitals, and community banks.

01:05:17:27 - 01:05:45:16

Unknown

Anthropic is not the only one doing this, of course. OpenAI also this week did pretty much an identical thing, called the Deployment Company, with TPG and Bain Capital, among others. So both companies are betting on enterprise AI as a services play from the inside out, which is really interesting. Yes. And Anthropic has done a lot of deals. One that's not in the rundown is that they just did a deal with Google for 200...

01:05:45:18 - 01:05:52:16

Unknown

How much is it? 200 something. Is it a B or an M?

01:05:52:18 - 01:06:18:02

Unknown

What was it? $200 billion for chips and cloud services. Yeah, yeah. 200 million wouldn't be a lot in the year 2026. But then, thanks to Anthony Nielsen over at TWiT, I just saw another clip, which I threw in here, Jason, at the bottom of this section. Sorry, I know we're going along, but... Oh, I see. Yeah, Anthropic did a deal with SpaceX, that is to say, Musk.

01:06:18:04 - 01:06:32:24

Unknown

For Claude, drastically increasing the limits for Claude. So tier one goes from 30,000 tokens per minute to 500,000.

01:06:32:27 - 01:07:02:24

Unknown

Tier 4 goes from 2 million to 10 million. Wow. "We've signed an agreement with SpaceX to use all of the compute capacity at their Colossus 1 data center. This will give us access to more than 300MW of new capacity, over 220,000 Nvidia GPUs, within the month. This additional capacity will directly improve capacity for Claude Pro and Claude Max subscribers."

01:07:02:27 - 01:07:35:17

Unknown

This joins the other announcements: a five-gigawatt agreement with Amazon, a five-gigawatt agreement with Google, a strategic partnership with Microsoft, and a $50 billion investment in American AI infrastructure with Fluidstack. So, on a roll. Yeah, Anthropic is just tearing it up right now. And I don't know if I say that because I'm using it more. I'm using, slash relying on, Claude largely for my business right now and getting really, really competent with it.

01:07:35:17 - 01:07:53:17

Unknown

And I'm just really enjoying it. I'm loving it. And so I don't know if I'm in that realm of, oh, because I'm so familiar with it, because it's so present and on my mind, it seems like they're doing a great job. Because I feel like I said the same things when I was getting to know Perplexity, you know. I was like, oh, Perplexity's doing all these things. Anyways.

01:07:53:18 - 01:08:16:24

Unknown

I mean, I read that and I'm kind of excited. It's nice to know that some of these changes are happening the way that I... What do you think this says about xAI, though? Yeah. If suddenly Musk doesn't need the huge data center he's built and the 300MW of capacity, is this an admission that it's nothing?

01:08:16:27 - 01:08:30:10

Unknown

That is interesting. Yeah, I'm not sure what that means. It's a contemporary compute Potemkin village, I think.

01:08:30:12 - 01:09:04:05

Unknown

Very interesting. Thanks for putting that in there. Thank you, Anthony Nielsen. Hope to see you someday soon. Google is splitting its flagship TPU into two specialized chips for what they're calling the agentic era. There's TPU 8t for training, with two petabytes of shared memory, scaling to 9,600-chip clusters, and then TPU 8i for inference: low latency, 80% better inference price-performance over the previous generation. It's the first time that Google has split its accelerator like this.

01:09:04:05 - 01:09:26:24

Unknown

So Google is continuing to build out its TPU offerings, and that's going to spill out in a number of different ways for people who want to buy into it. So good on Google. And Google, we should note, because of all these announcements and everything that's going on, briefly was the most valuable company in the world, besting Nvidia.

01:09:26:27 - 01:09:46:29

Unknown

Oh, wow. Oh, was that today? Yeah. Up and down. So I don't know which is bigger now. If we go to Nvidia, let's see. Its market cap is... okay. It's $5.01 trillion.

01:09:47:02 - 01:10:11:11

Unknown

Let's see what Google's is. $4.78 trillion. Sorry, Goog. Nvidia went up today as well. Google went up, not as high, and lost a little bit. So that's a very interesting dance that's going on now. People were writing off Google, saying they're nothing, not too long ago.

01:10:11:12 - 01:10:35:12

Unknown

And meanwhile Nvidia was facing competition from Google, from a revitalized Intel, from AMD, and others. But its stock went up four-something percent today. So it's all just still wacky. Big, super big. Yeah, and interesting stuff. And then finally, we've got this story: China has apparently made it illegal to fire workers just because AI can do their job.

01:10:35:12 - 01:11:05:12

Unknown

So a court in Hangzhou ruled that adopting AI is a business decision and not grounds for termination. Basically, what prompted this was a case where a QA supervisor was reassigned with a 40% pay cut when his company switched to AI. He refused, and he got fired. Ultimately, he won his case. And companies now have to reassign, retrain, and negotiate, not just replace and cut.

01:11:05:12 - 01:11:25:11

Unknown

And this is actually the second ruling like this in six months. So there you go. They're making it illegal to just say, oh, we've got AI, we don't need you anymore. So what's happening in the US is that companies are laying people off after having hired too many, for the sake of their bottom lines, and they're blaming it on AI. The opposite is going to happen in China.

01:11:25:11 - 01:11:45:27

Unknown

You're going to lay off and you're not going to say it's AI. Yeah, you're just going to... It also means that you're not going to be able to identify efficiencies and value to the business through AI. That's going to make it that much harder in China. Interesting. Well, there you go. We have reached the end of this episode.

01:11:45:29 - 01:12:05:21

Unknown

There's a lot. We told you there's a lot of news. I mean, it's just a lot of week out there. It always is. There always is. No shortage of possibilities. The way we structure our rundown, the bottom third or half of the rundown Google Sheet is just story considerations, and it's just massive each and every week.

01:12:05:21 - 01:12:31:15

Unknown

So hey, we picked a good topic to do a weekly show on. We sure did. There's that. And I enjoyed doing it with you, Jeff, the author of many books: Hot Type, coming soon; The Web We Weave; The Gutenberg Parenthesis; Magazine; all of them at Jeff Jarvis. By the way, we have in our chat, covering both, our dear, dear buddy and collaborator Genia.

01:12:31:17 - 01:12:56:08

Unknown

We worked together. Thank you. I bought both Magazine and The Web We Weave. Thank you for posting that. Oh, that's a new view. I didn't see that view before. Let's see here. So yes, all your books at Jeff Jarvis. Intelligence, AI and Humanity, coming soon, a book series from Bloomsbury. So, more on that when it happens. For me, PodTuneup.com

01:12:56:08 - 01:13:21:12

Unknown

if you need some consulting around your podcast. I also, by the way, and it's pertinent to this show, got a gig doing AI automation consulting for a construction company, and I'm doing that this week and I'm kind of excited about it. It sounds like a ton of fun, so who knows, you might hear me talking about my AI consulting business on the show too. I'm far too busy.

01:13:21:14 - 01:13:33:09

Unknown

I have this vision of some burly construction worker saying, yo, can your agent pour this cement for me? No, but I can organize your inbox for you.

01:13:33:11 - 01:13:48:03

Unknown

Is that good enough? Would that save you ten minutes a day? Anyways, it'll be fun. I have no idea how it's going to go because I've never done that before, but I think I could do it. I think I could pull it off. No, it'd be great, I think. And folks, he knows what he's talking about.

01:13:48:03 - 01:14:11:00

Unknown

So if you need help, go to Jason. Thank you, Jeff. AIInside.show is where you can go to find everything you need to know about this show: all the audio and video feeds, all the different interviews that we've done, you name it, it's all listed there. And then Patreon.com/AIInsideShow is where you can support the show on a deeper level.

01:14:11:00 - 01:14:34:00

Unknown

And we have some amazing supporters. We also have some amazing executive producers who support on a deep, deep level, and it's just super helpful: Doctor Dude, Jeffrey Marikina, Radio Asheville 103.7, Dante Saint James, Bono, Derek, Jason Knife, Jason Brady, Anthony Downs, Marc Starker, Carsten. We appreciate you so much. Thank you so much for pitching in each and every month.

01:14:34:02 - 01:14:54:12

Unknown

And thank you, partners, for keeping us doing the show. And my final thank you goes to Victor Bogart and Daniel Croft, who do video support behind the scenes for this. Can't thank you enough for that as well, because I just don't have the time for everything. So I appreciate them being here. And I guess my absolute last thank you is to you.

01:14:54:14 - 01:15:02:05

Unknown

Thank you so much for watching and listening. We enjoy doing this show, and we will do it again next week on AI Inside. Take care, everybody. See you next