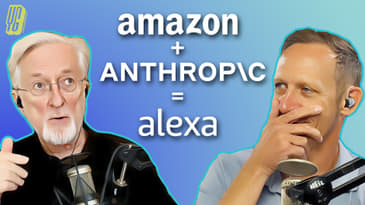

Jason Howell and Jeff Jarvis dive into Amazon's AI plans for Alexa, Elon Musk's Colossus announcement, the cultural implications of AI in entertainment and elections, and more!

Please support our work on Patreon: http://www.patreon.com/aiinsideshow

NEWS

@elonmusk: This weekend, the @xAI team brought our Colossus 100k H100 training cluster online.

OpenAI plans to build its own AI chips on TSMC's forthcoming 1.6 nm A16 process node

NaNoWriMo is in disarray after organizers defend AI writing tools

Yuval Noah Harari: What Happens When the Bots Compete for Your Love?

@1x_tech: Introducing NEO Beta. Designed for humans. Built for the home.

[00:00:00] [SPEAKER_01]: This is AI Inside Episode 33, recorded Wednesday, September 4th, 2024.

[00:00:07] [SPEAKER_01]: Amazon's Next-Gen AI Alexa.

[00:00:11] [SPEAKER_01]: This episode of AI Inside is made possible by our wonderful patrons at

[00:00:15] [SPEAKER_01]: patreon.com/aiinsideshow. If you like what you hear, head on over and support us directly, and thank you for making independent podcasting possible.

[00:00:30] [SPEAKER_01]: Hello everybody and welcome to another episode of AI Inside, the show where we take a look at the AI that is layered like a

[00:00:37] [SPEAKER_01]: delicious lasagna through the world of technology. I'm one of your hosts, Jason Howell joined as always by my co-host Jeff Jarvis.

[00:00:46] [SPEAKER_01]: How you doing Jeff?

[00:00:47] [SPEAKER_00]: Hello my podcast boss, my Android god, my AI collaborator, my lasagna chef. Good to see you.

[00:00:56] [SPEAKER_00]: There we go.

[00:00:57] [SPEAKER_00]: I think we need a new logo for the show, the lasagna, AI lasagna.

[00:01:01] [SPEAKER_01]: I like it. Well actually, you know when you do look at the, let's see here if I have the image.

[00:01:06] [SPEAKER_01]: When you look at the layers.

[00:01:07] [SPEAKER_01]: Yeah, there's layers on the inside part anyways.

[00:01:10] [SPEAKER_00]: So just need some oozing cheese in it.

[00:01:12] [SPEAKER_00]: Yeah.

[00:01:13] [SPEAKER_01]: Yes, some bubbling cheese dripping out. It's 1pm where you are when we record this show. Does that mean you've already eaten your lasagna for lunch or?

[00:01:24] [SPEAKER_01]: Okay. So you're no longer hungry at this point.

[00:01:26] [SPEAKER_01]: No, we talk.

[00:01:27] [SPEAKER_01]: I had a nice empanada today.

[00:01:29] [SPEAKER_01]: Oh okay, excellent.

[00:01:30] [SPEAKER_01]: The world is my oyster, but I will not be eating oysters when I eat lunch here after this show.

[00:01:36] [SPEAKER_01]: That's a later problem.

[00:01:39] [SPEAKER_01]: For now, we've got a lot of things to talk about before we get into everything.

[00:01:43] [SPEAKER_01]: Just want to remind people.

[00:01:45] [SPEAKER_01]: We do have a Patreon, patreon.com/aiinsideshow, where you can offer your support for the show and for what we do, like Enda McCallig.

[00:01:55] [SPEAKER_01]: Enda, thank you for being there since January, since the very beginning. Appreciate you and your support. patreon.com/aiinsideshow.

[00:02:03] [SPEAKER_01]: Also just a reminder because we have a lot of people that join us for the live stream and I'm curious.

[00:02:09] [SPEAKER_01]: Oh, live streamers, are you subscribed to the podcast? Because if so, well, that would just make me and Jeff very, very happy, and it would ensure that you wouldn't miss anything even if you don't catch the live stream.

[00:02:20] [SPEAKER_01]: aiinside.show. Go there.

[00:02:23] [SPEAKER_01]: You'll find all the ways to subscribe to this podcast.

[00:02:26] [SPEAKER_01]: Make sure you never miss.

[00:02:27] [SPEAKER_00]: And if you're watching on Twitter or Facebook right now, please share it now and say, oh, stuff to watch.

[00:02:34] [SPEAKER_01]: Yeah, that's awesome.

[00:02:35] [SPEAKER_01]: I love it.

[00:02:35] [SPEAKER_01]: Like we've been doing this the last handful of weeks, you know, sharing to especially you've been sharing to your Twitter feed.

[00:02:42] [SPEAKER_01]: And I mean, it immediately Twitter obviously is doing something right as far as surfacing when something goes live because it's immediate.

[00:02:50] [SPEAKER_01]: The second you switch that on those numbers start going through the roof people see it and that's that's a that's a win for the X platform, I guess.

[00:02:59] [SPEAKER_00]: Yeah.

[00:03:00] [SPEAKER_01]: So, um, all right, well, without further ado, why don't we get into some news, because we've got some pretty cool stuff to talk about this week in the world of AI, starting with Amazon.

[00:03:10] [SPEAKER_01]: We've got the... I almost said it out loud, and I don't want to set off anybody's assistants.

[00:03:16] [SPEAKER_01]: So I'll call her Madame A, which was Stacey Higginbotham's name for A-L-E-X-A, for Amazon.

[00:03:26] [SPEAKER_01]: Anyways, the Madame A universe is about to get a generative AI infusion, according to sources who spoke to Reuters.

[00:03:37] [SPEAKER_01]: It's going to be powered... I guess the initial plan, my understanding of the initial plan, was that Amazon was going to do it all on its own.

[00:03:45] [SPEAKER_01]: But the sources have been saying that that hasn't been working out so well. Those initial trials had a six-to-seven-second latency between question and answer, which, that's a long time to wait.

[00:03:58] [SPEAKER_01]: That really is.

[00:04:00] [SPEAKER_00]: I once had I once had a boss who spoke very slowly and it took me a little while to learn that I had to instruct everybody else.

[00:04:07] [SPEAKER_00]: Don't finish a sentence.

[00:04:09] [SPEAKER_00]: Don't give him time right because it doesn't jump in there right.

[00:04:13] [SPEAKER_01]: Yeah.

[00:04:14] [SPEAKER_01]: Yeah, yeah, yeah.

[00:04:15] [SPEAKER_01]: And you jump in there, you can get in trouble, you know, because that's just, the boss, that might be his cadence. In this new instance of Madame A, it would be powered by Anthropic's Claude models.

[00:04:29] [SPEAKER_01]: According to these sources, it would be fee-based, so five to $10 a month, somewhere in that range, for the, quote, "Remarkable" version.

[00:04:37] [SPEAKER_01]: I don't know if that's going to be the actual name of it, like A-L-E-X-A Remarkable edition, versus the classic version, which will be and continue to be free, because that's what most people are used to paying for their A-L-E-X-A.

[00:04:53] [SPEAKER_01]: And yeah, so Claude, coming to your Amazon device.

[00:04:58] [SPEAKER_01]: Color me dubious for two reasons.

[00:05:01] [SPEAKER_00]: On the Amazon side, Madame A has not been a hit after all this time and all this effort and all these devices. I mean, how many devices? I've got three or four sitting around here that I never plugged in because they came with something.

[00:05:12] [SPEAKER_00]: Yeah, we all have tons of the Madames hanging around, which sounds a little weird.

[00:05:20] [SPEAKER_00]: And I don't know that just having more software in the back end is going to make a huge difference to the uptake of Madame A. That's point A. Point B:

[00:05:29] [SPEAKER_00]: We know, once again, put the record on and put the needle down, that large language models don't do facts well.

[00:05:37] [SPEAKER_00]: So what are you going to ask it?

[00:05:39] [SPEAKER_00]: If you're going to ask for a fact, the odds that you're getting a sure answer are not terribly high.

[00:05:48] [SPEAKER_00]: And in any case, you're going to doubt it.

[00:05:50] [SPEAKER_00]: So I don't really see what this marriage fixes either.

[00:05:56] [SPEAKER_00]: That whole question of the, there's the audio interface and the question interface, and those two things, I think, are still unproven.

[00:06:05] [SPEAKER_00]: Right.

[00:06:06] [SPEAKER_00]: Putting in a search box, we know works.

[00:06:08] [SPEAKER_00]: I want to search for this as an entity.

[00:06:10] [SPEAKER_00]: Find me that entity.

[00:06:11] [SPEAKER_00]: We know that works.

[00:06:13] [SPEAKER_00]: Ask a question, even in a search box, in ChatGPT.

[00:06:18] [SPEAKER_00]: I don't think people are necessarily clear how they're going to use it and audio as an interface is not clear.

[00:06:23] [SPEAKER_00]: So put it all together.

[00:06:25] [SPEAKER_00]: I shrugged.

[00:06:27] [SPEAKER_01]: What was the downfall, or the aspect of these smart home assistants

[00:06:34] [SPEAKER_01]: That was a failure because I mean, I remember when that first video came out and everybody was like, oh, my goodness, it feels like parody.

[00:06:41] [SPEAKER_01]: Remember that video?

[00:06:42] [SPEAKER_01]: It felt it totally felt like a parody video.

[00:06:44] [SPEAKER_01]: But then we quickly realized, oh no, Amazon's serious about this.

[00:06:49] [SPEAKER_01]: And it was pretty hot there in the beginning.

[00:06:52] [SPEAKER_01]: Google had its own.

[00:06:53] [SPEAKER_01]: I still have homes, Google homes throughout pretty much in every room.

[00:06:58] [SPEAKER_01]: Although it's doing it has some weird behavior now that is very strange that I've noticed in the last year, year and a half.

[00:07:08] [SPEAKER_01]: But what is the failure there?

[00:07:11] [SPEAKER_01]: Because I know that I use them, but I don't use them for anything that probably makes Google any money.

[00:07:16] [SPEAKER_01]: And that's probably the same for Amazon, right?

[00:07:18] [SPEAKER_00]: I think it's a classic technology case of solution without a problem.

[00:07:23] [SPEAKER_00]: And I don't think that people said that they were dying to do this and there was a lot of presumed uses.

[00:07:31] [SPEAKER_00]: That's why there were the add-ons, the kind of application layer for Madame A.

[00:07:35] [SPEAKER_00]: Yeah.

[00:07:35] [SPEAKER_00]: People added those things.

[00:07:36] [SPEAKER_01]: Which really complicated things from a user perspective.

[00:07:40] [SPEAKER_01]: It just made it far more messy even though like the benefit there was, yeah, we've made it more capable.

[00:07:45] [SPEAKER_01]: But then it became this huge cognitive load to keep in mind what it could do versus what it could not.

[00:07:53] [SPEAKER_01]: Right.

[00:07:53] [SPEAKER_00]: You've got to know to ask for it.

[00:07:55] [SPEAKER_00]: That's a huge issue.

[00:07:56] [SPEAKER_00]: Right, so that entire layer kind of went away and I just don't think people had a need.

[00:08:03] [SPEAKER_00]: And there was a presumption of it.

[00:08:06] [SPEAKER_00]: But I think the same is going to be true.

[00:08:08] [SPEAKER_00]: Could be true of chat methodology on AI.

[00:08:11] [SPEAKER_00]: We'll have to see over time.

[00:08:14] [SPEAKER_00]: It's presumed to take over search.

[00:08:16] [SPEAKER_00]: It's presumed to take over other things.

[00:08:17] [SPEAKER_00]: I don't think that's at all certain.

[00:08:19] [SPEAKER_00]: So we'll see.

[00:08:21] [SPEAKER_01]: Yeah, yeah.

[00:08:22] [SPEAKER_01]: And I mean all of the big companies behind these chatbots, they all have this vision of the chatbot being everywhere we already are.

[00:08:34] [SPEAKER_01]: Be it if we're wearing it around our neck or if it's in our smartphone or if it's pinned to our lapel.

[00:08:39] [SPEAKER_01]: Or if it just happens to be in every room because there's a device there.

[00:08:42] [SPEAKER_01]: They all want to be the vector for that interaction and that engagement.

[00:08:47] [SPEAKER_01]: And I don't know if people find something in these new, especially Amazon, if they find something in these new Echos, or whatever they're going to call them, that they didn't find before.

[00:09:00] [SPEAKER_01]: And yeah, I don't think it's about that too.

[00:09:01] [SPEAKER_00]: As Ozone says, thank you Ozone for the tip.

[00:09:05] [SPEAKER_00]: It's a timer.

[00:09:06] [SPEAKER_00]: It's an alarm.

[00:09:07] [SPEAKER_00]: Wonderful, but an alarm clock is not an industry.

[00:09:11] [SPEAKER_00]: It's not broad utility.

[00:09:13] [SPEAKER_00]: I absolutely agree.

[00:09:15] [SPEAKER_00]: If we were trying to imagine what would make it take off now, maybe to go to that point, if it were a different way to give you a reminder of things.

[00:09:28] [SPEAKER_00]: You know, part of this for us is, good morning, Jason.

[00:09:31] [SPEAKER_00]: The weather today is this and that.

[00:09:33] [SPEAKER_00]: And here's the headlines.

[00:09:35] [SPEAKER_00]: I can look at my phone and I can find out the time and weather.

[00:09:38] [SPEAKER_00]: You know me so well.

[00:09:39] [SPEAKER_00]: I feel so cared for Amazon.

[00:09:43] [SPEAKER_00]: Or Madame A, give me the NPR headlines, Madame A.

[00:09:47] [SPEAKER_00]: I can turn on NPR.

[00:09:48] [SPEAKER_00]: I can go to a podcast.

[00:09:51] [SPEAKER_00]: Yeah.

[00:09:51] [SPEAKER_00]: It's hard to imagine what that really entails.

[00:09:56] [SPEAKER_00]: I don't, I, I had the thing sitting next to me for three years, the Google version.

[00:10:01] [SPEAKER_00]: And all I ever did was tell it to stop when I use the word Google and then something else.

[00:10:06] [SPEAKER_01]: It only went off when you accidentally fired it off.

[00:10:09] [SPEAKER_00]: Yes.

[00:10:10] [SPEAKER_00]: And so that was a "Stop, Google, stop."

[00:10:12] [SPEAKER_00]: And in the car, I use voice, sometimes screaming at it in frustration because it's not doing what I want.

[00:10:19] [SPEAKER_00]: But that's, that's very straightforward.

[00:10:21] [SPEAKER_00]: It's, it's, I'm telling Waze where to navigate me.

[00:10:25] [SPEAKER_00]: I'm answering somebody's chat coming text.

[00:10:31] [SPEAKER_00]: That's kind of it.

[00:10:33] [SPEAKER_01]: Yeah.

[00:10:34] [SPEAKER_01]: Yeah.

[00:10:36] [SPEAKER_01]: Yep.

[00:10:36] [SPEAKER_01]: I, I agree.

[00:10:38] [SPEAKER_01]: I'm super curious to see, even though I realize this is, it's an obvious next step for these devices because it does seem like, you know, TNG the next generation of what these devices promise to do in the beginning.

[00:10:52] [SPEAKER_01]: I just don't know if people haven't already kind of made up their mind on devices like these based on the previous experience.

[00:10:59] [SPEAKER_01]: Anyways, coupled with the kind of societal perspective and understanding around AI, what we've seen, you know, from generative AI in the last couple of years being, you know, being not always truthful, getting a lot of things wrong, overstepping boundaries and kind of ethical boundaries and things.

[00:11:19] [SPEAKER_01]: It's, it's a lot of, a lot of hurdles to overcome.

[00:11:22] [SPEAKER_01]: But, but I also understand why Amazon's doing it and why Google is doing it.

[00:11:27] [SPEAKER_01]: Like I get it.

[00:11:28] [SPEAKER_01]: It's, it's to a certain degree it's low hanging fruit.

[00:11:30] [SPEAKER_01]: It's the devices already exist.

[00:11:32] [SPEAKER_01]: So why not turn them into your new vector for this technology?

[00:11:35] [SPEAKER_01]: But we'll see what actually comes.

[00:11:37] [SPEAKER_00]: Yeah.

[00:11:37] [SPEAKER_00]: I think if we go back to bringing up the podcast goes with Stacey Higginbotham.

[00:11:44] [SPEAKER_00]: But there was a lot of.

[00:11:45] [SPEAKER_01]: I wonder if your ears are tingling right now.

[00:11:46] [SPEAKER_01]: Yeah.

[00:11:47] [SPEAKER_00]: There was a presumption that the smart home would be it, right?

[00:11:52] [SPEAKER_00]: That then you tie in all those applications, you have it do things.

[00:11:54] [SPEAKER_00]: Right.

[00:11:55] [SPEAKER_00]: Smart home hasn't taken off that well as a result, either.

[00:11:59] [SPEAKER_00]: Again, Ozone says, talks about that.

[00:12:03] [SPEAKER_00]: And I think it's also even if you're in the Apple ecosystem or the Google ecosystem or one of the ecosystems, the integration is not good enough to say,

[00:12:14] [SPEAKER_00]: if I say, leave me a Keep message.

[00:12:17] [SPEAKER_00]: I don't really know how to do that.

[00:12:18] [SPEAKER_00]: It's easier for me to pick up the phone and put the Keep message in.

[00:12:21] [SPEAKER_00]: Yeah.

[00:12:22] [SPEAKER_01]: Yep.

[00:12:24] [SPEAKER_01]: Oh well.

[00:12:24] [SPEAKER_01]: Yep.

[00:12:26] [SPEAKER_01]: If, uh, if syntax gets involved, then it's all downhill from there.

[00:12:29] [SPEAKER_01]: That's just continually my take on these things is if this is going to improve the syntax thing, like truly understand exactly what I'm looking for.

[00:12:39] [SPEAKER_01]: And I don't have to think in the moment how to get it to do the thing.

[00:12:42] [SPEAKER_01]: I just talk the way I talk and it gets it the way another human being in the room might in the same situation.

[00:12:48] [SPEAKER_01]: Then I might be more inclined, but it still has to kind of prove that to me.

[00:12:52] [SPEAKER_01]: I'm not convinced entirely yet.

[00:12:54] [SPEAKER_01]: We'll see.

[00:12:55] [SPEAKER_01]: Um, Elon Musk announced on Twix X Twitter, whatever you want to call it, the launch of Colossus.

[00:13:04] [SPEAKER_01]: Oh, it's just sounds so big and massive.

[00:13:07] [SPEAKER_01]: Brrr.

[00:13:08] [SPEAKER_01]: Uh, so you can take Musk math with a grain of salt.

[00:13:12] [SPEAKER_01]: That's a shoot.

[00:13:13] [SPEAKER_01]: Yeah.

[00:13:14] [SPEAKER_01]: But he says it's the, quote, most powerful AI training system in the world, end quote, which

[00:13:19] [SPEAKER_01]: he expects to double in size from a 100,000 H100 training cluster to 200,000, via 50,000

[00:13:29] [SPEAKER_01]: H200s, uh, in just a few months.

[00:13:31] [SPEAKER_01]: So he's saying right out of the gate, it's the biggest and oh, by the way, in a couple

[00:13:36] [SPEAKER_01]: of months, it's going to be twice as big.

[00:13:38] [SPEAKER_01]: Uh, so it will continue to be the biggest.

[00:13:40] [SPEAKER_01]: He also says the 100K cluster was brought online in 122 days, a very short amount

[00:13:46] [SPEAKER_01]: of time, record time, according to Nvidia, uh, which put out a tweet, uh, to

[00:13:51] [SPEAKER_01]: acknowledge and to congratulate.

[00:13:53] [SPEAKER_01]: Um, and so when I was reading this, I was like, all right, so some of this feels

[00:13:57] [SPEAKER_01]: like Greek to me because as we've said many times on this show, we are, you

[00:14:00] [SPEAKER_01]: know, we are students in the world of AI.

[00:14:03] [SPEAKER_01]: We don't know all the answers and we kind of use the show to really learn

[00:14:07] [SPEAKER_01]: and understand and grow our knowledge of it.

[00:14:10] [SPEAKER_01]: So to understand the magnitude of this: the most powerful AI training system, air quotes,

[00:14:15] [SPEAKER_01]: relates to the number of GPUs behind the model.

[00:14:19] [SPEAKER_01]: If you compare what Musk is, is touting here, which is 100,000: Google AI is

[00:14:26] [SPEAKER_01]: currently at around 90,000, OpenAI somewhere around 80,000, Nvidia AI around

[00:14:32] [SPEAKER_01]: 50,000. You've got Meta and Microsoft both in that 60 to 70,000 range.

[00:14:36] [SPEAKER_01]: So when you compare them based on the numbers that I was able to find anyways,

[00:14:40] [SPEAKER_01]: who knows if they're 100% accurate, but Musk is touting something that beats

[00:14:44] [SPEAKER_01]: all of them.

[00:14:45] [SPEAKER_01]: And then if you're talking about, you know, a couple of months time, um,

[00:14:49] [SPEAKER_01]: doubling that, that would be, that would be pretty, uh, significant if,

[00:14:54] [SPEAKER_01]: if that is true, if you believe Musk math.

[00:14:58] [SPEAKER_00]: That's the key.

[00:14:59] [SPEAKER_00]: Elon Musk is the, is the most historic midlife crisis ever witnessed.

[00:15:06] [SPEAKER_00]: Everything has to be the biggest.

[00:15:09] [SPEAKER_00]: He has to set off rockets.

[00:15:10] [SPEAKER_00]: He has to create ugly trucks and he has to argue that he has the biggest AI there is.

[00:15:16] [SPEAKER_00]: But first, I think we need to be dubious on the information.

[00:15:20] [SPEAKER_00]: They say that to set up something of that size would generally take a year.

[00:15:25] [SPEAKER_00]: And so how the heck did he cut all of that?

[00:15:29] [SPEAKER_00]: Then interestingly, they said that, um, where is it?

[00:15:34] [SPEAKER_00]: I just had it here, uh, that he must have found some solution for the power because

[00:15:41] [SPEAKER_00]: the utility company said that by August xAI would have access to around 50 megawatts,

[00:15:46] [SPEAKER_00]: which is only enough to power 50,000 chips.

[00:15:49] [SPEAKER_00]: An upcoming substation would come online with another 150 megawatts,

[00:15:55] [SPEAKER_00]: which would be enough for a hundred thousand chips, but not until 2025.

[00:15:58] [SPEAKER_00]: And so what they say, The Information, is Musk apparently found a short-term solution to

[00:16:04] [SPEAKER_00]: make up the difference, bringing in natural gas powered turbines.

[00:16:09] [SPEAKER_00]: And a group of environmentalists recently wrote a letter to the local health department

[00:16:13] [SPEAKER_00]: that regulates air polluting facilities alleging that he's operating 20 gas combustion turbines

[00:16:19] [SPEAKER_00]: without proper permits.

[00:16:21] [SPEAKER_00]: Oh boy.

[00:16:22] [SPEAKER_00]: Um, yeah, but to what end?

[00:16:25] [SPEAKER_00]: So this is the other point.

[00:16:26] [SPEAKER_00]: I think what we see happening in the discussion around models is they're getting smaller.

[00:16:32] [SPEAKER_00]: They're getting more efficient.

[00:16:33] [SPEAKER_00]: They've run out of content for training.

[00:16:37] [SPEAKER_00]: So what does this "mine is bigger, size matters" really accomplish in the ability of the model that he may create?

[00:16:47] [SPEAKER_00]: Color me once again, dubious.

[00:16:49] [SPEAKER_01]: I mean, other than other than being able to say, look, here's another avenue that I have set my sights on.

[00:16:56] [SPEAKER_01]: I want to be the biggest and the best in AI because that's where everyone else is right now.

[00:17:01] [SPEAKER_01]: You know, it was just last year, April 2023, Musk reportedly bought a large amount of GPUs, somewhere around 10,000,

[00:17:08] [SPEAKER_01]: which I guess, in the grand scheme of 100,000, is not that much.

[00:17:11] [SPEAKER_01]: But still, it's easy to see that that was probably at least a component of this. Musk, at one point,

[00:17:20] [SPEAKER_01]: you know, and not too very long ago, went public with the fact that, like, all right, AI is the next thing.

[00:17:27] [SPEAKER_01]: The next big thing that I want to be involved with and I'm going to be the biggest and the best.

[00:17:31] [SPEAKER_01]: And I think that's yeah, that's probably his, his prime directive is how can I be the biggest and the best, the fast faster than anyone else?

[00:17:40] [SPEAKER_01]: Yeah.

[00:17:41] [SPEAKER_00]: Yeah.

[00:17:42] [SPEAKER_00]: And I don't think it's all about the hardware.

[00:17:44] [SPEAKER_00]: That's what he thinks is hardware, right?

[00:17:47] [SPEAKER_00]: AI is not. Yeah, you can have the chips, but what's your underlying software?

[00:17:54] [SPEAKER_00]: What's the training set and what is it you're going to have it do?

[00:17:58] [SPEAKER_00]: Yeah, if it's going to just make up fake pictures of Kamala Harris as a communist, not worth all the effort, Elon.

[00:18:07] [SPEAKER_00]: You know, you could do that with a paintbrush.

[00:18:09] [SPEAKER_00]: A lot of other ways.

[00:18:11] [SPEAKER_00]: Right.

[00:18:11] [SPEAKER_00]: And so I just don't get, I don't see the strategy here.

[00:18:15] [SPEAKER_00]: I never have with him, except he's miffed about OpenAI.

[00:18:20] [SPEAKER_00]: He left in a hissy fit.

[00:18:23] [SPEAKER_00]: And so he's trying to say that I'll outdo them, but I don't think he has a strategy.

[00:18:28] [SPEAKER_01]: Yeah.

[00:18:29] [SPEAKER_01]: Yeah.

[00:18:29] [SPEAKER_01]: Maybe there's a little bit of like a revenge play in there to a certain degree.

[00:18:34] [SPEAKER_01]: And, you know, under understanding what we've seen of his kind of demonstration of ego.

[00:18:41] [SPEAKER_01]: Yes.

[00:18:41] [SPEAKER_01]: Most of what he does in a public sense.

[00:18:44] [SPEAKER_01]: That doesn't surprise me at all.

[00:18:45] [SPEAKER_01]: Speaking of OpenAI, it's reportedly planning to develop its own AI chips utilizing TSMC's 1.6 nanometer A16 process node that is supposedly coming soon.

[00:18:59] [SPEAKER_01]: Mass production scheduled to begin second half of 2026 might actually be partnering with Apple, Broadcom and Marvell for, for the chip design.

[00:19:11] [SPEAKER_01]: And ultimately this is at least according to the article here really aimed at limiting or reducing reliance on NVIDIA AI servers as it currently does.

[00:19:24] [SPEAKER_01]: And I mean, OpenAI is big enough to, I'm sure, have ambitions to own the entire stack at some point, in some way, shape or form, as I'm sure they all do.

[00:19:35] [SPEAKER_01]: But it's going to take time and resources to make that happen.

[00:19:39] [SPEAKER_01]: So seems like that's their plan.

[00:19:41] [SPEAKER_00]: And there are a lot of stories. The Wall Street Journal wrote a story this week, or maybe it was The Times, looking at the various threats to NVIDIA's hegemony over chips.

[00:19:50] [SPEAKER_00]: So I think people don't want to be strangled. They don't want a shortage. They do want their own thing. We saw the same thing happen in phones.

[00:19:56] [SPEAKER_00]: We saw the same thing happen in computers.

[00:19:58] [SPEAKER_00]: And so it's not surprising that they would do this.

[00:20:01] [SPEAKER_00]: NVIDIA, I just looked at NVIDIA stock.

[00:20:04] [SPEAKER_00]: It led the downhill track among the techs yesterday.

[00:20:09] [SPEAKER_00]: Last Wednesday it was at 128, and then yesterday, at its low,

[00:20:15] [SPEAKER_00]: it was at 105. It's back up a percent today to 109.

[00:20:20] [SPEAKER_00]: So, you know, it takes a hit, but if you look at it, it's a literal roller coaster over.

[00:20:25] [SPEAKER_00]: It went up and then down in an early part of August.

[00:20:29] [SPEAKER_00]: It came back up again now.

[00:20:30] [SPEAKER_00]: It's going back down again.

[00:20:31] [SPEAKER_00]: And I think that's what it's going to be in this world until we figure out not just who's the winner in these chips,

[00:20:39] [SPEAKER_00]: but is there a sustainable industry market for them?

[00:20:43] [SPEAKER_00]: Presumption right now is yes.

[00:20:44] [SPEAKER_00]: There is demand for them.

[00:20:45] [SPEAKER_00]: They are in shortage. No question there, but I don't think we yet understand the full future of the industry.

[00:20:51] [SPEAKER_00]: So it'll be interesting to watch what other competitors come in.

[00:20:54] [SPEAKER_00]: Meanwhile, Intel's about to collapse completely.

[00:21:00] [SPEAKER_00]: And so I think that in some ways they're going to be out of this market more than we would have predicted two years ago.

[00:21:06] [SPEAKER_00]: And who else is in it?

[00:21:08] [SPEAKER_00]: I don't know the chip market very well.

[00:21:09] [SPEAKER_00]: Again, Stacey Higginbotham does.

[00:21:13] [SPEAKER_00]: We should have a rather long list.

[00:21:14] [SPEAKER_01]: We should bring Stacey Higginbotham on this show.

[00:21:15] [SPEAKER_00]: I think we do.

[00:21:17] [SPEAKER_01]: That would be fun actually.

[00:21:19] [SPEAKER_00]: Bring back her chip memory.

[00:21:23] [SPEAKER_00]: But yeah, so we'll see what happens here.

[00:21:25] [SPEAKER_00]: If I were an investor in OpenAI, again, I don't know where is the true unique value going to be?

[00:21:35] [SPEAKER_00]: Is it in the hardware or is it in the software?

[00:21:37] [SPEAKER_00]: Same discussion.

[00:21:38] [SPEAKER_00]: We just had the last story.

[00:21:40] [SPEAKER_00]: So I think in the long run it's going to be in the software.

[00:21:44] [SPEAKER_01]: Yeah, that's my hunch too.

[00:21:47] [SPEAKER_01]: Yeah, interesting.

[00:21:50] [SPEAKER_01]: This is a more fun than chips story because chips sometimes feel a little dry.

[00:21:58] [SPEAKER_01]: It's like carburetors.

[00:22:00] [SPEAKER_01]: You know, there's only so much you can say, right?

[00:22:02] [SPEAKER_01]: There's only so much you could say.

[00:22:04] [SPEAKER_01]: Although there are some people they could talk for hours about chips and I just, I don't understand.

[00:22:08] [SPEAKER_01]: I appreciate that they can do that, but that is totally not me.

[00:22:12] [SPEAKER_01]: But you know what?

[00:22:12] [SPEAKER_01]: Something that I can talk a lot about is the topic of creativity, and NaNoWriMo is something that I've been aware of for a very long time.

[00:22:21] [SPEAKER_01]: I've never participated in it, but I know my friend, our friend Tom Merritt has done NaNoWriMo every year for a very long time.

[00:22:30] [SPEAKER_01]: What is it?

[00:22:31] [SPEAKER_01]: It's the National Novel Writing Month.

[00:22:34] [SPEAKER_01]: It's an organization that really is designed around the idea that every November they put out a challenge for people to write a 50,000 word manuscript during the month.

[00:22:47] [SPEAKER_01]: And it's really just an opportunity if you participate to explore what it's like to write something significant like that.

[00:22:56] [SPEAKER_01]: 50,000 words, no joke.

[00:22:57] [SPEAKER_01]: And so it's a cool challenge to get people writing.

[00:23:02] [SPEAKER_01]: Well, on Saturday the organization...

[00:23:05] [SPEAKER_01]: Oh yeah, how do you feel?

[00:23:06] [SPEAKER_01]: First of all, what is your take on NaNoWriMo as someone who has written many books?

[00:23:11] [SPEAKER_01]: I'm curious.

[00:23:11] [SPEAKER_00]: I never, well I actually did write a novel once.

[00:23:14] [SPEAKER_00]: Nobody knows this.

[00:23:15] [SPEAKER_00]: I wrote a novel.

[00:23:15] [SPEAKER_00]: Yeah, I guess novel versus yeah, different things.

[00:23:18] [SPEAKER_00]: Well, thank goodness no one published it.

[00:23:22] [SPEAKER_00]: It's sitting up on a shelf up there and when I die I won't know the laughter at me so my kids can burn it or whatever.

[00:23:29] [SPEAKER_01]: They'll open it up, flip a couple of pages be like alright next.

[00:23:32] [SPEAKER_01]: It's terrible.

[00:23:33] [SPEAKER_00]: It's terrible.

[00:23:34] [SPEAKER_00]: I haven't had the courage to open it.

[00:23:37] [SPEAKER_00]: So, and right now I'm trying to write my book about the Linotype, and it's, you know, a paragraph at a time.

[00:23:41] [SPEAKER_00]: I can't imagine doing 50,000 words in a month.

[00:23:45] [SPEAKER_00]: But going into the story that you're about to put out, my overarching attitude is yeah, it's not going to be James Joyce.

[00:23:55] [SPEAKER_00]: But who are we to think that writing and expression is to be limited to a small group of people.

[00:24:04] [SPEAKER_00]: And I'm generally about the democratization of creativity and voice.

[00:24:08] [SPEAKER_00]: And so in general, snobs will make fun of this, but I will try not to. Now, having said that.

[00:24:16] [SPEAKER_01]: Now, having said that. Well, so on Saturday the organization released its perspective on the use of AI for

[00:24:24] [SPEAKER_01]: writing. It did not, in this perspective that it shared, explicitly support or refute the technology. And I think a lot of,

[00:24:33] [SPEAKER_01]: we'll just say a lot of, like, self-proclaimed, like, actual writers, or whatever you want to call them, authors,

[00:24:42] [SPEAKER_01]: probably had hoped that NaNoWriMo would come out against AI and the kind of, you know, the approach of using AI for writing.

[00:24:51] [SPEAKER_01]: NaNoWriMo said, quote, we believe that to categorically condemn AI would be to ignore classist and ableist issues surrounding the use of the technology,

[00:25:03] [SPEAKER_01]: and that questions around the use of AI tie to questions around privilege.

[00:25:10] [SPEAKER_01]: So definitely taking a somewhat of an ethical stance with you know what they're saying around AI and again, they're not saying

[00:25:19] [SPEAKER_01]: they're for or against it, they're just saying there's no need to say all of it is bad, none of it is good. Like when you know as far as

[00:25:31] [SPEAKER_01]: they're spelling out in their in their post, there are you know opportunities for people who might not be able to write without an

[00:25:42] [SPEAKER_01]: assistive kind of technology like AI just as one example there are there are reasons why maybe you don't want to use it and there are reasons

[00:25:49] [SPEAKER_01]: why you might want to and that was I think their point but man people people were not happy with it.

[00:25:56] [SPEAKER_00]: And they reacted I think there's there's something to this that fascinates me so I may have discussed this in the show before

[00:26:04] [SPEAKER_00]: but when ChatGPT came out one of my first speculations was that I was looking for, you know, how could it be useful.

[00:26:12] [SPEAKER_00]: One thing I said at the time was perhaps it can be useful to help people tell their own stories and that it might expand literacy in the

[00:26:21] [SPEAKER_00]: sense that those who are intimidated by writing, which is most people, and I do it for a living, you do it too.

[00:26:26] [SPEAKER_00]: But most people hate writing anything from a letter to a blog post to a paper for school to God knows a novel.

[00:26:34] [SPEAKER_00]: So I thought, well, this could expand literacy, help people tell their stories, that's a good thing.

[00:26:39] [SPEAKER_00]: Then I had two of the executive students in the program I started at CUNY who said whoa there white fella one of them runs a

[00:26:49] [SPEAKER_00]: news site for First Nations people in Canada the other one runs a news site for people who are incarcerated and they both said

[00:26:55] [SPEAKER_00]: and they were quite right.

[00:26:57] [SPEAKER_00]: Do you really want to homogenize these unique voices into this pasteurized kind of language that comes out of AI that is based on

[00:27:09] [SPEAKER_00]: the language of those who had the power to publish in the past.

[00:27:15] [SPEAKER_00]: So you're going to continue the kind of power structure of language from the past.

[00:27:21] [SPEAKER_00]: Right.

[00:27:23] [SPEAKER_00]: I agree.

[00:27:25] [SPEAKER_00]: However, we also know that in some cases people so you're in essence it enforces code switching that you have to write in this way now I

[00:27:37] [SPEAKER_00]: also believe that maybe we shouldn't in journalism school even teach classic white English as the only form of English.

[00:27:46] [SPEAKER_00]: There are many dialects of English that matter greatly and bring more authority in their communities, plural.

[00:27:57] [SPEAKER_00]: And so I respect those. However, there are cases when you want to get a job with the big company, you want to go to work for

[00:28:04] [SPEAKER_00]: McKinsey God help you and you're going to write a letter that McKinsey is going to understand in their language not yours.

[00:28:12] [SPEAKER_00]: So you add all that together and I think I want to have it both ways.

[00:28:19] [SPEAKER_00]: I want to say that there is value in helping people to be able to create however they want to create and putting them in charge of that

[00:28:25] [SPEAKER_00]: saying to the AI no that's not what I meant no don't say it this way.

[00:28:31] [SPEAKER_00]: They're the judge of whether it's successful or not and they're responsible for it as well if it lies it's on them for having asked for it

[00:28:39] [SPEAKER_00]: and having gotten it.

[00:28:43] [SPEAKER_00]: On the other hand I've had students who've come from other countries for whom English is not their first or even their second language

[00:28:51] [SPEAKER_00]: and I'm an idiot American who speaks 1.1 languages, the point one being really bad German.

[00:28:56] [SPEAKER_00]: And so I respect them immensely but I know what happens: they come over, they feel intimidated writing in English, and to be able to use

[00:29:03] [SPEAKER_00]: AI to smooth out that English to code switch for people who are less tolerant in America of lapses we would say in English is really useful

[00:29:14] [SPEAKER_00]: and really important.

[00:29:15] [SPEAKER_00]: And so to cut people off from these tools as long as they are in their control.

[00:29:22] [SPEAKER_00]: I think I agree with the original statement of NaNoWriMo, which sounds funny to me, that it is in a sense ableist.

[00:29:33] [SPEAKER_00]: It is discriminatory because you're taking that tool away and I mentioned in the show last week and I'm going to mention it a lot as we go forward.

[00:29:41] [SPEAKER_00]: I've written a syllabus for a course at another university in AI and creativity.

[00:29:46] [SPEAKER_00]: And this is one of the things I want the students to address and to face.

[00:29:50] [SPEAKER_00]: Does it help them express themselves the way they want to express with authority or does it kind of nudge them away from what they would say on their own.

[00:30:00] [SPEAKER_00]: Right.

[00:30:01] [SPEAKER_00]: And how much are they in control of it. How much is it a tool.

[00:30:05] [SPEAKER_00]: Now, I'll end it here in a second, but if you choose to paint you are limited by the brushes you use and the paints you use and they all bring limitations.

[00:30:17] [SPEAKER_00]: If you choose instead to do an engraving, well, that brings other limitations.

[00:30:22] [SPEAKER_00]: If you as a musician choose to use a guitar to do a song versus a bassoon to do a song right.

[00:30:30] [SPEAKER_00]: They bring different things that you are only in so much control of. Sure.

[00:30:36] [SPEAKER_00]: And so this is a discussion we'll have with Lev Manovich in, I think, two weeks, who looks at AI and creativity in these ways.

[00:30:44] [SPEAKER_00]: So it'll be interesting actually to bring this story up with him even though he deals with visual arts but he argues that AI does not have a control.

[00:30:51] [SPEAKER_00]: It does have an aesthetic built in but that it is a very useful tool for creativity.

[00:30:56] [SPEAKER_00]: And so if you say to people who want to be creative you can't use that that's cheating.

[00:31:01] [SPEAKER_00]: It's like taking a knife away from an engraver.

[00:31:06] [SPEAKER_01]: Yeah.

[00:31:07] [SPEAKER_01]: It's yet another yet another tool.

[00:31:10] [SPEAKER_01]: And we've definitely talked about this and you know something like using AI for writing.

[00:31:17] [SPEAKER_01]: You know there's a lot of different kind of levels and variations within that kind of blanket statement how how how much are you relying on.

[00:31:27] [SPEAKER_01]: Are you saying write me a story.

[00:31:30] [SPEAKER_01]: Here's my story submitting or are you you know on the opposite side do you have your manuscript in a in a in a doc and it's telling you that you spelled their wrong and it's asking you to correct.

[00:31:42] [SPEAKER_01]: Like like are we talking about pure algorithmic stuff or are we talking about generating the entire thing top to bottom and there are differences there.

[00:31:51] [SPEAKER_01]: And they all you know depending on how you define AI you know fall into the confines of this algorithmic correction and and everything and I think it's impossible actually this this relates exactly with one of the quotes that caught my eye.

[00:32:06] [SPEAKER_01]: When they said we want to make clear that AI is a large umbrella technology the size and complexity of that category which includes both non generative and generative AI among other uses contributes to our belief that it is simply too big to categorically endorse or

[00:32:22] [SPEAKER_01]: or non endorse and I think that's that's right at the core of kind of how I how I feel about a lot of this technology and I know that wasn't what writers you know who I mean I think there were some writers that actually stepped down from the writers board or

[00:32:37] [SPEAKER_01]: at the organization because of this immediately and very vocally you know that might not be what they wanted to hear because there is that immediate reaction of if it's AI and it's writing something then that is bad and I you know and I don't I don't begrudge people for for holding that belief but I do think that the you know the making the statement that no matter

[00:33:01] [SPEAKER_01]: what if it if it's AI then throw it out like I think that that's a blunt object on something that has a lot of variability within it. So I'm trying to find it right now and I probably won't on the fly but the

[00:33:16] [SPEAKER_00]: MLA-CCCC task force on writing, Modern Language Association, and I forget what the CCCC is,

[00:33:25] [SPEAKER_00]: College Composition and Communication, no, Conference on College, conference, there's the four C's,

[00:33:33] [SPEAKER_00]: so I haven't found the paper itself yet but they had a very good paper. My friend Matthew Kirschenbaum, University of Maryland, was a member of the task force and they just said there's many tools that come before,

[00:33:45] [SPEAKER_00]: the printing press was a tool spell check is a tool we've gotten used to these things in the past this is a tool to be used so if English professors are not scared of it then I think that a novel contest shouldn't be I guess I get it though Jason is somebody who says oh I

[00:34:03] [SPEAKER_00]: was sweating sweat and blood over my 50,000 words and you used a machine right yeah is it the steroids of writing and the creativity I don't know that's a fun story I'm really glad you put that one in there I had not I had not seen that one. Yeah it definitely caught my attention.

[00:34:22] [SPEAKER_01]: Oh zone thank you so much for the super thanks as this has been the biggest problem with the destroy AI folks that stance ignores any positive uses of the technology which is an immensely limiting view and I think we're kind of in a similar camp with you so thank you for that

[00:34:40] [SPEAKER_01]: as usual as usual yes indeed alright we're going to take a quick break when we come back we've got more to talk about this the latter half of the show has more fun stories as well coming in a second what no chips.

[00:34:59] [SPEAKER_01]: Alright so unfortunately, to Jeff's disappointment, we aren't going to be talking about chips in the second half of the show, I'm sorry, but no, we're going to talk about the fun of elections and AI's impact on the elections or lack thereof. There's an MIT Technology Review opinion piece that takes a look at

[00:35:18] [SPEAKER_01]: the AI's impact on you know we've heard a lot about it right like a generative AI it's going to it's going to be such a huge influential force in this in this coming election and elections around the world and everything and I'm not saying that it hasn't happened to some

[00:35:34] [SPEAKER_01]: degree but I think the fear is that it was going to completely derail everything, and this opinion piece attempts to demonstrate how the predictions about AI's influence on elections have so far been exaggerated. But I'll just kind of stop there because you wrote about this on LinkedIn as well, as kind of a summary of it, so tell me a little bit about where you're at on this.

[00:36:00] [SPEAKER_00]: So this paper comes from, or this piece comes from, scholars I respect. Felix Simon is now, I think he's in charge of research at the Reuters Institute for the Study of Journalism at Oxford University.

[00:36:12] [SPEAKER_00]: Keegan McBride is a professor of AI, government, and policy at the Oxford Internet Institute and Sacha Altay is a research fellow at the University of Zurich, so the real deal, folks.

[00:36:25] [SPEAKER_00]: And what they tried to do was look at the fear that had come out about the impact of AI on elections and then puts forth I think what is not specific to this but is but was true of AI and is true of other fears about manipulation of the public as a whole.

[00:36:44] [SPEAKER_00]: And so it's really about media theory to a great extent, so they list a few reasons why we shouldn't be worried. The first is that mass persuasion is notoriously challenging.

[00:36:54] [SPEAKER_00]: The presumption has been through years of media study that there's this idea of the hypodermic model, that if we just tell these people stuff they're going to believe it and it's going to change their behavior, and it is much, much tougher.

[00:37:06] [SPEAKER_00]: People have agency and that ignores their agency, treats them like a mass. Second, for a piece of content to be influential, I'm quoting now, it must first reach its intended audience, and today a tsunami of information is published daily by individuals, political campaigns, news organizations

[00:37:24] [SPEAKER_00]: and others consequently AI generated material like any other content faces significant challenges cutting through that noise. There's just a lot of speech going on there and it's just one more speaker.

[00:37:36] [SPEAKER_00]: Third, emerging research challenges the idea that using AI to microtarget people and sway their voting behavior, this is really interesting, works as well as initially feared. Voters seem to not only recognize excessively tailored messages but actively dislike them.

[00:37:52] [SPEAKER_00]: This goes to creepiness and surveillance capitalism and your targeting right even though I will mock that view of surveillance.

[00:38:02] [SPEAKER_00]: It's true that it's gotten real cooties out there in society and people don't want to feel that seen. And so if you use AI for that purpose.

[00:38:11] [SPEAKER_00]: It's not going to work very well so you've just lost that supposed power of it. Fourth, pardon me, voting behavior is shaped by a complex nexus of factors. And this is critical. My friend Siva Vaidhyanathan, who's a media scholar at the University of Virginia, constantly screams about this, that people think that they can look at the impact of just one

[00:38:32] [SPEAKER_00]: Twitter, just Twitter, or just AI, or just Russia, and see that in isolation of everything else in someone's life. Other technology, other media, other influences like friends and family, background, all of these things have a part.

[00:38:52] [SPEAKER_00]: So to come forward and say that oh my god, AI is going to ruin the election we heard a lot of that talk is at the get go naive.

[00:39:02] [SPEAKER_00]: Same thing I think was true of Russian manipulation in the last election same thing is true of the ills of even Elon Musk and Twitter.

[00:39:11] [SPEAKER_00]: And I as much as I don't like him and what he does how influential is he really. And the other thing that's not in here is that is that what does make it spread is worrying about it is talking about it is quoting it, because you're doing their job for them.

[00:39:26] [SPEAKER_00]: That's the real strategy here, is to get your misinformation spread by those who warn against it because it still has the same effect. It opens up the Overton window and that makes people say, oh, that could be.

[00:39:38] [SPEAKER_00]: And so I haven't thought about that before.

[00:39:41] [SPEAKER_00]: Right. Yeah.

[00:39:43] [SPEAKER_00]: So, and finally, I this is me talking now.

[00:39:47] [SPEAKER_00]: I think we have to at the end result have some faith in some portion of our fellow humanity.

[00:39:54] [SPEAKER_00]: Because if we don't, then that's the third-person effect at work, which I write about in my upcoming book, The Web We Weave, in which we presume that basically everybody else is vulnerable to problems.

[00:40:08] [SPEAKER_00]: And so I think that's the essence of propaganda and pornography and advertising and disinformation, but not me.

[00:40:12] [SPEAKER_00]: I'm okay. I got it.

[00:40:14] [SPEAKER_00]: And that's the essence of snobbishness.

[00:40:18] [SPEAKER_00]: So it was an interesting thing that that it takes again, just AI and disinformation in the election.

[00:40:23] [SPEAKER_00]: But I think this is true of so much about panic about technology and information.

[00:40:34] [SPEAKER_00]: I also, we didn't put it in the rundown, but I also had a snippet of Yuval Noah Harari in the New York Times today, who, you know, when the bots compete for your love.

[00:40:49] [SPEAKER_00]: And he leaps from trope to trope to trope as he does in his books, in my belief where he says that, well, information changes and that threatens democracy.

[00:41:01] [SPEAKER_00]: And information is changing right now and ergo AI can change all this and we're in danger, danger, danger.

[00:41:09] [SPEAKER_00]: So when you see these kinds of moral panic stories, you could have a drink now.

[00:41:13] [SPEAKER_00]: Just kind of keep in mind that what I like about this Oxford-slash-Zurich piece is that it gives you a context, I think, for judging the stuff.

[00:41:24] [SPEAKER_00]: Is it really that easy to convince people of things, even if it's an AI?

[00:41:28] [SPEAKER_00]: Are people really that vulnerable to this?

[00:41:30] [SPEAKER_00]: Is it easy to give that much attention?

[00:41:33] [SPEAKER_00]: What does it take to change behavior?

[00:41:35] [SPEAKER_00]: If we really knew how easy it was to fully change behavior, every advertiser in the world would be rich beyond belief.

[00:41:42] [SPEAKER_00]: They wouldn't have to spend money on every commercial over time.

[00:41:45] [SPEAKER_00]: However, we would love you to put ads in this show because it'll work here.

[00:41:50] [SPEAKER_01]: Yeah, here, here it would definitely work.

[00:41:53] [SPEAKER_01]: Yeah.

[00:41:53] [SPEAKER_01]: We've got close to a thousand people watching the live stream right now.

[00:41:56] [SPEAKER_01]: So come on, come at us.

[00:41:58] [SPEAKER_01]: Let's do it.

[00:41:59] [SPEAKER_01]: Interesting.

[00:42:00] [SPEAKER_01]: You're our sheep.

[00:42:04] [SPEAKER_01]: Thank you, Jeff.

[00:42:05] [SPEAKER_01]: That's awesome.

[00:42:06] [SPEAKER_01]: I'm happy you put that in there.

[00:42:09] [SPEAKER_01]: Let's see here.

[00:42:10] [SPEAKER_01]: And I found this one interesting.

[00:42:11] [SPEAKER_01]: I think you put this story in there too.

[00:42:13] [SPEAKER_01]: And I'm super curious to talk about this one, talk about kind of creativity and how it intersects with artificial intelligence.

[00:42:21] [SPEAKER_01]: We have this article on a16z, which is Andreessen Horowitz, on that site.

[00:42:30] [SPEAKER_01]: But it's a post by Jonathan Lai that dives into pretty deep detail around the potential of AI to combine with film and games in the future.

[00:42:41] [SPEAKER_01]: And ultimately just kind of takes a look at where entertainment is headed when you have kind of this kind of modern generative AI moment in the mix and where things are right now when it comes to modern entertainment versus traditional entertainment.

[00:43:02] [SPEAKER_01]: You know, reading, music, radio, podcasts, broadcast TV, seen here in traditional entertainment.

[00:43:09] [SPEAKER_01]: And as you can see, you know, the boomers that occupies a lot of space as it goes down to millennials, Gen Z, Gen Alpha, it's a much smaller space.

[00:43:18] [SPEAKER_01]: What really takes up that space are things like streaming movies, series, social networks, and the largest portion for Gen Alpha especially, 22%.

[00:43:28] [SPEAKER_01]: It says video games and it dives into, you know, it covers a lot of ground.

[00:43:33] [SPEAKER_01]: The article in total is very interesting.

[00:43:36] [SPEAKER_01]: It talks a little bit about, you know, kind of the traditional gaming approach being very predetermined, rendered through a graphics pipeline preloaded with assets, deterministic.

[00:43:48] [SPEAKER_01]: How interactive video is, you know, you don't need assets because it's just really derived on the fly from prompts.

[00:43:56] [SPEAKER_01]: Those frames are generated in real time by an AI model.

[00:44:00] [SPEAKER_01]: The gameplay is infinite and probabilistic. And it also takes a look at kind of Netflix's experience, or experiments rather, in interactive storytelling with Bandersnatch, which was a Black Mirror experiment,

[00:44:15] [SPEAKER_01]: which I still have yet to see, which is strange because I love Black Mirror, but there's something about the interactive model that turns me off.

[00:44:21] [SPEAKER_01]: So I found this interesting because like that's the reason I haven't watched Bandersnatch.

[00:44:27] [SPEAKER_01]: And yet this article is kind of making the case that as AI intersects with entertainment as we go forward, it's almost like they're saying that we're going to opt more for interactive viewing experiences as opposed to kind of the detached sit back on the couch and watch it.

[00:44:51] [SPEAKER_01]: Or am I misunderstanding it? What's your...

[00:44:53] [SPEAKER_00]: No, I think you got their perspective right.

[00:44:56] [SPEAKER_00]: Yeah, something else struck me about this piece and it really goes back to the NaNoWriMo story.

[00:45:02] [SPEAKER_00]: It's like it's so hard to say.

[00:45:03] [SPEAKER_00]: Yeah, you got it.

[00:45:04] [SPEAKER_00]: NaNoWriMo.

[00:45:06] [SPEAKER_00]: NaNoWriMo.

[00:45:07] [SPEAKER_00]: Let me, if you go to that chart again, I think that there is a snobbishness slope

[00:45:21] [SPEAKER_00]: that to bring in this new tool in AI into things, in novels and reading,

[00:45:27] [SPEAKER_00]: when we saw what the reaction is, oh my God, you can't touch novels and novels are sacred.

[00:45:31] [SPEAKER_00]: Oh my God, don't do that.

[00:45:32] [SPEAKER_00]: That's wrong.

[00:45:36] [SPEAKER_00]: To do it in movies? That's cinema.

[00:45:42] [SPEAKER_00]: How dare you?

[00:45:42] [SPEAKER_00]: Even though AI is there in all kinds of ways creating images for us,

[00:45:46] [SPEAKER_00]: but it's still...

[00:45:48] [SPEAKER_00]: That's in the hands of the real pros and if they can fool us enough, we don't know it.

[00:45:51] [SPEAKER_00]: So there it's okay.

[00:45:53] [SPEAKER_01]: Well, TV...

[00:45:54] [SPEAKER_01]: I mean, there have been some examples where Generative AI has been used in cinema to create like backdrop like posters

[00:46:00] [SPEAKER_01]: and people flip their lids and it was like a single poster in the back.

[00:46:03] [SPEAKER_01]: Yes, yes.

[00:46:04] [SPEAKER_00]: Well, it's also a labor thing too, right?

[00:46:06] [SPEAKER_00]: Right, yeah, absolutely.

[00:46:08] [SPEAKER_00]: Yeah, right.

[00:46:08] [SPEAKER_00]: And TV, we're a little less snobby.

[00:46:10] [SPEAKER_00]: But I think part of this is that where the society as a whole, the culture as a whole,

[00:46:14] [SPEAKER_00]: is the least snobbish about video games because old farts like me play it less.

[00:46:20] [SPEAKER_00]: And the old structures of cultural judgment just aren't as much at play

[00:46:28] [SPEAKER_00]: because they are there to please people, to please and you want to play them

[00:46:31] [SPEAKER_00]: and they're already made with weird graphics and they're made to be otherworldly.

[00:46:36] [SPEAKER_00]: Right.

[00:46:37] [SPEAKER_00]: And so I think that for AI to enter in not just in the creation, but also as an active part

[00:46:43] [SPEAKER_00]: of the play in video games, it makes to me a lot of sense that that's the entry point.

[00:46:49] [SPEAKER_00]: That's the wedge and we'll see it there first.

[00:46:54] [SPEAKER_00]: For my book The Gutenberg Parenthesis, second plug,

[00:47:00] [SPEAKER_00]: it really started as a book about mass, about mass media and all that.

[00:47:03] [SPEAKER_00]: That's a book I still want to write in the future.

[00:47:05] [SPEAKER_00]: But there's a chapter in there about that where I looked at the sociology of mass culture

[00:47:11] [SPEAKER_00]: and that when radio came along and TV came along and film was there,

[00:47:15] [SPEAKER_00]: there was a lot of tumult in the cultural world of, oh my God, this is the ruin.

[00:47:20] [SPEAKER_00]: We had books and everything was okay.

[00:47:22] [SPEAKER_00]: But if you go back farther, people have fits about novels

[00:47:25] [SPEAKER_00]: and this is going to ruin especially women and children.

[00:47:27] [SPEAKER_00]: So with every new technology, there's a protection that occurs

[00:47:32] [SPEAKER_00]: of the culture that existed before and looking down your nose

[00:47:36] [SPEAKER_00]: at the new culture that comes along.

[00:47:39] [SPEAKER_00]: And the newest...

[00:47:40] [SPEAKER_01]: There's a comfort in what existed before.

[00:47:42] [SPEAKER_00]: Yes.

[00:47:42] [SPEAKER_00]: And so the newest is the safest place to play.

[00:47:46] [SPEAKER_00]: And it's younger people, it's new.

[00:47:48] [SPEAKER_00]: The snobs don't really engage in it.

[00:47:51] [SPEAKER_00]: So I think we're going to see tremendous creativity in games.

[00:47:55] [SPEAKER_00]: My only regret is I'm not a gamer so I'm missing it.

[00:47:58] [SPEAKER_00]: I don't get to see it and you can't do it on a Chromebook.

[00:48:01] So...

[00:48:03] [SPEAKER_01]: Someday, someday you'll get your wish.

[00:48:05] [SPEAKER_01]: You'll get it on a Chromebook and it'll be part of Google's Enterprise plan.

[00:48:09] [SPEAKER_01]: So you'll get that aspect too.

[00:48:13] [SPEAKER_01]: Maybe, maybe, I don't know.

[00:48:15] [SPEAKER_01]: Yeah, that's interesting.

[00:48:17] [SPEAKER_01]: I mean just taking a look at that graph and just seeing how,

[00:48:20] [SPEAKER_01]: at least according to this graph anyways,

[00:48:23] [SPEAKER_01]: how that gaming aspect continues to increase

[00:48:27] [SPEAKER_01]: and increase with every generation, Gen Alpha.

[00:48:31] [SPEAKER_01]: That's a pretty sizable chunk.

[00:48:34] [SPEAKER_01]: I was a gamer back in the day.

[00:48:36] [SPEAKER_01]: I'm not much anymore.

[00:48:38] [SPEAKER_01]: It's a matter of time.

[00:48:39] [SPEAKER_01]: So maybe it is the sort of thing that as we get older

[00:48:41] [SPEAKER_01]: it becomes less and less possible or easy to make the time for gaming.

[00:48:48] [SPEAKER_01]: But maybe that changes as they get older

[00:48:51] [SPEAKER_01]: and especially if the predominant entertainment kind of approach

[00:48:56] [SPEAKER_01]: as I feel like this article is kind of making the case for

[00:49:00] [SPEAKER_01]: does shift towards interactive media consumption.

[00:49:05] [SPEAKER_01]: And I'm just not convinced that the majority of people,

[00:49:10] [SPEAKER_01]: and I could be completely off base and completely wrong

[00:49:12] [SPEAKER_01]: but that the majority of people are going to want to interact

[00:49:16] [SPEAKER_01]: with that media all the time.

[00:49:19] [SPEAKER_00]: Right, I think that's where a16z,

[00:49:21] [SPEAKER_00]: Andreessen Horowitz, was going and I agree with you.

[00:49:24] [SPEAKER_00]: It's like speaker phones,

[00:49:26] [SPEAKER_00]: the speaker phones listen to me, old men,

[00:49:29] [SPEAKER_00]: like Madam A.

[00:49:33] [SPEAKER_00]: And is that form of interaction what you want?

[00:49:36] [SPEAKER_00]: Interestingly in the comments too Rob Collins cautions me

[00:49:38] [SPEAKER_00]: and says that gamers are very gatekeeping

[00:49:41] [SPEAKER_00]: and just as snobbish in their way,

[00:49:43] [SPEAKER_00]: which is really interesting.

[00:49:44] [SPEAKER_00]: Again, I'm not a gamer so I don't have the ethos

[00:49:49] [SPEAKER_00]: of that community very well.

[00:49:51] [SPEAKER_00]: But I think from the snobs perspective,

[00:49:53] [SPEAKER_00]: which world I know better,

[00:49:56] [SPEAKER_00]: they're snobbish about games in part.

[00:49:59] [SPEAKER_00]: So we'll see whether what this obviously means is

[00:50:01] [SPEAKER_00]: Andreessen Horowitz is betting on this

[00:50:03] [SPEAKER_00]: and investing in this as a business justification for AI.

[00:50:07] [SPEAKER_01]: So we'll see if they're right.

[00:50:09] [SPEAKER_01]: Agreed, yeah, I understand the source of this article.

[00:50:13] [SPEAKER_01]: They've got investments for sure in this industry.

[00:50:18] [SPEAKER_01]: And then speaking of creativity,

[00:50:20] [SPEAKER_01]: there's not probably a whole lot to say about this

[00:50:23] [SPEAKER_01]: other than hey, there's a new movie that's out

[00:50:25] [SPEAKER_01]: that has to do with AI being the boogeyman.

[00:50:28] [SPEAKER_01]: I think what I find and what is it called

[00:50:30] [SPEAKER_01]: it's called AfrAId, spelled A-F-R

[00:50:32] [SPEAKER_01]: and then capital A-I, D,

[00:50:35] [SPEAKER_01]: which is your traditional at this point take

[00:50:39] [SPEAKER_01]: of an AI home assistant becoming a villain,

[00:50:44] [SPEAKER_01]: becoming evil.

[00:50:45] [SPEAKER_01]: And I think what I find just kind of interesting

[00:50:48] [SPEAKER_01]: about this is that there are so many moments in time

[00:50:51] [SPEAKER_01]: and you started Entertainment Weekly

[00:50:54] [SPEAKER_01]: so you could probably describe this better than I could.

[00:50:56] [SPEAKER_01]: But moments in time where the boogeyman of the day

[00:51:00] [SPEAKER_01]: suddenly gets a lot of attention in media

[00:51:03] [SPEAKER_01]: and film and everything.

[00:51:04] [SPEAKER_01]: I remember in the 80s it was Cold War anxiety

[00:51:08] [SPEAKER_01]: and that fueled so much of film and TV at the time.

[00:51:13] [SPEAKER_01]: And right now it seems to be apparently AI

[00:51:15] [SPEAKER_01]: and I guess it makes sense

[00:51:17] [SPEAKER_01]: because there's a lot of anxiety around AI.

[00:51:19] [SPEAKER_01]: Last year there was M3GAN,

[00:51:20] [SPEAKER_01]: which was another kind of AI inspired horror film

[00:51:23] [SPEAKER_01]: and everything.

[00:51:24] [SPEAKER_00]: Which you know what the hell is horror movies?

[00:51:27] [SPEAKER_00]: It is, but I think that there is potentially

[00:51:30] [SPEAKER_00]: I won't say a danger but an impact to them.

[00:51:33] [SPEAKER_00]: And I think it's an extension of moral panic.

[00:51:35] [SPEAKER_00]: I'll have another drink folks.

[00:51:40] [SPEAKER_00]: Because it can become part of the conversation

[00:51:43] [SPEAKER_00]: in a way that says well this could happen,

[00:51:45] [SPEAKER_00]: oh my God it could be this.

[00:51:46] [SPEAKER_00]: It sets a tone about it.

[00:51:48] [SPEAKER_00]: That says that AI is a bad guy.

[00:51:52] [SPEAKER_00]: And it fits right in with the doomers

[00:51:55] [SPEAKER_00]: and the x-risk people.

[00:51:56] [SPEAKER_00]: Watch out!

[00:51:57] [SPEAKER_00]: It could take over the world

[00:51:58] [SPEAKER_00]: and it fits into that agenda and that worries me.

[00:52:01] [SPEAKER_00]: This is going to sound way off the mark.

[00:52:05] [SPEAKER_00]: But this week there was an investigation

[00:52:07] [SPEAKER_00]: I think by the New York Times and Washington Post

[00:52:09] [SPEAKER_00]: I get the two confused.

[00:52:11] [SPEAKER_00]: About a for profit psychiatric hospital chain

[00:52:16] [SPEAKER_00]: that was keeping people basically locked up

[00:52:18] [SPEAKER_00]: against their will for too long.

[00:52:20] [SPEAKER_00]: It was good reporting.

[00:52:22] [SPEAKER_00]: It's a frightening thing.

[00:52:26] [SPEAKER_00]: And you wonder where the hell is Jeff going with this?

[00:52:29] [SPEAKER_00]: Well we're going to get him locked up

[00:52:30] [SPEAKER_00]: because it makes no sense.

[00:52:30] [SPEAKER_00]: What it ties into to me is the movie

[00:52:33] [SPEAKER_00]: One Flew Over the Cuckoo's Nest, and the book.

[00:52:36] [SPEAKER_00]: Which I think had a real impact in this country

[00:52:39] [SPEAKER_00]: about making people afraid of psychiatric care.

[00:52:44] [SPEAKER_00]: Not that there wasn't necessarily a reason to be

[00:52:46] [SPEAKER_00]: but it was in the fictional form

[00:52:49] [SPEAKER_00]: it made it, I think the laws.

[00:52:52] [SPEAKER_00]: It did.

[00:52:53] [SPEAKER_00]: And laws were passed.

[00:52:54] [SPEAKER_00]: A friend of mine in California had a manic episode

[00:52:57] [SPEAKER_00]: that was a bipolar episode that was really bad.

[00:52:59] [SPEAKER_00]: His partner and I tried to get him committed

[00:53:02] [SPEAKER_00]: and it's really, really hard.

[00:53:04] [SPEAKER_00]: Not that there isn't good reason to make it hard

[00:53:06] [SPEAKER_00]: but I think it was made harder

[00:53:08] [SPEAKER_00]: by the cultural fictional ethos that came

[00:53:13] [SPEAKER_00]: around psychiatric care.

[00:53:15] [SPEAKER_00]: And I think it's part of the reason

[00:53:16] [SPEAKER_00]: that what we think of as a homeless crisis

[00:53:18] [SPEAKER_00]: is a crisis of insufficient mental care for people.

[00:53:27] [SPEAKER_00]: And fear that they have for going in.

[00:53:30] [SPEAKER_00]: And so this story, this news story this week

[00:53:32] [SPEAKER_00]: only adds to that, it adds to it legitimately

[00:53:34] [SPEAKER_00]: because this is a bad organization, it would appear,

[00:53:36] [SPEAKER_00]: that's doing bad things

[00:53:37] [SPEAKER_00]: but I come back to the cultural piece of this.

[00:53:40] [SPEAKER_00]: So what do a whole bunch of movies

[00:53:43] [SPEAKER_00]: presenting AI as an evil monster

[00:53:46] [SPEAKER_00]: coming to get ya?

[00:53:47] [SPEAKER_00]: Right, too.

[00:53:48] [SPEAKER_00]: Well once you've finished laughing

[00:53:51] [SPEAKER_00]: or screaming first then laughing

[00:53:54] [SPEAKER_00]: does that stay in your head?

[00:53:55] [SPEAKER_00]: Don't worry, it's only PG-13.

[00:53:57] [SPEAKER_00]: There's not a lot to scream at here.

[00:53:59] [SPEAKER_00]: I don't like horror movies.

[00:54:00] [SPEAKER_00]: I'm an absolute marshmallow.

[00:54:02] [SPEAKER_00]: I'm a huge fan.

[00:54:04] [SPEAKER_00]: Oh no.

[00:54:05] [SPEAKER_01]: I'm a total huge fan.

[00:54:06] [SPEAKER_00]: The same to my wife the other day.

[00:54:07] [SPEAKER_00]: I don't get it.

[00:54:09] [SPEAKER_00]: Don't understand.

[00:54:11] [SPEAKER_00]: It's like eating too much hot sauce.

[00:54:15] [SPEAKER_00]: I don't get it.

[00:54:18] [SPEAKER_00]: So does that stay

[00:54:19] [SPEAKER_00]: as part of the cultural conversation then?

[00:54:22] [SPEAKER_00]: Does it have an effect on things like legislation

[00:54:26] [SPEAKER_00]: and coverage?

[00:54:27] [SPEAKER_00]: I'm not suggesting that anything should be done

[00:54:29] [SPEAKER_00]: about the horror movies.

[00:54:29] [SPEAKER_00]: Have fun, go ahead and do them.

[00:54:30] [SPEAKER_00]: That's fine.

[00:54:31] [SPEAKER_00]: Let's try to stand back a little bit

[00:54:33] [SPEAKER_00]: and ask why we think that AI is a dangerous force.

[00:54:37] [SPEAKER_00]: Are we listening to the cultists, the doomsters?

[00:54:39] [SPEAKER_00]: Are we listening to those who are exploiting it

[00:54:42] [SPEAKER_00]: in entertainment?

[00:54:43] [SPEAKER_00]: Is there a rational reason or not?

[00:54:46] [SPEAKER_00]: You thought there wasn't much to say about the story?

[00:54:49] [SPEAKER_00]: I can drone on about anything Jason.

[00:54:51] [SPEAKER_00]: Anything at all.

[00:54:51] [SPEAKER_00]: I figured we might go to some interesting places

[00:54:55] [SPEAKER_01]: and something like that.

[00:54:56] [SPEAKER_01]: Considering your background in Entertainment Weekly

[00:54:58] [SPEAKER_01]: and now AI, so I'm happy that we did talk about it.

[00:55:01] [SPEAKER_01]: I love horror movies.

[00:55:03] [SPEAKER_01]: I did see M3GAN last year

[00:55:07] [SPEAKER_01]: and it's a silly AI-driven horror film

[00:55:12] [SPEAKER_01]: and everything like that.

[00:55:13] [SPEAKER_01]: From my perspective it's kind of like

[00:55:15] [SPEAKER_01]: the horror film thing for me,

[00:55:18] [SPEAKER_01]: there's a certain anxiety component of it

[00:55:21] [SPEAKER_01]: that strangely is enjoyable to I think horror fans

[00:55:25] [SPEAKER_01]: and there's a compartmentalization that comes out of it too.

[00:55:29] [SPEAKER_01]: So while I totally understand where you're coming from

[00:55:32] [SPEAKER_01]: I do also think that, like, I came out of M3GAN

[00:55:35] [SPEAKER_01]: thinking that it was a ridiculous premise

[00:55:37] [SPEAKER_01]: even though it was AI, you know.

[00:55:39] [SPEAKER_01]: Were you a little scared during it?

[00:55:41] [SPEAKER_00]: Were you one inch?

[00:55:43] [SPEAKER_01]: No, it takes a lot to scare me with horror movies nowadays.

[00:55:45] [SPEAKER_01]: I wasn't scared at all.

[00:55:48] [SPEAKER_00]: Are your kids in the age where you go to the movies

[00:55:50] [SPEAKER_00]: like that with them or are they too old now?

[00:55:53] [SPEAKER_01]: I have taken, I took total tangent

[00:55:55] [SPEAKER_01]: but my older daughter, she's now 14,

[00:55:58] [SPEAKER_01]: I took her by her request to The Shining

[00:56:01] [SPEAKER_01]: at the movie theater last Halloween

[00:56:04] [SPEAKER_01]: and it was awesome.

[00:56:06] [SPEAKER_01]: We had a great time.

[00:56:08] [SPEAKER_01]: I'm like oh bad, is she starting to get to the age

[00:56:10] [SPEAKER_01]: where I can show her some of my favorites

[00:56:12] [SPEAKER_01]: and I think she is.

[00:56:13] [SPEAKER_01]: I appreciate it.

[00:56:14] [SPEAKER_01]: I'm sorry.

[00:56:15] [SPEAKER_01]: But I totally get it.

[00:56:15] [SPEAKER_01]: Some people hate horror movies.

[00:56:17] [SPEAKER_01]: I totally understand.

[00:56:19] [SPEAKER_01]: I got a bit about it that I just enjoy.

[00:56:21] [SPEAKER_00]: I'm still asking nosy questions.

[00:56:23] [SPEAKER_00]: What about your wife?

[00:56:25] [SPEAKER_01]: She can watch some of them.

[00:56:28] [SPEAKER_01]: She can't watch some of the ones.

[00:56:30] [SPEAKER_01]: Like I've seen so many at this point

[00:56:33] [SPEAKER_01]: and I know that there are some that like

[00:56:35] [SPEAKER_01]: I just wouldn't show her.

[00:56:38] [SPEAKER_01]: You know best.

[00:56:38] [SPEAKER_01]: She would not last.

[00:56:40] [SPEAKER_01]: But she's seen some.

[00:56:41] Yeah.

[00:56:42] [SPEAKER_01]: She's not a super fan like I am.

[00:56:44] [SPEAKER_01]: Right.

[00:56:45] [SPEAKER_01]: I'll just say that and I totally respect it.

[00:56:49] [SPEAKER_00]: So anyway, one last one last time.

[00:56:51] [SPEAKER_00]: Just curious now.

[00:56:52] [SPEAKER_00]: Is there any movie that is so freaky

[00:56:56] [SPEAKER_00]: you wouldn't watch it again?

[00:56:59] [SPEAKER_01]: Oh boy.

[00:57:00] [SPEAKER_01]: That's oh God.

[00:57:02] [SPEAKER_01]: That's.

[00:57:04] [SPEAKER_01]: Yeah.

[00:57:05] [SPEAKER_01]: There's probably there's a couple.

[00:57:08] [SPEAKER_01]: There's a new a new a new whatever

[00:57:13] [SPEAKER_01]: franchise called Terrifier.

[00:57:15] [SPEAKER_01]: That was pretty hard to watch.

[00:57:20] [SPEAKER_01]: Also when you go into horror movie fandom

[00:57:23] [SPEAKER_01]: it gets really weird really quickly.

[00:57:25] [SPEAKER_01]: Like there's certain aspects of horror

[00:57:26] [SPEAKER_01]: that I do not care for.

[00:57:29] [SPEAKER_01]: You know that really take it to a total extreme.

[00:57:33] [SPEAKER_01]: They stop being fun and they start being

[00:57:35] [SPEAKER_01]: a little too real.

[00:57:37] [SPEAKER_01]: I'm not sick of sticking.

[00:57:38] [SPEAKER_00]: Yeah.

[00:57:38] [SPEAKER_01]: Yeah.

[00:57:39] [SPEAKER_01]: And I don't really enjoy those.

[00:57:41] [SPEAKER_01]: And so those would be the ones that I probably wouldn't turn to.

[00:57:44] [SPEAKER_01]: That's the ones where it's in the end.

[00:57:45] [SPEAKER_01]: It's funny.

[00:57:46] [SPEAKER_01]: At the end it's kind of a joke, right?

[00:57:49] [SPEAKER_01]: But sometimes you're either you're in on the joke.

[00:57:51] [SPEAKER_01]: Yeah.

[00:57:52] [SPEAKER_01]: And you're in on the joke, hopefully,

[00:57:54] [SPEAKER_01]: but sometimes they go so far that like I'm not even connected

[00:57:58] [SPEAKER_01]: to the joke anymore and this is just uncomfortable

[00:58:00] [SPEAKER_01]: and I don't like that.

[00:58:03] [SPEAKER_01]: Thank you.

[00:58:05] [SPEAKER_01]: I should probably start a horror movie podcast actually.

[00:58:08] [SPEAKER_01]: That would be well.

[00:58:08] [SPEAKER_00]: The next story will inspire horror movies of plenty.

[00:58:11] [SPEAKER_01]: There we go.

[00:58:12] [SPEAKER_01]: Yeah, this is the perfect way to cap it off.

[00:58:14] [SPEAKER_01]: Speaking of anxiety, the 1X NEO Beta robot

[00:58:20] [SPEAKER_01]: because apparently we end our show with robots nowadays.

[00:58:24] [SPEAKER_01]: Norwegian robotics company 1X released a home

[00:58:27] [SPEAKER_01]: robot introduction video if you're watching the video version.

[00:58:31] [SPEAKER_01]: I can show you real quick here.

[00:58:33] [SPEAKER_01]: No audio.

[00:58:33] [SPEAKER_01]: I'm going to mute the audio but it's basically a woman in

[00:58:36] [SPEAKER_01]: a living room with a humanoid robot that I still can't

[00:58:41] [SPEAKER_01]: entirely tell whether it's a human.

[00:58:42] [SPEAKER_01]: It's a human.

[00:58:43] [SPEAKER_01]: It's messed up.

[00:58:44] [SPEAKER_01]: A Neo cream.

[00:58:45] [SPEAKER_01]: It really looks like it is.

[00:58:47] [SPEAKER_01]: Yeah, no.

[00:58:48] [SPEAKER_01]: Yeah, no.

[00:58:49] [SPEAKER_01]: I mean if it is a robot it's got some moves.

[00:58:53] [SPEAKER_00]: No, it's not.

[00:58:54] [SPEAKER_00]: It's not.

[00:58:55] [SPEAKER_00]: No.

[00:58:55] [SPEAKER_00]: This is just à la Elon Musk's demo of a robot.

[00:58:58] [SPEAKER_00]: No.

[00:59:00] [SPEAKER_00]: Why would you do that?

[00:59:01] [SPEAKER_00]: Jason, this is why this is why you like horror movies

[00:59:04] [SPEAKER_00]: because you're a sucker for this.

[00:59:05] [SPEAKER_00]: You're ready.

[00:59:05] [SPEAKER_00]: You want to believe.

[00:59:07] [SPEAKER_00]: No.

[00:59:07] [SPEAKER_00]: I want to believe.

[00:59:08] [SPEAKER_01]: No, this is not.

[00:59:09] [SPEAKER_01]: I mean there's something very eerie and motion-picture-like

[00:59:13] [SPEAKER_01]: about this little sequence in the video.

[00:59:15] [SPEAKER_01]: So if you're listening to the podcast definitely go find it

[00:59:19] [SPEAKER_01]: on Twitter.

[00:59:19] [SPEAKER_01]: It won't be difficult to find.

[00:59:21] [SPEAKER_01]: Search for 1X NEO Beta.

[00:59:23] [SPEAKER_01]: At the very end of the video the lady who's in the room

[00:59:28] [SPEAKER_01]: with the robot, she'd already walked out of the room.

[00:59:30] [SPEAKER_01]: She comes back and puts her arm around the robot who

[00:59:33] [SPEAKER_01]: puts its arm around her and they both wave to the camera.

[00:59:37] [SPEAKER_01]: There is just something really weird about this video.

[00:59:39] [SPEAKER_01]: I don't know, you know, if it is real I might be a little scared.

[00:59:44] [SPEAKER_00]: Like, legit.

[00:59:45] [SPEAKER_00]: The company's mission is to create an abundant supply

[00:59:48] [SPEAKER_00]: of labor via safe, intelligent robots.

[00:59:53] [SPEAKER_00]: I get it, but that's a little direct.

[00:59:57] [SPEAKER_01]: Yeah.

[00:59:58] [SPEAKER_01]: Well, prior to 2022, so a little backstory here.

[01:00:03] [SPEAKER_01]: They had been building robotics for factories and

[01:00:06] [SPEAKER_01]: commercial applications. In 2022 they partnered with OpenAI.

[01:00:12] [SPEAKER_01]: And so they're everywhere.

[01:00:14] [SPEAKER_01]: Now we're going into the kind of the consumery, here's a home

[01:00:19] [SPEAKER_01]: robot.