Jason Howell and Jeff Jarvis discuss Meta's new Movie Gen tool for AI video creation, Jason demos OpenAI's new Canvas interface, the controversial I-XRAY facial recognition demo and Zillow's AI staging acquisition.

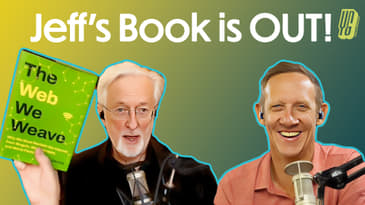

Jeff's book, The Web We Weave, is out now! Use code WEB20 for 20% off at checkout!

Please support our work on Patreon!

NEWS

How Meta Movie Gen could usher in a new AI-enabled era for content creators

OpenAI launches new ‘Canvas’ ChatGPT interface tailored to writing and coding projects

OpenAI Leaders Say Microsoft Isn't Moving Fast Enough to Supply Servers

[00:00:00] This is AI Inside, episode 38, recorded Wednesday, October 9th, 2024. Canvas, OpenAI's Answer to Integrated AI Workspaces.

[00:00:10] This episode of AI Inside is made possible by our wonderful patrons at patreon.com slash AI Inside Show.

[00:00:17] If you like what you hear, head on over and support us directly, and thank you for making independent podcasting possible.

[00:00:30] Boom! Welcome to another episode of AI Inside, the podcast where we take a look at the AI that's sprinkled, layered, whatever you want to say, throughout the world of technology.

[00:00:40] I'm one of your hosts, Jason Howell, joined as always by my co-host, smartest guy on the planet, Jeff Jarvis.

[00:00:47] Boom! I like that big opener. I think you should do that all the time.

[00:00:51] Boom!

[00:00:52] Yeah, you got to switch it up. You know, you got to keep people on their toes.

[00:00:55] How you doing, Jeff?

[00:00:56] How you doing? How about you?

[00:00:57] This is a big week for you. I'm doing good. We're going to talk about your big week here.

[00:01:01] Why don't we just start with that? Dude, The Web We Weave, out now. Like, it's done. It's a done deal.

[00:01:09] Your amazing book.

[00:01:10] Hardback.

[00:01:12] Available. Hardback. It's got to feel really good. You were sharing some videos online of, like, unboxing, and it was exciting. I'm so excited for you.

[00:01:22] Thank you. Congrats.

[00:01:22] So, yeah, it's out. It's, if you go to, now I also have a site, thanks to my son, Jake Jarvis. We finally have my site up. So if you go to Jake Jarvis, I mean, sorry, if you go to JeffJarvis.com, you find all the links to buy it.

[00:01:35] Along with my prior books, The Gutenberg Parenthesis and Magazine, which are still out and new. And so I'm grateful to all of you. Some very nice folks in our podcast world had already bought it, and they got it yesterday, which is really cool to see, and they held it up, and I love seeing that. So thank you.

[00:01:49] Let me know what you think. That is great.

[00:01:51] I already have the first bad review, so here we go.

[00:01:54] Well, you haven't made it until the bad reviews come, I guess.

[00:01:58] For this book, I expect it, because I say nice things about the internet and bad things about media, so guess what?

[00:02:04] Okay. Yeah.

[00:02:05] Okay. Interesting. Well, I can't wait to read it myself. I will admit I'm probably going to get it on Audible. It's just how I do books.

[00:02:13] Unfortunately, there isn't an Audible book, or not?

[00:02:15] Oh, there's going to be, though, right?

[00:02:17] I hope so. The publishing industry is so slow about this stuff now. It took months after The Gutenberg Parenthesis before we did it, so I don't know what's going on.

[00:02:24] Then I found out today my editor is now leaving as of Friday, so who knows?

[00:02:30] Oh, my goodness. Well, I'm going to cross my fingers, and then obviously, if it's not going to be an audiobook, then I'm going to get the – I will get the book.

[00:02:38] I will get the book. It's nice to have the real tangible thing, too.

[00:02:42] But big-time congratulations. Again, jeffjarvis.com, and of course, at the end of the show, we're going to mention it again, too, because I want Jeff's book to move tons of copies or whatever the parlance is in the publishing world.

[00:02:58] First, subscribe to this podcast and go to Patreon and support this podcast because this thing is ongoing.

[00:03:05] That's right. Indeed. You can subscribe by going to AIinside.show. That's how you can subscribe to the podcast.

[00:03:11] So if you're watching live, like many people are right now, do that so that you don't miss it.

[00:03:15] And then, yes, like Jeff said, patreon.com slash AIinsideshow. Ian Smart is one of our amazing smart patrons who joined us up from the very beginning.

[00:03:27] And we've got a lot more of you that I'm going to be reading out your names over the coming weeks and months, and I would love to add more names to that list.

[00:03:34] Plus, you get extra bonuses as well, along with our deep gratitude for enabling us to continue to do this show.

[00:03:41] Patreon.com slash AIinsideshow.

[00:03:46] All right. Well, let's get into some of the news topics that we have.

[00:03:52] And we had to do a little bit of a last-minute shuffling, so I'm not sure that I would have necessarily put Meta's Movie Gen at the top of the list if we hadn't done that.

[00:04:03] But nonetheless, here we are. Meta unveiled Movie Gen. It's a suite of AI models for video and audio content generation.

[00:04:11] Also, video editing. So it's kind of a...

[00:04:14] My dog is chewing on a squeaky toy.

[00:04:17] Can you hear that?

[00:04:19] Yes. This is not like a blip in the Matrix.

[00:04:22] This is just my dog passive-aggressively saying, you're not paying attention to me.

[00:04:27] Is that annoying?

[00:04:28] No.

[00:04:29] No, it's endearing.

[00:04:30] All right. Hopefully, because it is quite cute to watch him do that.

[00:04:35] I don't want to stop him.

[00:04:36] Meta's Sora competitor is essentially what this is.

[00:04:39] It's not something that's available yet, but it is pretty impressive.

[00:04:44] It's four total models, a 3 billion video gen model, 13 billion parameter audio model, and HD videos up to 16 seconds long.

[00:04:56] And you can remember when these video models were first starting to come out, I think it was like three seconds or five.

[00:05:03] And so people were doing all this gymnastics to try and extend things because often you need clips that are longer to do anything with them.

[00:05:11] And now these systems, this one's capable of 16 second long generations.

[00:05:16] It's getting interesting.

[00:05:17] It is, of course.

[00:05:18] Audio generated as well.

[00:05:19] And that's the thing about this one.

[00:05:20] This one adds audio.

[00:05:21] Yeah.

[00:05:21] Which is new and important.

[00:05:23] Not that you couldn't do that otherwise, but to get it all in one piece, I think it's really interesting.

[00:05:28] Yes, right.

[00:05:29] I always wonder, Jason, if the problem, I think, is trying to make the AI remember what it did because of the randomness.

[00:05:38] Is you think that, well, the thing you do is you do 16 seconds, then the next 16 seconds, then the next 16 seconds.

[00:05:43] But then the surfing hippo might go from green to purple to red because it does something different each time.

[00:05:52] So I think the challenge is how do you get it to string together without making the one effort so consuming of resource that you can't do it.

[00:06:00] Mm-hmm.

[00:06:01] Mm-hmm.

[00:06:02] Yeah, that is interesting, especially when it comes to things like extending something from one point to another.

[00:06:09] Like you do a generation, and oftentimes what they do is they do an analysis of the last frame, and then they extend from there.

[00:06:17] And one thing that I've noticed in a lot of these things, both image generation and movie generation, is if you give it a source thing and you say generate something around this, it does something like if it's a face, for example, and especially if it's a face you know, then the generation might look kind of mostly like it, but the mannerisms aren't the same.

[00:06:38] That next generation, you know, similar in like text, like oftentimes it kind of loses the subtlety and the detail.

[00:06:47] And so, yes, if it acted on its own, it would be interesting, but merged together, there's like a disconnect between how they both approach the same thing.

[00:06:55] And that's a real big challenge with systems like this.

[00:06:58] But having something that goes longer from the outset, I'm guessing, I'm wondering if that kind of tackles that a little bit because it keeps it focused on that particular interpretation of what that scene would be versus having to reinterpret it again.

[00:07:14] Yeah, and it's just a scene.

[00:07:16] And, you know, as I mentioned on the show, I wrote a syllabus for a course on AI and creativity.

[00:07:22] And it strikes me that you can do something cute and neat in 16 seconds.

[00:07:28] Is that sufficient to express yourself?

[00:07:32] Now, if you go back to what was the old Twitter video thing?

[00:07:36] Was it seven seconds?

[00:07:37] What was that?

[00:07:38] Jesus.

[00:07:39] I'm old enough to forget.

[00:07:40] What was it called?

[00:07:41] Yeah, I think, right, totally.

[00:07:43] I can't remember because the squeaking is throwing me off a little bit.

[00:07:49] But, yeah, I mean, a lot of the earlier ones were much smaller.

[00:07:53] Were shorter, yeah.

[00:07:54] But still, it has limited utility.

[00:07:59] But it's getting there.

[00:08:00] But it's getting there, and it's interesting, it's fun, and I'm very curious to know what Hollywood is thinking about.

[00:08:06] You know that there are huge meetings going on inside studios trying to figure out how to use this without peeving the unions.

[00:08:13] Yeah, well, for sure.

[00:08:15] And that's going to be a really big hurdle, right?

[00:08:18] Because you look at things like this, you know, the swimming hippo.

[00:08:22] And prior to where we are right now, you know, this would have taken a visual effects artist or a visual effects team a lot of time and resources.

[00:08:33] And energy and knowledge and, you know, and along with that, a large paycheck to generate something like this.

[00:08:41] So Hollywood, very union-based in a lot of ways, going to be really interested in ensuring that.

[00:08:49] He just wants that.

[00:08:51] I like it.

[00:08:51] I like it.

[00:08:52] It's the squeak show.

[00:08:53] Oh, dude, I love you.

[00:08:54] I love you, Bronson.

[00:08:56] You're fine.

[00:08:57] But I got to take this away.

[00:08:58] And it worked.

[00:08:59] I wanted Dad's attention, and I got it.

[00:09:01] Oh, it totally did.

[00:09:02] Oh, no, you took it away?

[00:09:05] I can't concentrate, man.

[00:09:08] He'll be okay.

[00:09:09] He'll get all of me once the show is over.

[00:09:12] And look, I took him to the dog park for like 30 minutes, which wasn't enough, but it was something.

[00:09:17] He'll be fine.

[00:09:18] I'm sorry, Bronson.

[00:09:21] Anyways.

[00:09:22] Well, I think we need to make, now you have to make a video of Bronson squeaking.

[00:09:27] Pouting?

[00:09:28] Yes.

[00:09:29] Pouting.

[00:09:29] Oh, yeah.

[00:09:30] There we go.

[00:09:31] Yeah, and I could just play that in the background every time we talk about a topic to throw myself off.

[00:09:35] No.

[00:09:36] Well, when you said that, you know, 16 seconds, like, is that enough to do something with?

[00:09:42] I mean, when I think of the Hollywood context, I think this is where it gets to the point to where it is enough to do something with.

[00:09:50] I think it's the stability, because, you know, how often are you watching a scene that is, you know, some sort of like, let's say, visual kind of VFX sort of laden scene that's longer than 16 seconds?

[00:10:04] I mean, it doesn't really happen in Hollywood.

[00:10:07] That's true, an establishing shot.

[00:10:07] And I remember we had on a tool, like, two months ago, where there were dials on it, and you had the same building, and you could turn it from snowy to hot to this style of architecture to that style.

[00:10:23] Continuity matters so tremendously in entertainment that how do you guarantee that you can get what you want, and that it's contiguous with what you had?

[00:10:36] I think that's going to be a challenge.

[00:10:38] I think that's going to be a challenge, and that's going to be a real big frontier for these tools to really solidify themselves as, you know, as true go-tos in Hollywood and for digital, you know, video effects artists.

[00:10:52] They're going to need that continuity.

[00:10:54] They're going to need to know that when they use that tool, it's not going to, you know, produce these little variations that are a tell to people who know, you know, what they're looking for.

[00:11:04] A story that's not in the rundown because it came in late, but California, last week we talked about how the governor vetoed legislation on AI, but he signed three or four bills before that.

[00:11:17] Well, one of them just got challenged in the courts and was halted with an injunction because somebody made a deepfake video of, I was going to say Hillary Clinton, of Kamala Harris doing something.

[00:11:32] And is this in the rundown?

[00:11:33] Did I screw up?

[00:11:34] I don't know that this is in the rundown.

[00:11:36] Okay, fine.

[00:11:36] So I'll just add it in.

[00:11:40] And so the person who made, the right winger who made the video sued and won an injunction based on the First Amendment.

[00:11:48] My point being, he was using AI to do a deepfake and we're going to see a lot more of that and we're just going to have to live with it.

[00:11:56] We have to live with it because the technology is possible.

[00:11:59] It's getting better as these videos show you.

[00:12:00] It can do amazing things.

[00:12:03] And you've got a point.

[00:12:04] 16 seconds is enough to make disinformation.

[00:12:06] Yep, pretty easily.

[00:12:07] And the First Amendment is going to allow it to be out there.

[00:12:10] And so we're going to have to rely on other systems and other mechanisms to know what's real, if we even know what's real.

[00:12:17] Yeah, right.

[00:12:18] Yeah, well, and I saw also an article that was about a lot of kind of deepfake video or I guess it's more of like images being shared around the hurricane and kind of misleading stuff there.

[00:12:35] And yeah, yeah.

[00:12:36] I mean, we will continue to see more and more of this.

[00:12:41] It's interesting.

[00:12:44] I don't know what more I have to say about that, but I am interested from a production standpoint.

[00:12:52] Like I have the Adobe Creative Cloud Suite and this has nothing to do specifically with Adobe tools.

[00:12:59] But Adobe is one kind of example of a company that makes creative tools that are definitely integrating the generative AI stuff into the tools that people are already using in useful ways.

[00:13:14] I mean, I've definitely used some of the generative aspects of the app, like Photoshop primarily.

[00:13:22] Some of this stuff, you know, is available and coming to Adobe Premiere.

[00:13:26] And I think that's ultimately I think that's where in my mind a lot of this is headed is at a certain point we get these kind of generative video models, let's say, to a point to where they're good enough that we can then bring those tools into the software you're using.

[00:13:43] And when you find yourself in a pinch, you can generate that thing.

[00:13:47] And, you know, Adobe's already shown off technology for Premiere that does this.

[00:13:52] I don't know that it's necessarily rolled out yet.

[00:13:54] I could be wrong on that.

[00:13:55] I haven't.

[00:13:56] I certainly haven't used it, but I think that's the next frontier.

[00:13:59] I think that's what we see in the next couple of years.

[00:13:59] Is the user interface for all that, Jason, descriptive by command?

[00:14:02] In other words, you're telling it what you want by describing it?

[00:14:06] Yeah, actually.

[00:14:07] Well, so my interaction with this in Adobe tools is in Photoshop.

[00:14:10] And pretty much by default, when you open up an image and you have this feature enabled, you get a little kind of prompt window underneath the image that you're looking at.

[00:14:23] And it's, you know, like one thing that I use literally every single day for the stuff that I do in podcasting when I'm creating thumbnails.

[00:14:29] I can pull up an image of you and I can say remove, you know, from background or actually what it is is it selects you.

[00:14:38] So it intelligently analyzes your view and is able to do the outline of you, which is something that used to be a manual process and it would take minutes.

[00:14:47] Around the hairs and everything, it's a pain, yeah.

[00:14:49] Yeah.

[00:14:50] And it's not 100% perfect every single time, but it gets you 95% of the way there.

[00:14:54] Do a little touch up and you're good to go.

[00:14:56] I can remove you from your background very easily in just a couple of seconds.

[00:15:00] Cool.

[00:15:01] Yeah.

[00:15:02] So, yeah, it's integrated in and then, you know, you could do generative stuff around that too.

[00:15:06] But I think they've got a lot more ideas as far as how they expand upon that in the future.

[00:15:11] Good.

[00:15:12] Well, so the next story is one that I put in.

[00:15:15] Yeah.

[00:15:15] And I noticed this is one that missed my radar anyways.

[00:15:20] Came out a couple of weeks ago.

[00:15:21] So this isn't like entirely new, but it's completely related.

[00:15:25] Yeah.

[00:15:26] And so it's super worth talking about.

[00:15:29] Yeah.

[00:15:29] Do you want to set it up?

[00:15:30] It's very simple.

[00:15:31] Well, it's very complex, of course, but it's simple.

[00:15:33] And what it does is that it's an Amazon add-on to ads.

[00:15:37] So you can take any still image and it will add a contextual animation to it.

[00:15:43] So there's one here where you've got an Amazon Madame A sitting on a table in front of a seashore.

[00:15:50] And it will make the sea waves come up.

[00:15:54] And if you click on that video there, Jason.

[00:15:57] Oh, this video right here.

[00:15:58] Right there.

[00:15:58] So there's a video of coffee mug and now the steam is animated and moving.

[00:16:04] Flowers and now there's a breeze in the background.

[00:16:09] Sky and now the stars are moving.

[00:16:11] And whether that's moving the right way or the wrong way, I have no idea.

[00:16:14] Right.

[00:16:16] The toilet is flushing in the wrong direction.

[00:16:18] Right.

[00:16:19] Seashore.

[00:16:19] So it's in the end, it's simple, but it does something.

[00:16:24] I don't know.

[00:16:24] If you've read the New York Times these days, they've had this, you know, well, I forget what the Harry Potter newspaper was called.

[00:16:34] Oh, yeah.

[00:16:35] I am not a Harry Potter.

[00:16:37] Aficionado?

[00:16:38] No, neither am I.

[00:16:39] But there was the newspaper.

[00:16:40] And it was wow-y at the time of the movie that the photos in the newspaper moved.

[00:16:46] Right?

[00:16:46] And that's basically where we are now.

[00:16:47] So the New York Times right now.

[00:16:48] Kind of where we're at now.

[00:16:49] Exactly.

[00:16:50] Totally.

[00:16:50] You're absolutely right.

[00:16:51] The Times on its homepage now regularly does celebrity interviews and they'll have somebody like Robert De Niro and he's doing a three-second movement.

[00:16:58] And it just loops and repeats and that's all it is.

[00:17:01] Well, this brings the same technology and ability to advertisers.

[00:17:05] So I think that more and more the still image becomes rarer.

[00:17:09] That's why I found this interesting.

[00:17:11] So if you add these two things together, this is a very much lighter version than the swimming hippo.

[00:17:17] It's just flowers in a breeze.

[00:17:19] That's all it is.

[00:17:19] But it's now very easy to do that.

[00:17:22] And I think it will create more of an expectation of moving photos.

[00:17:27] That was...

[00:17:27] Mm-hmm.

[00:17:28] Right.

[00:17:29] Entertain my eyes, please.

[00:17:31] Right.

[00:17:31] The still image isn't good enough anymore.

[00:17:32] Which I also think we will get tired of very quickly.

[00:17:34] Yeah.

[00:17:35] Yeah, it's kind of like Flash, right?

[00:17:37] Yep.

[00:17:37] Like, oh, Flash is amazing.

[00:17:39] It animates the web.

[00:17:40] And then at a certain point, it's like, oh, my God, it gives me a screaming headache and makes everything unsafe.

[00:17:45] In this case, generates 720p videos with two scenes, headlines, background music, call to action, can customize.

[00:17:54] And then if you've got an ad campaign, you can customize the font, the soundtrack, the brand logo, which is pretty darn important for you to be able to dial in specifically.

[00:18:07] Yeah.

[00:18:08] Yeah.

[00:18:08] Super interesting.

[00:18:09] Coming in a lot of different ways.

[00:18:10] And I wonder, you know, this is all about ad marketing and stuff.

[00:18:14] Like, I do wonder, like, how, what the data says about the effectiveness of ads with moving images versus something that is still and static.

[00:18:24] Yeah.

[00:18:25] Yeah.

[00:18:25] Yeah.

[00:18:26] So.

[00:18:27] If it's not effective, it will disappear.

[00:18:29] If it is effective, it will be everywhere until it's ineffective because it's everywhere.

[00:18:33] Until it's ineffective.

[00:18:34] And then it's, yeah, we're going back to the way it was.

[00:18:37] Black and white.

[00:18:38] Daguerreotypes.

[00:18:40] Can you just print it out onto a piece of paper for me?

[00:18:43] Seriously.

[00:18:46] Okay.

[00:18:46] So OpenAI launched Canvas also.

[00:18:49] This is another piece of news from, I think, last week, late last week.

[00:18:53] And what this is is a chat interface combined with a kind of a windowed interface, essentially, for writing and code projects.

[00:19:05] So the idea, my understanding of the idea, and I'm going to show it off here in a second because I have it all loaded up,

[00:19:11] is that instead of going into the chat window and just having everything be that vertical kind of stream of text

[00:19:18] and having to go back up and highlight and copy that down and whatever, it's more of like an editing platform.

[00:19:25] It's kind of like an editor that has the AI tools built into it so you can do things in line in the experience,

[00:19:33] and it might just be a more creative environment to work within.

[00:19:37] That's really interesting.

[00:19:38] It's analogous.

[00:19:39] It seems to me, the early days of the internet, which was a terminal interface at first, just lines of text,

[00:19:47] until you got to the GUI, the graphical user interface.

[00:19:50] So is this the GUI phase of ChatGPT?

[00:19:53] Yeah, certainly, to a certain degree.

[00:19:55] So I've got it up here.

[00:19:57] And let me think here.

[00:20:00] Should we do something about your book?

[00:20:04] Are you curious to hear what it has to say about your book?

[00:20:08] Let's say, tell me about Jeff Jarvis's new book, The Web We Weave.

[00:20:21] List the highlights that you find.

[00:20:25] Okay.

[00:20:26] So, oh, I'm sorry.

[00:20:28] I went into the wrong mode.

[00:20:29] So this is just 4o.

[00:20:30] If I go up here, I can go to 4o with Canvas.

[00:20:33] So I copied this.

[00:20:35] I'm wondering if it's actually going to redo that.

[00:20:38] So let me try and fire this off again.

[00:20:41] I'm with Canvas.

[00:20:42] I'm going to go ahead and enter that in and fire it off.

[00:20:45] So now it's searching.

[00:20:46] It's doing its research.

[00:20:47] It's printing things out.

[00:20:49] So it's going off the web.

[00:20:50] It's current.

[00:20:52] Yep, yep.

[00:20:52] Search from five sites.

[00:20:54] So it has Hachette Book Group, Bing.com,

[00:21:00] which is just the search that probably pulled up this.

[00:21:03] Barnes & Noble, a review on Kirkus Reviews, I'm assuming.

[00:21:08] Christianity Today.

[00:21:09] I don't know if that has anything to do with it.

[00:21:11] It is.

[00:21:11] It's my first bad review, but I'm not reading it.

[00:21:14] Oh, okay.

[00:21:15] Interesting.

[00:21:15] You were talking about you got your first bad review.

[00:21:17] That must be the one.

[00:21:18] Okay.

[00:21:19] So now we've got this kind of layout.

[00:21:21] And then what we can do from here is we can highlight portions, I believe,

[00:21:27] and expand upon them, right?

[00:21:30] Oh, give me this in markup.

[00:21:33] First of all, let me try this in markup.

[00:21:37] Let's see if it can kind of – oh, okay.

[00:21:39] So here we go.

[00:21:40] So now we've got the interface.

[00:21:42] So that's one way that I know how to pull up the interface,

[00:21:45] and I'm not sure, but there's probably another way to do it,

[00:21:47] but I had to find an example because I was getting that other interface

[00:21:50] even though I was in the canvas mode,

[00:21:53] and it wasn't giving me this separated interface.

[00:21:55] But once I asked for it in markup, then it like pops right over.

[00:21:58] So now we've got this kind of interactive interface

[00:22:01] where we've got the kind of ChatGPT conversation on the left-hand side,

[00:22:07] and then we've got the formatted or the output on the right-hand side.

[00:22:11] I could highlight this and hop into here,

[00:22:17] and is this an added prompt?

[00:22:22] Expand to two paragraphs.

[00:22:26] Let's see.

[00:22:27] Okay, so that's highlighted that particular thing.

[00:22:31] Okay, it didn't quite give me two paragraphs,

[00:22:33] but it did lengthen it.

[00:22:35] All right.

[00:22:36] Can you say change the number of paragraphs to bullets?

[00:22:39] Just something like that?

[00:22:44] Change the number of paragraphs.

[00:22:46] The numbered paragraphs.

[00:22:47] Oh, the number.

[00:22:48] Oh, I got you.

[00:22:49] Numbered paragraphs to a bulleted list.

[00:22:54] Bulleted list.

[00:22:57] And then would it edit these?

[00:22:59] Okay, so it did.

[00:23:00] It dropped those down.

[00:23:01] And then what you were talking about,

[00:23:02] kind of like a GUI interface,

[00:23:03] down here is a little tool set area.

[00:23:08] Aha.

[00:23:08] And essentially, you know,

[00:23:09] there's like suggest edits,

[00:23:11] adjust the length, reading level.

[00:23:13] This is interesting.

[00:23:14] So take, let's take this first paragraph

[00:23:17] and go to reading level,

[00:23:19] and keep current reading level.

[00:23:21] I could boost it up to like graduate school,

[00:23:23] college.

[00:23:24] Let's go to graduate school.

[00:23:25] Let's see what it does.

[00:23:26] Or down to kindergarten.

[00:23:27] I mean, we can go either direction.

[00:23:30] Let's do one paragraph graduate school.

[00:23:32] All right, graduate school.

[00:23:33] Let's see what it does.

[00:23:35] And yeah, I mean,

[00:23:36] we might not want to read entirely through this,

[00:23:39] but contends that regulatory attempts

[00:23:41] to fix the internet are often misdirected

[00:23:43] as they risk subverting the fundamental ideals

[00:23:45] of free expression and community

[00:23:47] that underpin the media.

[00:23:48] Okay, so here's the next sentence begins.

[00:23:52] He posits.

[00:23:53] That's a very grad school word.

[00:23:56] Yes, yes, indeed, indeed.

[00:23:57] Okay, so now let's go kindergarten on this.

[00:24:00] I'm really curious to know

[00:24:01] what the kindergarten perspective is on this.

[00:24:05] Jarvis thinks that trying to fix the internet

[00:24:06] with rules can be a bad idea.

[00:24:09] Bad idea.

[00:24:09] Because it can hurt people's freedom

[00:24:11] to say what they want

[00:24:12] and be a part of a community.

[00:24:14] He says the problems people see on the internet,

[00:24:16] like people being mean or sharing wrong information

[00:24:19] are not because of the internet,

[00:24:21] but because of how people act.

[00:24:24] So this little tool gives you the ability to work.

[00:24:28] And I think this is kind of the power

[00:24:29] of this sort of approach.

[00:24:31] And I think this is obviously where we're headed.

[00:24:34] Is that we can,

[00:24:35] instead of being locked into the long stream of things

[00:24:39] and hoping that it gets it right,

[00:24:40] I can isolate my commands to a very specific portion

[00:24:45] and control around that,

[00:24:47] leave everything else untouched,

[00:24:49] I could, you know, add emojis.

[00:24:51] Okay, let's add emojis to this particular thing.

[00:24:53] I don't know.

[00:24:54] That's not something that,

[00:24:55] oh my goodness, look at that.

[00:24:57] That's pretty awful.

[00:24:57] Now this paragraph is ready for social media.

[00:25:04] Anyways.

[00:25:05] So after the word widespread,

[00:25:06] it has the frightened face.

[00:25:09] Yes.

[00:25:09] Oh my goodness.

[00:25:11] Moral entrepreneurs who use,

[00:25:13] it has the dubious face for money

[00:25:16] and puts in a bag of money.

[00:25:18] Yeah.

[00:25:18] Well, okay.

[00:25:20] No word there, right?

[00:25:22] It's not like the word money

[00:25:24] followed by the bag of money.

[00:25:26] The bag of money is meant to represent the word money.

[00:25:30] So this cannot do illustrations, right?

[00:25:32] This ChatGPT has got its own do images.

[00:25:35] Not that I'm aware of.

[00:25:39] Turn this concept into an image.

[00:25:43] I don't know.

[00:25:45] I doubt that it's going to do it.

[00:25:46] Oh, oh, wait a minute.

[00:25:48] Here you go.

[00:25:49] We'll see what it comes,

[00:25:50] like what's it going to come up with.

[00:25:52] I'm super curious.

[00:25:54] Here's an illustration based on the concept

[00:25:56] of reclaiming the internet from exploitation.

[00:25:58] So it's just a big globe,

[00:26:00] like a World Trade, World's Fair globe

[00:26:02] with people standing all over it

[00:26:05] trying to keep their balance and jumping off.

[00:26:08] It really doesn't illustrate anything,

[00:26:10] but I have sympathy for the machine

[00:26:12] because I wouldn't know how to illustrate this.

[00:26:14] I know.

[00:26:14] Like where would you even begin?

[00:26:16] Hey, it's better than nothing, I suppose.

[00:26:18] Or maybe it's not.

[00:26:19] Now what I want to do is I want to run this

[00:26:20] through Amazon's animation engine

[00:26:23] and see if the animated version of this image

[00:26:27] is more appealing than the still image.

[00:26:31] Anyways, I think all in all what this shows to me,

[00:26:35] because I think we've seen this also

[00:26:36] in other kind of approaches.

[00:26:39] Anthropic has its Artifacts interface

[00:26:41] from earlier this year in June.

[00:26:44] And there is this kind of trend

[00:26:46] towards editable workspaces kind of like this.

[00:26:50] Taking what we know about how we interact

[00:26:53] with these LLMs to get certain pieces of information

[00:26:58] and what we use them for,

[00:27:00] writing a report of some sort.

[00:27:04] Or this is also really useful for coding.

[00:27:06] And if I had any sort of sense of coding,

[00:27:09] I'd fire that up and show you

[00:27:10] that these tools down here

[00:27:11] are different on the coding experience.

[00:27:14] And they're really dialed into coding tools.

[00:27:17] And it makes it more interactive

[00:27:21] and integrated, I guess,

[00:27:23] versus what we've been seeing.

[00:27:25] And I think that's a positive trend.

[00:27:27] Yeah.

[00:27:29] That's really, really interesting.

[00:27:31] Yeah.

[00:27:32] This is available to ChatGPT Plus and team users.

[00:27:35] I am a Plus user right now as I experiment,

[00:27:39] continue to experiment with ChatGPT.

[00:27:41] I saw there was a story on the rundown for TWiG

[00:27:43] that Leo put in there.

[00:27:45] So it's one of his that said,

[00:27:48] from CIO magazine,

[00:27:50] the devs are gaining little, if anything,

[00:27:52] from AI coding assistants.

[00:27:54] So it'll be really interesting.

[00:27:55] I don't think we've seen a lot of anecdotal stuff,

[00:28:00] a lot of wishful stuff.

[00:28:02] I don't think we've yet seen

[00:28:04] the real impact of this on coding.

[00:28:05] And since I'm not a coder either,

[00:28:07] I can't really judge the value of it.

[00:28:09] People have used Copilot for a long time.

[00:28:10] They've done a lot of things in this.

[00:28:12] But it will be interesting to see over a period,

[00:28:15] and with these augmented tools,

[00:28:17] if you take somebody,

[00:28:18] I should try to do this

[00:28:19] and then try to have it make some code for me

[00:28:21] and then have it figure it out,

[00:28:22] whether it can help an idiot like me.

[00:28:25] We'll see.

[00:28:26] Yeah.

[00:28:27] Yeah.

[00:28:28] Like, is the value in enabling people

[00:28:32] who wouldn't normally know how to code

[00:28:35] have an ability to do so

[00:28:37] with the assistance of the machine?

[00:28:38] Or is the value in giving people

[00:28:40] who do know how to code

[00:28:41] tools that help them code better

[00:28:44] or more efficiently

[00:28:45] or more effectively or whatever?

[00:28:46] I mean, obviously,

[00:28:47] there's value probably in both.

[00:28:48] But where does this technology

[00:28:51] prove itself to be most valuable?

[00:28:53] And I think that still remains to be seen.

[00:28:56] Yep.

[00:28:56] Seems like.

[00:28:59] Speaking of OpenAI,

[00:29:01] reportedly backing off on reliance

[00:29:03] on its kind of closeness

[00:29:06] to Microsoft's compute resources,

[00:29:09] developing its own AI chip

[00:29:11] as one example.

[00:29:12] It's planning to lease a data center

[00:29:14] from Oracle in Texas

[00:29:15] as another.

[00:29:17] OpenAI CFO Sarah Friar

[00:29:18] has reportedly

[00:29:20] been frustrated

[00:29:23] with Microsoft's pace

[00:29:25] in supplying compute power,

[00:29:27] which just shows me

[00:29:29] kind of like accelerationism

[00:29:31] on the OpenAI side.

[00:29:34] In effect,

[00:29:35] OpenAI really seems like

[00:29:36] it's at a point right now

[00:29:37] where it's like,

[00:29:38] let's accelerate,

[00:29:40] let's move fast.

[00:29:41] You know,

[00:29:41] they're starting to kind of

[00:29:42] open up the business model

[00:29:45] to be a for-profit,

[00:29:47] which is only going to accelerate

[00:29:49] that even faster.

[00:29:51] And this seems like

[00:29:52] kind of a component of that.

[00:29:55] Or am I off base on that?

[00:29:56] I mean,

[00:29:57] accelerationism was the word

[00:29:58] that came to mind for me

[00:29:59] when I read this article.

[00:30:00] I was like,

[00:30:01] God,

[00:30:01] am I applying that appropriately here?

[00:30:04] Well,

[00:30:04] if you go to the full accelerationism,

[00:30:06] it's the opposite of doomerism.

[00:30:08] Yeah.

[00:30:09] That's the philosophy piece.

[00:30:10] On a practical level,

[00:30:12] this is,

[00:30:13] we want more servers faster.

[00:30:15] Right.

[00:30:16] Right.

[00:30:16] So accelerate that.

[00:30:18] And I was trying to look,

[00:30:20] I don't know that we ever saw

[00:30:21] an accounting

[00:30:22] for how much of Microsoft's

[00:30:23] $100 billion,

[00:30:25] or sorry,

[00:30:26] $10 billion investment

[00:30:27] in OpenAI

[00:30:29] was cash

[00:30:30] versus in-kind contribution

[00:30:32] of compute.

[00:30:34] And so,

[00:30:35] what's interesting here

[00:30:36] to me

[00:30:37] is whether this

[00:30:38] will have an impact

[00:30:40] in the new

[00:30:42] structure

[00:30:42] of OpenAI

[00:30:43] as a for-profit company.

[00:30:44] Is this also a way

[00:30:46] to try to reduce

[00:30:47] Microsoft's

[00:30:49] holdings

[00:30:49] in OpenAI?

[00:30:51] Yeah.

[00:30:52] Well,

[00:30:52] you guys just aren't fast enough.

[00:30:53] You're not giving us

[00:30:54] what we want.

[00:30:54] Let's,

[00:30:55] you know,

[00:30:56] we're going to take back

[00:30:57] some stock.

[00:30:58] Okay,

[00:30:58] fine.

[00:30:58] We're going to take back

[00:30:59] some of that equity

[00:31:00] because it's now

[00:31:01] much more valuable

[00:31:02] than it was

[00:31:02] when we did this deal.

[00:31:05] So,

[00:31:06] this is high-powered.

[00:31:08] We don't need you,

[00:31:08] Microsoft.

[00:31:09] It's high-powered

[00:31:13] finances

[00:31:14] and M&A

[00:31:15] that's beyond my pay grade

[00:31:16] for sure,

[00:31:17] but I think

[00:31:18] there's a lot

[00:31:19] of fascinating

[00:31:21] spreadsheeting

[00:31:21] going on

[00:31:22] between these companies.

[00:31:24] Mm-hmm.

[00:31:25] For sure.

[00:31:26] For sure there is.

[00:31:28] Interesting.

[00:31:29] Kind of feeling

[00:31:29] a little bit like

[00:31:30] the bloom

[00:31:31] was off the rose

[00:31:32] as they say

[00:31:33] with the

[00:31:34] Yeah,

[00:31:34] the thorns

[00:31:35] are still there.

[00:31:35] Yep.

[00:31:36] We'll stop

[00:31:36] after.

[00:31:39] All right.

[00:31:40] We're going to take

[00:31:40] a super quick break

[00:31:41] and then when we come back

[00:31:42] we're going to talk

[00:31:43] about smart glasses

[00:31:44] because everybody

[00:31:45] had opinions

[00:31:46] about smart glasses

[00:31:48] and what they're capable of.

[00:31:49] That's coming up

[00:31:49] here in a second.

[00:31:53] All right.

[00:31:54] It seemed like

[00:31:55] last week

[00:31:56] everybody was

[00:31:56] flipping their lid

[00:31:57] about a video

[00:31:59] shared online

[00:32:00] that showed

[00:32:01] two Harvard students

[00:32:03] demonstrating

[00:32:03] their creation

[00:32:05] something called

[00:32:05] I-XRAY

[00:32:06] where they took

[00:32:08] Meta

[00:32:09] Ray-Ban glasses

[00:32:10] and they were

[00:32:12] streaming video

[00:32:13] my understanding

[00:32:14] anyways is that

[00:32:15] they were streaming

[00:32:15] video

[00:32:16] to a system

[00:32:17] that then

[00:32:18] processed the video

[00:32:19] in real time

[00:32:20] with facial recognition

[00:32:21] cross-checked

[00:32:23] what it found

[00:32:24] so identifying

[00:32:25] who that person

[00:32:26] is let's say

[00:32:27] based on public

[00:32:29] databases

[00:32:30] public information

[00:32:31] so what

[00:32:31] essentially

[00:32:32] what it would allow

[00:32:33] according to their video

[00:32:35] was the ability

[00:32:36] to wear the glasses

[00:32:37] and identify

[00:32:38] random people

[00:32:39] out and about

[00:32:40] in the real world

[00:32:41] access their personal

[00:32:42] information

[00:32:42] in real time

[00:32:43] and you know

[00:32:44] throughout the video

[00:32:44] they're stopping people

[00:32:46] and asking them

[00:32:47] very specific

[00:32:48] targeted questions

[00:32:49] based on the information

[00:32:51] they're receiving

[00:32:51] from the system

[00:32:52] and you know

[00:32:54] what do you know

[00:32:55] they're right

[00:32:56] and isn't that amazing

[00:32:58] and it

[00:32:59] essentially

[00:33:00] the demonstration

[00:33:01] shows like

[00:33:02] all these tools

[00:33:02] working together

[00:33:04] combined together

[00:33:05] to do something

[00:33:06] like this

[00:33:07] and you know

[00:33:08] they were able

[00:33:08] to get

[00:33:08] you know

[00:33:09] a lot of

[00:33:10] information online

[00:33:11] right

[00:33:12] names

[00:33:12] addresses

[00:33:13] phone numbers

[00:33:14] ages

[00:33:14] names of relatives

[00:33:15] partial social security

[00:33:17] numbers

[00:33:19] but

[00:33:20] and so

[00:33:20] of course

[00:33:21] people saw this

[00:33:22] and many people

[00:33:23] were like

[00:33:24] oh my goodness

[00:33:25] insert panic here

[00:33:26] right

[00:33:27] yeah

[00:33:27] the sky is falling

[00:33:29] this is

[00:33:29] this is horrible

[00:33:30] this is our worst fears

[00:33:31] coming to light

[00:33:33] Mike Elgan

[00:33:33] friend of the show

[00:33:34] who we were possibly

[00:33:35] going to get

[00:33:36] on the show today

[00:33:37] but I think

[00:33:37] there was a miscommunication

[00:33:38] on when exactly it is

[00:33:39] so I will get him on

[00:33:40] in a future episode

[00:33:42] but he wrote

[00:33:43] for his

[00:33:44] Machine Society

[00:33:44] newsletter

[00:33:45] and he has another

[00:33:46] piece coming out

[00:33:47] in a couple of days

[00:33:48] that you want to look

[00:33:49] for on this topic

[00:33:51] about

[00:33:51] these smart glasses

[00:33:53] and

[00:33:55] essentially

[00:33:55] proving the case

[00:33:56] that I think

[00:33:57] I'm guessing

[00:33:58] and assuming

[00:33:59] you probably

[00:34:00] subscribe to

[00:34:01] as I do

[00:34:02] which is

[00:34:03] that the glasses

[00:34:04] aren't really

[00:34:05] the issue here

[00:34:06] I mean

[00:34:07] we've all

[00:34:07] we've all

[00:34:08] made the commitment

[00:34:09] to share

[00:34:10] our data

[00:34:10] in wide swaths

[00:34:12] online

[00:34:13] over the course

[00:34:13] of decades

[00:34:14] at this point

[00:34:15] are we really

[00:34:16] surprised

[00:34:16] that it's easy

[00:34:17] to take

[00:34:18] this information

[00:34:18] publicly available

[00:34:19] information

[00:34:20] and squish it

[00:34:21] together

[00:34:22] into such

[00:34:22] a potent

[00:34:23] soup

[00:34:23] what do you

[00:34:24] think

[00:34:24] right

[00:34:24] I agree

[00:34:25] and there are

[00:34:26] other issues

[00:34:26] to deal with

[00:34:27] other concerns

[00:34:27] of course

[00:34:28] there are

[00:34:28] stipulated

[00:34:29] your honor

[00:34:29] but

[00:34:31] what Mike's

[00:34:32] saying is

[00:34:32] let's calm

[00:34:33] down the panic

[00:34:34] here

[00:34:34] and he said

[00:34:34] what the glasses

[00:34:35] really contribute

[00:34:35] is a camera

[00:34:37] and you could

[00:34:38] use a camera

[00:34:39] of any sort

[00:34:40] you can use

[00:34:40] your own phone

[00:34:41] with a selfie

[00:34:42] with somebody

[00:34:43] behind you

[00:34:44] unsuspectingly

[00:34:44] and

[00:34:46] it's not

[00:34:47] the glasses

[00:34:47] it's not that

[00:34:48] technology

[00:34:48] it's the larger

[00:34:49] picture here

[00:34:50] and again

[00:34:51] this is something

[00:34:51] that as you

[00:34:52] just said

[00:34:53] Jason

[00:34:53] we've built

[00:34:54] this for

[00:34:55] ourselves

[00:34:55] I put my

[00:34:56] photos up

[00:34:57] online

[00:34:58] I put my

[00:34:59] information

[00:34:59] online

[00:35:00] I'm out

[00:35:01] there

[00:35:01] and so

[00:35:02] I've got

[00:35:02] to be aware

[00:35:03] of the

[00:35:04] implications

[00:35:04] of that

[00:35:04] if someone

[00:35:05] wants to

[00:35:05] use it

[00:35:06] with malign

[00:35:07] intent

[00:35:08] yeah

[00:35:10] yeah I mean

[00:35:11] I think it's

[00:35:11] an interesting

[00:35:12] I think that

[00:35:13] the technology

[00:35:14] that they

[00:35:14] showed off

[00:35:15] is an

[00:35:15] interesting

[00:35:17] project

[00:35:18] to

[00:35:19] kind of

[00:35:20] demonstrate

[00:35:21] like it

[00:35:21] felt very

[00:35:22] I think this

[00:35:23] is what

[00:35:23] this is what

[00:35:24] resonated I

[00:35:25] think with

[00:35:25] people who

[00:35:25] were scared

[00:35:26] of it

[00:35:26] is that

[00:35:26] it felt

[00:35:27] like a

[00:35:28] real world

[00:35:29] example

[00:35:30] of a

[00:35:30] futuristic

[00:35:31] idea

[00:35:31] slash

[00:35:32] concept

[00:35:32] is that

[00:35:34] you know

[00:35:34] how often

[00:35:35] do we

[00:35:35] see in

[00:35:36] science

[00:35:36] fiction

[00:35:37] these

[00:35:38] heads up

[00:35:39] displays

[00:35:39] over the

[00:35:40] top of

[00:35:40] some

[00:35:40] robots

[00:35:41] you know

[00:35:42] face

[00:35:42] where they're

[00:35:43] identifying

[00:35:43] in real

[00:35:43] time

[00:35:44] oh you

[00:35:44] know

[00:35:44] height

[00:35:45] weight

[00:35:45] that person

[00:35:46] went to

[00:35:46] school

[00:35:47] with this

[00:35:47] blah blah

[00:35:47] blah and

[00:35:48] I think

[00:35:49] to a certain

[00:35:50] degree

[00:35:50] our minds

[00:35:50] go to

[00:35:51] the worst

[00:35:51] possible

[00:35:52] place

[00:35:53] because of

[00:35:54] that

[00:35:54] without

[00:35:55] and so

[00:35:56] then the

[00:35:56] glasses

[00:35:57] become

[00:35:57] the

[00:35:58] demonization

[00:35:59] and I'm

[00:36:00] not even

[00:36:00] saying that

[00:36:01] something like

[00:36:01] this deserves

[00:36:02] to exist

[00:36:03] or not

[00:36:03] exist

[00:36:03] I fully

[00:36:04] admit that

[00:36:05] there are

[00:36:05] serious

[00:36:06] you know

[00:36:07] there are

[00:36:07] serious privacy

[00:36:08] implications

[00:36:09] let's say

[00:36:10] it feels

[00:36:11] like

[00:36:11] invasiveness

[00:36:12] to have

[00:36:13] someone walk

[00:36:13] up to me

[00:36:14] with a camera

[00:36:14] and immediately

[00:36:15] know who I

[00:36:16] am and identify

[00:36:17] me

[00:36:17] and I

[00:36:17] don't

[00:36:18] particularly

[00:36:19] like that

[00:36:20] but I

[00:36:20] think getting

[00:36:21] angry at

[00:36:22] the glasses

[00:36:24] is

[00:36:24] kind of

[00:36:25] the wrong

[00:36:26] direction

[00:36:27] of that

[00:36:28] frustration

[00:36:29] because

[00:36:29] really

[00:36:30] you know

[00:36:31] it's like

[00:36:31] that Radiohead

[00:36:32] song

[00:36:32] which maybe

[00:36:33] you've not

[00:36:33] heard it

[00:36:34] or maybe

[00:36:34] you have

[00:36:34] but you

[00:36:35] did it

[00:36:36] to yourself

[00:36:36] it's true

[00:36:37] and that's

[00:36:38] why it

[00:36:38] really hurts

[00:36:39] which is

[00:36:40] which is

[00:36:40] you know

[00:36:41] which is

[00:36:41] really what

[00:36:42] my whole

[00:36:43] book is

[00:36:43] about

[00:36:43] too

[00:36:43] yes

[00:36:44] the internet

[00:36:44] is what

[00:36:45] we made

[00:36:46] and we're

[00:36:46] there

[00:36:46] but you look

[00:36:48] at that

[00:36:48] function

[00:36:48] from the

[00:36:49] other end

[00:36:49] I'm an

[00:36:50] old guy

[00:36:50] and I have

[00:36:51] always been

[00:36:52] terrible with

[00:36:53] names

[00:36:53] awful

[00:36:54] awful

[00:36:54] awful

[00:36:55] with names

[00:36:55] yeah me too

[00:36:56] I'm not

[00:36:56] great with

[00:36:56] faces either

[00:36:57] and so

[00:36:58] it would be

[00:36:59] a blessing

[00:36:59] and a way

[00:37:00] for me

[00:37:00] to interact

[00:37:01] better

[00:37:01] with people

[00:37:01] if I say

[00:37:02] that's right

[00:37:02] that's her

[00:37:03] name

[00:37:03] in fact

[00:37:03] I'm at

[00:37:04] such a point

[00:37:05] where I

[00:37:05] try very

[00:37:06] hard to

[00:37:06] never say

[00:37:07] a name

[00:37:08] because I'll

[00:37:09] get it

[00:37:09] wrong

[00:37:09] and then

[00:37:10] it's really

[00:37:10] embarrassing

[00:37:11] and so

[00:37:12] I get

[00:37:12] names wrong

[00:37:13] when I know

[00:37:13] someone very

[00:37:14] very well

[00:37:15] I still

[00:37:15] get names

[00:37:16] wrong

[00:37:16] well you

[00:37:17] actually just

[00:37:18] a minute

[00:37:18] ago

[00:37:18] I said

[00:37:20] Jason

[00:37:21] if you

[00:37:21] play back

[00:37:22] the tape

[00:37:23] there was

[00:37:23] a little

[00:37:24] beat

[00:37:25] before I

[00:37:26] said your

[00:37:26] name

[00:37:26] because I'm

[00:37:27] always scared

[00:37:27] of getting

[00:37:28] it wrong

[00:37:28] and I had

[00:37:28] to think

[00:37:28] and make

[00:37:29] sure

[00:37:29] yeah that's

[00:37:30] Jason

[00:37:30] I know

[00:37:30] it's

[00:37:30] okay

[00:37:31] that's

[00:37:31] really

[00:37:32] Jason

[00:37:32] on

[00:37:32] on

[00:37:33] Zoom

[00:37:34] I will

[00:37:34] mouse

[00:37:34] over

[00:37:35] the

[00:37:36] boxes

[00:37:36] to see

[00:37:37] if

[00:37:37] somebody's

[00:37:37] name

[00:37:37] is

[00:37:37] there

[00:37:39] guilty

[00:37:39] yep

[00:37:40] and so

[00:37:41] absolutely

[00:37:42] there's

[00:37:43] an argument

[00:37:43] to be

[00:37:43] made

[00:37:44] that just

[00:37:45] in terms

[00:37:45] of being

[00:37:45] able

[00:37:46] to

[00:37:46] identify

[00:37:46] somebody

[00:37:46] as an

[00:37:47] aid

[00:37:47] to

[00:37:48] conversation

[00:37:48] and

[00:37:49] connection

[00:37:49] is not

[00:37:50] a bad

[00:37:51] thing

[00:37:51] the question

[00:37:52] is what

[00:37:52] are our

[00:37:53] norms

[00:37:53] around

[00:37:54] that

[00:37:55] right

[00:37:55] do you

[00:37:55] do

[00:37:55] that

[00:37:56] to

[00:37:56] strangers

[00:37:57] is that

[00:37:57] only from

[00:37:58] a

[00:37:58] database

[00:37:58] of people

[00:37:59] you know

[00:37:59] is it

[00:38:00] from your

[00:38:00] contacts

[00:38:00] is that

[00:38:01] okay

[00:38:02] does it

[00:38:03] only give

[00:38:03] the information

[00:38:03] that you

[00:38:04] already know

[00:38:05] does it go get

[00:38:05] information

[00:38:06] elsewhere

[00:38:06] where does it

[00:38:07] get the

[00:38:07] information

[00:38:07] from

[00:38:07] how do you

[00:38:09] use that

[00:38:09] information

[00:38:11] there was a

[00:38:12] wonderful piece

[00:38:12] by

[00:38:13] Leisa Reichelt

[00:38:15] years ago

[00:38:16] called

[00:38:17] ambient

[00:38:17] intimacy

[00:38:19] and if you

[00:38:20] blog

[00:38:21] I'm looking at

[00:38:22] your picture

[00:38:22] so I'm going to

[00:38:23] say

[00:38:23] let's imagine

[00:38:24] Jason that

[00:38:24] you blogged

[00:38:25] that you got

[00:38:25] a new guitar

[00:38:27] and you

[00:38:28] didn't tell

[00:38:28] me that

[00:38:29] but I

[00:38:30] saw it

[00:38:30] in the

[00:38:30] blog

[00:38:30] and then

[00:38:31] I can

[00:38:32] come on

[00:38:32] the show

[00:38:32] and say

[00:38:33] hey

[00:38:33] Jason

[00:38:33] how do

[00:38:33] you like

[00:38:34] your new

[00:38:34] guitar

[00:38:35] because

[00:38:35] we've already

[00:38:36] skipped a

[00:38:36] step

[00:38:36] right

[00:38:37] and the

[00:38:37] internet

[00:38:38] does it

[00:38:38] because you

[00:38:38] willingly

[00:38:39] shared

[00:38:39] that you

[00:38:39] got it

[00:38:40] and I

[00:38:40] saw it

[00:38:41] and I

[00:38:41] follow

[00:38:41] you

[00:38:42] and you

[00:38:42] let me

[00:38:42] follow

[00:38:42] you

[00:38:43] so all

[00:38:44] of that

[00:38:44] is okay

[00:38:44] with both

[00:38:44] of us

[00:38:45] right

[00:38:45] and that's

[00:38:46] clear

[00:38:46] the norms

[00:38:47] are set

[00:38:47] there

[00:38:47] I'm not

[00:38:48] invading

[00:38:48] your privacy

[00:38:49] by saying

[00:38:50] how's

[00:38:51] the guitar

[00:38:52] right

[00:38:53] now

[00:38:55] I'll go

[00:38:56] the next

[00:38:56] step

[00:38:57] if I

[00:38:57] don't say

[00:38:58] it

[00:38:58] and I

[00:38:58] don't

[00:38:58] share

[00:38:59] it

[00:39:01] sometimes

[00:39:02] people

[00:39:03] this is

[00:39:04] going to

[00:39:04] sound

[00:39:04] really

[00:39:04] weird

[00:39:05] but there

[00:39:06] are some

[00:39:06] people

[00:39:06] I

[00:39:06] know

[00:39:06] who

[00:39:07] whenever

[00:39:07] I get

[00:39:07] a haircut

[00:39:08] will say

[00:39:08] so you

[00:39:08] got a

[00:39:09] haircut

[00:39:10] yeah

[00:39:10] and

[00:39:11] why

[00:39:12] are we

[00:39:13] doing

[00:39:13] this

[00:39:14] and I

[00:39:14] forgot

[00:39:14] that I

[00:39:15] got

[00:39:15] the

[00:39:15] haircut

[00:39:16] right

[00:39:17] so I

[00:39:18] kind of

[00:39:18] didn't

[00:39:23] think Mike

[00:39:24] is right

[00:39:24] that it's

[00:39:25] just about

[00:39:26] a camera

[00:39:26] it's just

[00:39:27] about our

[00:39:27] data

[00:39:27] that's up

[00:39:27] there

[00:39:28] it's not

[00:39:28] terribly

[00:39:29] new

[00:39:29] with this

[00:39:30] so let's

[00:39:31] let's just

[00:39:31] dial down

[00:39:32] the panic

[00:39:32] so I

[00:39:33] always like

[00:39:33] that message

[00:39:35] yeah I

[00:39:36] think before

[00:39:36] we move

[00:39:37] on on

[00:39:37] this

[00:39:37] conversation

[00:39:38] I think

[00:39:39] another

[00:39:39] thing that

[00:39:40] comes up

[00:39:40] for me

[00:39:41] is around

[00:39:42] authenticity

[00:39:44] and I

[00:39:45] could see

[00:39:45] people having

[00:39:46] an you

[00:39:47] know having

[00:39:47] issue

[00:39:48] with the

[00:39:48] fact that

[00:39:49] devices like

[00:39:50] this allow

[00:39:51] for folks

[00:39:52] like you

[00:39:53] and I

[00:39:53] who can

[00:39:54] be

[00:39:54] forgetful

[00:39:54] but but

[00:39:55] truly want

[00:39:56] to be

[00:39:57] better at

[00:39:58] that you

[00:39:59] know or

[00:39:59] you know

[00:40:00] what I

[00:40:00] mean

[00:40:01] about that

[00:40:02] kind of

[00:40:03] remembering

[00:40:04] the story

[00:40:05] or knowing

[00:40:05] about the

[00:40:06] guitar

[00:40:06] being

[00:40:06] something

[00:40:07] presented

[00:40:08] in a pair

[00:40:08] of glasses

[00:40:09] at the

[00:40:09] second that

[00:40:10] you see

[00:40:10] someone

[00:40:10] being a

[00:40:11] less

[00:40:12] authentic

[00:40:13] interaction

[00:40:14] with someone

[00:40:15] versus

[00:40:16] something else

[00:40:17] and I

[00:40:17] don't know

[00:40:18] where I'm

[00:40:18] going with

[00:40:18] that but

[00:40:19] that's

[00:40:19] just

[00:40:19] I could

[00:40:20] see that

[00:40:20] being a

[00:40:20] concern

[00:40:23] it's

[00:40:24] seduce

[00:40:25] the

[00:40:26] friend

[00:40:27] based

[00:40:27] on

[00:40:27] someone

[00:40:28] else's

[00:40:29] writing

[00:40:30] for you

[00:40:30] whispering

[00:40:30] in your

[00:40:31] ear

[00:40:31] and so

[00:40:32] can you

[00:40:33] then judge

[00:40:35] wow I'm

[00:40:36] so flattered

[00:40:36] Jeff remembered

[00:40:38] that I'm a

[00:40:38] guitarist

[00:40:39] isn't that

[00:40:39] nice

[00:40:40] well Jeff

[00:40:40] didn't

[00:40:41] Jeff called

[00:40:42] on the

[00:40:42] database

[00:40:42] to get

[00:40:43] it

[00:40:43] and he

[00:40:43] actually

[00:40:43] is a

[00:40:44] narcissistic

[00:40:45] schmuck

[00:40:45] who doesn't

[00:40:46] care about

[00:40:46] you and

[00:40:47] your talent

[00:40:47] but he

[00:40:48] does this

[00:40:48] because he

[00:40:49] knows it's

[00:40:49] going to

[00:40:49] play to

[00:40:49] your heart

[00:40:50] right

[00:40:50] that's

[00:40:51] the way

[00:40:51] to do

[00:40:52] this

[00:40:52] right

[00:40:52] so it

[00:40:53] wins him

[00:40:53] a lot

[00:40:54] of friends

[00:40:54] but he

[00:40:54] doesn't

[00:40:55] bloody

[00:40:55] deserve

[00:40:56] because

[00:40:57] Jeff's

[00:40:57] an ass

[00:40:57] and he

[00:40:59] just uses

[00:40:59] the machine

[00:40:59] to do

[00:41:00] this

[00:41:00] and you

[00:41:00] expect him

[00:41:01] to

[00:41:01] right

[00:41:01] so yeah

[00:41:03] we all

[00:41:04] have our

[00:41:04] Cyrano

[00:41:05] de Bergerac

[00:41:05] then

[00:41:07] and does

[00:41:07] that make

[00:41:08] us

[00:41:09] you use

[00:41:10] the right

[00:41:10] word

[00:41:10] I think

[00:41:10] authentic

[00:41:11] because it's

[00:41:12] not really

[00:41:12] from us

[00:41:13] or devil's

[00:41:15] advocate

[00:41:15] I wouldn't

[00:41:16] do that

[00:41:17] if I only

[00:41:17] could

[00:41:17] but I've

[00:41:18] got a

[00:41:18] crappy

[00:41:18] memory

[00:41:18] and I

[00:41:19] don't

[00:41:19] remember

[00:41:19] who you

[00:41:19] are

[00:41:20] right

[00:41:21] so we'll

[00:41:21] negotiate

[00:41:22] this and

[00:41:22] figure it

[00:41:23] out

[00:41:23] yes

[00:41:23] yeah

[00:41:24] totally

[00:41:25] totally

[00:41:25] it's as

[00:41:26] simple

[00:41:26] now as

[00:41:27] when I'm

[00:41:27] responding to

[00:41:28] an email

[00:41:29] and it

[00:41:29] tells me

[00:41:29] what to

[00:41:30] say next

[00:41:31] and I

[00:41:31] use what

[00:41:32] it says

[00:41:32] next

[00:41:32] I feel

[00:41:33] really

[00:41:33] guilty

[00:41:34] but it

[00:41:35] saved me a

[00:41:35] line of

[00:41:36] typing

[00:41:36] and it's

[00:41:37] what I was

[00:41:37] going to

[00:41:37] say anyway

[00:41:38] so I do it

[00:41:40] and Google's

[00:41:40] now going to

[00:41:40] offer with

[00:41:41] Gemini more

[00:41:42] richer

[00:41:42] responses

[00:41:44] and I

[00:41:45] feel

[00:41:46] schmucky

[00:41:46] using that

[00:41:47] but sometimes

[00:41:48] I do

[00:41:49] but sometimes

[00:41:50] you do

[00:41:50] better than

[00:41:51] not responding

[00:41:51] I would argue

[00:41:54] or suspect

[00:41:55] that the

[00:41:56] more you

[00:41:57] do the

[00:41:57] less

[00:41:58] you feel

[00:41:59] that

[00:41:59] because

[00:42:00] at least

[00:42:01] that's been

[00:42:02] my experience

[00:42:02] too from

[00:42:03] an authenticity

[00:42:04] standpoint

[00:42:04] is there

[00:42:05] are some

[00:42:05] times where

[00:42:06] those things

[00:42:06] appear

[00:42:06] and that

[00:42:07] is the

[00:42:08] thing

[00:42:08] that I

[00:42:08] was

[00:42:08] going

[00:42:08] to say

[00:42:09] and why

[00:42:10] wouldn't

[00:42:10] I want

[00:42:10] to save

[00:42:10] myself

[00:42:11] five seconds

[00:42:12] of typing

[00:42:13] I'll just

[00:42:14] hit that

[00:42:14] and at the

[00:42:15] end of the

[00:42:16] day the

[00:42:17] payload

[00:42:17] or that's

[00:42:19] not the

[00:42:19] right word

[00:42:20] but you

[00:42:20] know

[00:42:21] the downside

[00:42:22] of that

[00:42:23] is not

[00:42:24] that far

[00:42:24] down

[00:42:25] and

[00:42:25] they probably

[00:42:26] wouldn't know

[00:42:27] the difference

[00:42:27] anyways

[00:42:28] I want a

[00:42:28] next generation

[00:42:29] Gmail

[00:42:29] that

[00:42:31] doesn't wait

[00:42:32] for me to

[00:42:33] open the

[00:42:33] email to

[00:42:33] try to

[00:42:33] respond

[00:42:34] but that

[00:42:35] says

[00:42:35] here's

[00:42:36] 10 things

[00:42:37] you might

[00:42:37] want to

[00:42:37] respond to

[00:42:38] and here's

[00:42:38] what you

[00:42:38] might want

[00:42:38] to say

[00:42:41] or the

[00:42:42] email

[00:42:42] comes

[00:42:43] through

[00:42:43] and when

[00:42:44] you check

[00:42:44] your inbox

[00:42:45] it says

[00:42:46] I've

[00:42:46] prepared

[00:42:47] your

[00:42:47] response

[00:42:47] yes

[00:42:48] exactly

[00:42:49] look

[00:42:50] I

[00:42:50] haven't

[00:42:50] sent

[00:42:51] it

[00:42:51] right

[00:42:51] just

[00:42:52] open

[00:42:52] it

[00:42:52] up

[00:42:52] right

[00:42:52] and

[00:42:53] it's

[00:42:53] you know

[00:42:54] thank you

[00:42:55] you know

[00:42:55] that's

[00:42:56] coming

[00:42:56] oh that's

[00:42:56] I want

[00:42:57] that

[00:42:57] that's

[00:42:58] somewhere

[00:42:58] down the

[00:42:58] line

[00:42:58] yeah

[00:43:00] I mean

[00:43:01] yeah

[00:43:01] that's

[00:43:02] interesting

[00:43:03] anyways

[00:43:04] really

[00:43:05] really

[00:43:05] fascinating

[00:43:06] topic

[00:43:06] it was

[00:43:06] really

[00:43:07] interesting

[00:43:07] to see

[00:43:08] how

[00:43:08] people

[00:43:08] respond

[00:43:09] so thank

[00:43:09] you

[00:43:09] Mike

[00:43:10] Elgan

[00:43:10] in

[00:43:10] absentia

[00:43:11] yeah

[00:43:11] thank

[00:43:12] you

[00:43:13] Mike

[00:43:13] sorry

[00:43:13] you

[00:43:14] couldn't

[00:43:14] be

[00:43:14] here

[00:43:14] to

[00:43:15] discuss

[00:43:15] it

[00:43:15] with

[00:43:15] us

[00:43:16] I

[00:43:16] apologize

[00:43:16] about

[00:43:17] that

[00:43:19] we'll

[00:43:20] have

[00:43:20] you

[00:43:39] not

[00:43:39] to

[00:43:39] go

[00:43:39] that

[00:43:39] route

[00:43:40] and

[00:43:40] actually

[00:43:40] the

[00:43:41] timing

[00:43:41] of

[00:43:41] the

[00:43:41] market

[00:43:42] and

[00:43:42] everything

[00:43:42] it

[00:43:42] was

[00:43:42] probably

[00:43:42] a

[00:43:43] really

[00:43:43] great

[00:43:43] idea

[00:43:43] that

[00:43:43] I

[00:43:44] didn't

[00:43:44] but

[00:43:45] anyways

[00:43:45] you

[00:43:46] put

[00:43:46] in

[00:43:46] this

[00:43:46] story

[00:43:47] about

[00:43:47] Zillow

[00:43:48] and

[00:43:48] acquiring

[00:43:49] a

[00:43:50] virtual

[00:43:51] staging

[00:43:52] AI

[00:43:53] company

[00:43:54] this

[00:43:54] is a

[00:43:54] company

[00:43:54] that

[00:43:55] uses

[00:43:55] AI

[00:43:55] to

[00:43:55] create

[00:43:56] digitally

[00:43:56] staged

[00:43:57] listing

[00:43:59] images

[00:44:00] and

[00:44:01] in

[00:44:01] my

[00:44:01] short

[00:44:01] time

[00:44:02] really

[00:44:02] exploring

[00:44:03] this

[00:44:04] world

[00:44:04] I

[00:44:05] found

[00:44:05] out

[00:44:06] I

[00:44:07] recognized

[00:44:08] through

[00:44:08] a couple

[00:44:09] of

[00:44:09] friends

[00:44:09] of mine

[00:44:09] who

[00:44:09] are

[00:44:09] agents

[00:44:10] just

[00:44:10] the

[00:44:11] cost

[00:44:11] and

[00:44:11] the

[00:44:11] time

[00:44:12] and

[00:44:12] the

[00:44:13] effort

[00:44:13] that

[00:44:13] goes

[00:44:13] into

[00:44:14] staging

[00:44:15] places

[00:44:16] and

[00:44:16] yes

[00:44:17] there's

[00:44:17] value

[00:44:18] to

[00:44:18] that

[00:44:18] because

[00:44:19] beyond

[00:44:20] just

[00:44:20] like

[00:44:20] the

[00:44:20] photos

[00:44:21] that

[00:44:21] are

[00:44:21] taken

[00:44:21] on

[00:44:22] a

[00:44:22] listing

[00:44:22] because

[00:44:23] people

[00:44:23] when

[00:44:24] they

[00:44:24] walk

[00:44:24] into

[00:44:24] a

[00:44:24] room

[00:44:25] that's

[00:44:26] that's

[00:44:27] professionally

[00:44:28] staged

[00:44:29] you get

[00:44:29] a different

[00:44:29] sense

[00:44:30] of

[00:44:30] what it

[00:44:31] could be

[00:44:31] for

[00:44:31] yourself

[00:44:32] but

[00:44:33] people

[00:44:34] there is

[00:44:34] also

[00:44:34] the

[00:44:35] world

[00:44:35] of

[00:44:35] digital

[00:44:36] staging

[00:44:36] which

[00:44:37] is

[00:44:37] basically

[00:44:38] taking

[00:44:38] an

[00:44:38] image

[00:44:39] of

[00:44:39] the

[00:44:39] empty

[00:44:39] room

[00:44:39] and

[00:44:40] putting

[00:44:40] in

[00:44:41] furniture

[00:44:41] and

[00:44:41] this

[00:44:42] seems

[00:44:43] like

[00:44:43] a

[00:44:43] solution

[00:44:45] that

[00:44:45] AI

[00:44:46] is

[00:44:47] almost

[00:44:47] perfect

[00:44:48] for

[00:44:48] when I

[00:44:49] think

[00:44:49] about

[00:44:49] the

[00:44:49] strengths

[00:44:50] of

[00:44:50] AI

[00:44:50] it

[00:44:51] really

[00:44:51] does

[00:44:51] I

[00:44:51] I'm

[00:44:52] addicted

[00:44:52] to

[00:44:53] HGTV

[00:44:54] and all

[00:44:54] those

[00:44:54] home

[00:44:55] shows

[00:44:55] I

[00:44:56] have

[00:44:57] been

[00:44:57] addicted

[00:44:57] to

[00:44:58] This

[00:44:59] Old

[00:44:59] House

[00:45:00] because

[00:45:00] I

[00:45:00] am

[00:45:00] clumsy

[00:45:01] as

[00:45:01] hell

[00:45:01] with

[00:45:01] a

[00:45:01] hammer

[00:45:02] so

[00:45:02] I

[00:45:02] lived

[00:45:03] vicariously

[00:45:03] through

[00:45:04] the

[00:45:05] hosts

[00:45:05] and

[00:45:06] watched

[00:45:06] them

[00:45:06] hammer

[00:45:07] nails

[00:45:07] and

[00:45:08] felt

[00:45:08] like

[00:45:08] I

[00:45:08] was

[00:45:08] doing

[00:45:08] something

[00:45:09] to

[00:45:09] me

[00:45:09] major

[00:45:10] construction

[00:45:11] is

[00:45:12] putting

[00:45:12] together

[00:45:13] an

[00:45:13] IKEA

[00:45:13] chair

[00:45:14] that's

[00:45:15] a

[00:45:15] big

[00:45:15] deal

[00:45:15] if

[00:45:16] I

[00:45:16] can

[00:45:16] follow

[00:45:16] the

[00:45:17] things

[00:45:17] right

[00:45:17] so

[00:45:18] I

[00:45:19] love

[00:45:19] the

[00:45:19] home

[00:45:19] stuff

[00:45:19] I

[00:45:20] love

[00:45:20] that

[00:45:20] And it really occurs to me that staging is extremely expensive, and basically all of these shows where we remake your home and at the end, oh, it's beautiful, a lot of it isn't what they did in construction, it's how they staged it.

[00:45:51] and all the pictures, I died knowing it's at least cleaned up, if not staged.

[00:45:57] What interests me here, Jason, I'm curious to hear your thoughts on this, is that, so if I, obviously if somebody went in

[00:46:22] can even stage it myself, as a, no, I don't like gray couches.

[00:46:26] I want those couches to be purple. I love purple, right?

[00:46:29] Could it do that for me? Could it stage it the way I

[00:46:52] a large part of the process of selling real estate to someone is getting that person who is the buyer to see themselves in the home.

[00:47:02] If you can get them to start, like, one big tell is if you're an agent, you're walking through a home with someone and you hear someone say, oh, and this could be Timmy's room, like, those are signs that